Never Trust An LLM

The video argues that LLMs frequently hallucinate and that this unreliability persists across many interactions, despite the author’s overall positive view of their usefulness. It previews three focal areas: types of hallucinations, why they happen, and practical workarounds for day-to-day use.

Hallucinations in LLMs are real and persistent, but with proper use (and tool-assisted search) LLMs are dependable for specific tasks like coding. Their extrinsic knowledge should never be trusted blindly.

Summary

Matt Pocock argues that large language models (LLMs) hallucinate in many ways and that the problem is systemic, not occasional. He traces the taxonomy of hallucinations to a comprehensive study, then explains intrinsic versus extrinsic knowledge and why compression during training leads to imperfect recall. Real-world examples—Google Bard misquoting JWST findings, Air Canada's bereavement policy mishandling, and fabricated entities—illustrate how even smart models can mislead. Pocock connects these failures to how benchmarks reward guessing over uncertainty, citing OpenAI’s Why Language Models Hallucinate as the rationale. He emphasizes that relying on intrinsic information (data you feed the model) yields far better accuracy, especially for coding tasks. To bridge the gap, he recommends turning on web/search tools and explicitly prompting the model to use them, so answers come from cited sources. Finally, he urges viewers to verify critical information by reading the underlying documents and to share the video to foster healthier, more critical usage of LLMs. Pocock also plugs his Claude-based coding course and newsletter for engineers who want to leverage AI without blind trust.

Key Takeaways

- After the JWST error appeared in Bard’s advert, Alphabet’s share price dropped by about 8%.

- Accuracy depends on whether information is intrinsic or extrinsic: context provided in the chat is far more reliable than guesses drawn from training data.

- Fabricated entities—fake packages, government departments, or laws—are a recurrent risk in LLM outputs.

- Contextual inconsistency can occur even when the user-provided context is explicit, leading to wrong answers in real cases like bereavement policies.

- OpenAI’s Why Language Models Hallucinate explains that models are rewarded for guessing, not admitting uncertainty, which drives hallucinations.

- Using the model’s web search tools (or prompting “Use your search tool”) helps pull in authoritative sources and reduces reliance on extrinsic guesses from training data.

- For high-stakes domains (health, law), always read the source documents yourself and don’t rely solely on LLM output.

Who Is This For?

Essential viewing for software developers and engineers who use LLMs for coding, debugging, or research. It helps viewers understand the limits, adopt safer workflows, and learn practical tricks to reduce hallucinations.

Notable Quotes

“LLMs lie. They lie all the time. They lie in different ways. They lie so often it's been given a term: hallucination.”

—Introduction to the core problem of LLM hallucinations and why trust is an issue.

“There are two types of hallucinations. There are hallucinations based on intrinsic information and extrinsic information.”

—Defines intrinsic vs extrinsic information with simple examples.

“The first one is factual errors… JWST took the very first pictures of a planet outside of our own solar system.”

—Example of a factual hallucination from Bard’s advert.

“Why Language Models Hallucinate… because the training and evaluation procedures reward guessing over acknowledging uncertainty.”

—Cites OpenAI work to explain why models tend to guess.

“Use your search tool… then it will pull the articles into its context and answer based on those articles.”

—Practical tip to reduce hallucinations by leveraging built-in search tools.

Questions This Video Answers

- How do intrinsic and extrinsic information differ in LLMs and why does it matter for accuracy?

- What is contextual inconsistency in LLMs and can you see concrete examples in real-world apps?

- How can I safely use LLMs for coding without being misled by hallucinations?

- What does OpenAI’s Why Language Models Hallucinate say about evaluation and benchmarks in LLMs?

- What practical steps can I take to verify critical information produced by an LLM?

LLM hallucinations, Intrinsic vs extrinsic information, Contextual inconsistency, Fabricated entities, OpenAI hallucination research, Web search tools in LLMs, Claude/AI tooling for engineers

Full Transcript

LLMs lie. They lie all the time. They lie in different ways. They lie so often it's been given a term: hallucination. These hallucinations are so unbelievably common that I am now paranoid about everything an LLM says to me. And I will never ever trust an LLM. I'm a software developer, and every single other software developer I speak to has this same intuition, having worked with LLMs for the past 6 months. Maybe you also have this intuition, but maybe someone you know doesn't. Well, I'm making this video so that you can share it with that person so that they never again trust an LLM implicitly.

I'm finding that even the very very smart people in my life for some reason don't have this intuition that LLMs hallucinate all the time. Now, don't get me wrong, I'm really pro-LLM. I really like LLMs. I think LLMs have massively improved my quality of life in terms of my job, in terms of what they can produce. I think LLMs are great, but they have massive downsides. And because they're presented so beautifully, people just don't realize that. So, we're going to work through three things. We're going to look at all the different types of hallucinations.

We're going to look at why hallucinations even happen in the first place and why it's such a hard problem to solve. And then we're going to look at how to work around them when you're working with LLMs day-to-day. I'm going to explain these to you in simple terms because the simple terms are the only ones I really know. I've been working with LLMs for, you know, I don't know, a year and a half or something, but I'm not a machine learning expert. I don't work at OpenAI or anything. I'm just coming at this as someone who likes to talk about LLMs and who likes to use them day-to-day.

I'm going to reference a couple of academic papers which I will put below. In fact, the first one is this comprehensive taxonomy of hallucinations in large language models. In other words, these guys went and looked at all of the different ways that language models can hallucinate and figured out the exact taxonomy of what types of hallucinations can happen. Perfect for us. The first one is pretty easy to think about. It's factual errors. This has been present since the very beginning of LLMs. For instance, when Google announced Bard, its experimental conversational AI service powered by LaMDA, the prompt was, "What new discoveries from the James Webb Space Telescope can I tell my 9-year-old about?" And Bard said JWST took the very first pictures of a planet outside of our own solar system.

This was in the freaking advert. They didn't even try to road test this. This is just wrong. It's a hallucination. After posting this, Alphabet's share price dropped like 8%. Now, it's crucial to say here that in this case, they didn't pass the LLM a document explaining all about the James Webb Space Telescope and the new discoveries. It seems like they just asked it based on its training data. This is a super important distinction that the paper actually makes. There are two types of hallucinations. There are hallucinations based on intrinsic information and extrinsic information. Intrinsic information is stuff that you've sent to the LLM during this conversation with the LLM.

For instance, I'll go on Anthropic here and I'll tell it, "My cat is called Bandit." It gives some reply here saying that's a great name for a cat. Wonderful. Thank you. And now I'll ask it, "What is my cat's name?" Then of course it says, "Your cat's name is Bandit. You just told me in your previous message." So if the LLM for some reason got that wrong, then it would be an intrinsic hallucination. Whereas if I start a new conversation with the LLM and I say, "What is my cat's name?" Then very good. In this case, it has not attempted to guess my cat's name.

It's just saying I don't have any information about your cat in my context. And so if it attempted to guess here, then it would be an extrinsic hallucination. The next type of hallucination is fabricated entities, inventing stuff that just doesn't exist. This is actually really important for developers because developers rely on these things called packages that package up useful tools to help them do their work. So if you ask an LLM, does a package exist for this purpose? Then it's very likely to just say, yep, it does. This has personally happened to me dozens of times, and it's now opening up developers to supply chain malware attacks.
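One defensive habit that follows from this is to treat every package name an LLM suggests as unverified until it has been checked against something you trust. Here is a minimal sketch in Python, assuming a hypothetical allowlist called `APPROVED_PACKAGES` that holds names a human has already vetted. The function name and the sample package names are illustrative and do not come from the video.

```python
# Hypothetical allowlist of packages that a human has already vetted.
APPROVED_PACKAGES = {"requests", "numpy", "pydantic"}


def split_suggestions(suggested, approved=APPROVED_PACKAGES):
    """Split LLM-suggested package names into (vetted, needs_review)."""
    vetted, needs_review = [], []
    for name in suggested:
        normalized = name.strip().lower()
        if normalized in approved:
            vetted.append(normalized)
        else:
            needs_review.append(normalized)
    return vetted, needs_review


# "fast-json-magic" is a made-up name standing in for a possibly fabricated package.
vetted, needs_review = split_suggestions(["requests", "fast-json-magic"])
print("already vetted:", vetted)
print("check the registry by hand first:", needs_review)
```

Anything that lands in `needs_review` is a prompt to look up the package's registry page and repository yourself before installing it, since a name that merely exists could still have been registered by an attacker.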

Attackers can exploit a common AI experience, false recommendations, to spread malicious code via developers that use chat to create software. This is an old article, but this literally just happened to me the other day. And it's not just packages. LLMs will make up government departments. They will make up laws that don't exist. They will make up all sorts of things. The next one is contextual inconsistency. In other words, ignoring or contradicting context that you explicitly provided. This would be an intrinsic hallucination like what we saw before. This article is from 2024: Air Canada found liable for chatbot's bad advice on plane tickets.

This guy called Jake Moffatt asks the Air Canada chatbot about their bereavement policy. And even though the bereavement policy was probably in the LLM's context, it had been explicitly told about it, explicitly passed into its context, it just made something up. It said, "If you need to travel immediately or you've already traveled and would like to submit your ticket for a reduced bereavement rate, kindly do so within 90 days of the date your ticket was issued." But when he tried to get his money back, Air Canada basically responded and admitted the chatbot had provided misleading words.

In other words, a contextual inconsistency. An inconsistency with something that the LLM just had in its own context window. This would be kind of like the LLM not knowing my cat's name just after I gave it to it. It's baffling that these things occur, but they do. Now, I could go through all 10 of these terms from this paper and try to explain to you about each and every one of them, but I think you're starting to get the picture. LLMs are unreliable, especially if you're relying on their extrinsic knowledge. My experience with LLMs is that they're much much more reliable when you send them the information first.

Of course, why wouldn't they be? But if you're relying on their extrinsic knowledge from their training set, then you're going to be disappointed. We should talk in a kind of basic sense about why this is. First of all, the process of training an LLM, of taking a bunch of training data and turning it into something smaller, is essentially a compression. You take, let's say, a massive file with all of the stuff that you've gathered over the course of all of your data scraping, huge amounts of data sets about all the different, you know, text or everything on the internet, let's say.

You then through some very clever mechanisms that I won't go into, you compress it down into a much smaller size that can fit on a GPU. And this compressed version of that training set is what we can think of as the LLM's brain or its memory or its data store. And the output here is much much much smaller than the input. Now, when you're taking some massive training set and you're squishing it down into a smaller size, you're going to lose stuff. For instance, let's take this photo of this beautiful man. And let's compress it a little bit.

Okay, it's not bad. You can still see it's me, but I've lost a bit of definition here. You can't quite tell how many wrinkles there are on my forehead, for instance. We compress it a bit more, and the skin has been kind of smoothed out. I've lost a lot of definition. And you go all the way and I just end up like this blobby-looking person that you can barely recognize as me. When you ask an LLM a question and it's not in their training set, I want you to imagine that this is the picture that they're seeing of the information they have.

Just a blobby, crappy, hypercompressed version of the information that was once there. And look, you can still get good answers out of this image. It can still say, okay, what color is the little cap on the end of my microphone here? It is blue, right? That is visible from the image. You could probably just about say that this man doesn't have any hair on his head, too. But if you ask about who it is or how old this person is or, you know, any of that, it's not going to have a clue. So, the question then becomes, if LLMs only have this crappy low res version of all of the information in the world, why are they so insistent on guessing?
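The photo analogy can be made concrete with a toy lossy compressor. This is a minimal sketch of the analogy only, not of how LLM training works: it assumes a made-up list of numbers standing in for detail, and the helper names `compress` and `decompress` are hypothetical. Each block of values is replaced by its average, so the rough shape survives but the fine detail cannot be recovered.

```python
def compress(values, block_size):
    """Lossy compression: keep only the average of each block of values."""
    averages = []
    for start in range(0, len(values), block_size):
        block = values[start:start + block_size]
        averages.append(sum(block) / len(block))
    return averages


def decompress(averages, block_size, original_length):
    """Rebuild a full-length list by repeating each block average."""
    rebuilt = []
    for avg in averages:
        rebuilt.extend([avg] * block_size)
    return rebuilt[:original_length]


# Made-up data with one sharp detail: the 90 in the first half.
original = [10, 12, 90, 11, 10, 13, 12, 11]
compressed = compress(original, block_size=4)
restored = decompress(compressed, block_size=4, original_length=len(original))

print(compressed)  # two numbers instead of eight
print(restored)    # the first half is still "higher", but the spike is gone
```

Questions about the coarse picture (is the first half larger than the second?) can still be answered from `restored`. Questions about the detail (where was the spike, and how tall was it?) can only be guessed at.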

Because an LLM presented with this information and you asked who they are, they will often just have a go and guess. Now, the answer for this comes from the second paper that I want to link to, which is below. It's from OpenAI and the title is Why Language Models Hallucinate. Like students facing hard exam questions, large language models sometimes guess when uncertain, producing plausible yet incorrect statements instead of admitting uncertainty. We argue that the language models hallucinate because the training and evaluation procedures reward guessing over acknowledging uncertainty. Now, what do they mean by evaluation procedures?

What are they talking about? Well, LLMs prove that they're getting better over time by showing their numbers in benchmarks. For instance, here this one is LiveBench. I mean, I think this is a leaderboard of multiple benchmarks. And you can see that all of the top models here are rated a number out of 100 on how good they are at different things. For instance, reasoning, coding, agentic coding, mathematics, data analysis, language, etc. Being at the top of these leaderboards is incredibly valuable for these companies. If you can say you have the best model in the world at something, then everyone who's doing that thing is going to want to use your model because it's actually really easy to swap models.

You just like stop using one thing and go and use another thing. And there's a tension that these benchmarks introduce because if your model is really well tuned to say I don't know something when it comes to let's say maths, then it's probably not going to score very well on the maths benchmark because it might just be better on average for it to have a guess. In other words, you miss all of the shots that you don't take. And so LLMs try to take as many shots as possible. In fact, there's kind of a tension inherent in designing LLMs.

And you can think of this kind of by thinking of it in human terms. It's quite rare to meet a person who's really, really smart, but also really humble about their smartness. People who are really smart, especially in an exam context, will probably be smart enough to get close to the right answer and will trust themselves and be confident enough to actually work it out. But people who are humble enough to say, "I don't know," will probably go in the opposite direction where they might not be confident enough to actually get it done. However, if you're really smart, you're often going to be way too confident about your own answers.

And this will lead to hallucinations. In other words, all the stuff that we've been talking about so far. But if you're on the other side and you're not confident enough, then you're probably not going to do really well in the domains where you need really, really deep thinking. And the people who grade LLMs and who train LLMs need to figure out somewhere on this line. And I'm not sure it's a solvable problem. Or rather, it might be solvable, but it's going to be someone way above my pay grade. So to sum it up, LLMs hallucinate because guessing is more highly rewarded than refusal, which to be honest is kind of true in most walks of life anyway.
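The incentive described here comes down to a small expected-value calculation. The sketch below is illustrative only: it assumes a grader that gives 1 point for a correct answer and 0 for "I don't know", and it introduces a hypothetical `wrong_penalty` parameter to show how the incentive flips once wrong answers cost something. The function names and numbers are assumptions, not taken from any benchmark.

```python
ABSTAIN_SCORE = 0.0  # "I don't know" earns nothing under both schemes


def expected_score(p_correct, wrong_penalty=0.0):
    """Expected score for answering: +1 if right, -wrong_penalty if wrong."""
    return p_correct * 1.0 - (1.0 - p_correct) * wrong_penalty


def best_action(p_correct, wrong_penalty=0.0):
    """Return 'guess' if guessing beats abstaining in expectation, else 'abstain'."""
    if expected_score(p_correct, wrong_penalty) > ABSTAIN_SCORE:
        return "guess"
    return "abstain"


# Right-or-wrong grading with no penalty: a 10% chance of being right still favors guessing.
print(best_action(0.10))
# With a 1-point penalty per wrong answer, the same 10% chance favors abstaining.
print(best_action(0.10, wrong_penalty=1.0))
# Under that penalty, guessing only pays once the model is more likely right than wrong.
print(best_action(0.60, wrong_penalty=1.0))
```

Under plain right-or-wrong grading, any non-zero chance of being correct makes a guess worth more than a refusal, which is the "you miss all of the shots that you don't take" effect.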

So then, we now know how LLMs hallucinate and we kind of understand why LLMs hallucinate. But why, after all of that, am I still so pro-AI? Why do I still like AI? Well, it's because when you actually pass them intrinsic information, if you actually send them stuff, if you get them to peruse a big document or something, then they tend to be really really accurate, way more accurate than with extrinsic information. So when I'm coding, for instance, I can pass my codebase or, you know, big files to the LLM and get it to answer questions about it, get it to explore the code, and it will give me really really good insights as to what it's doing.

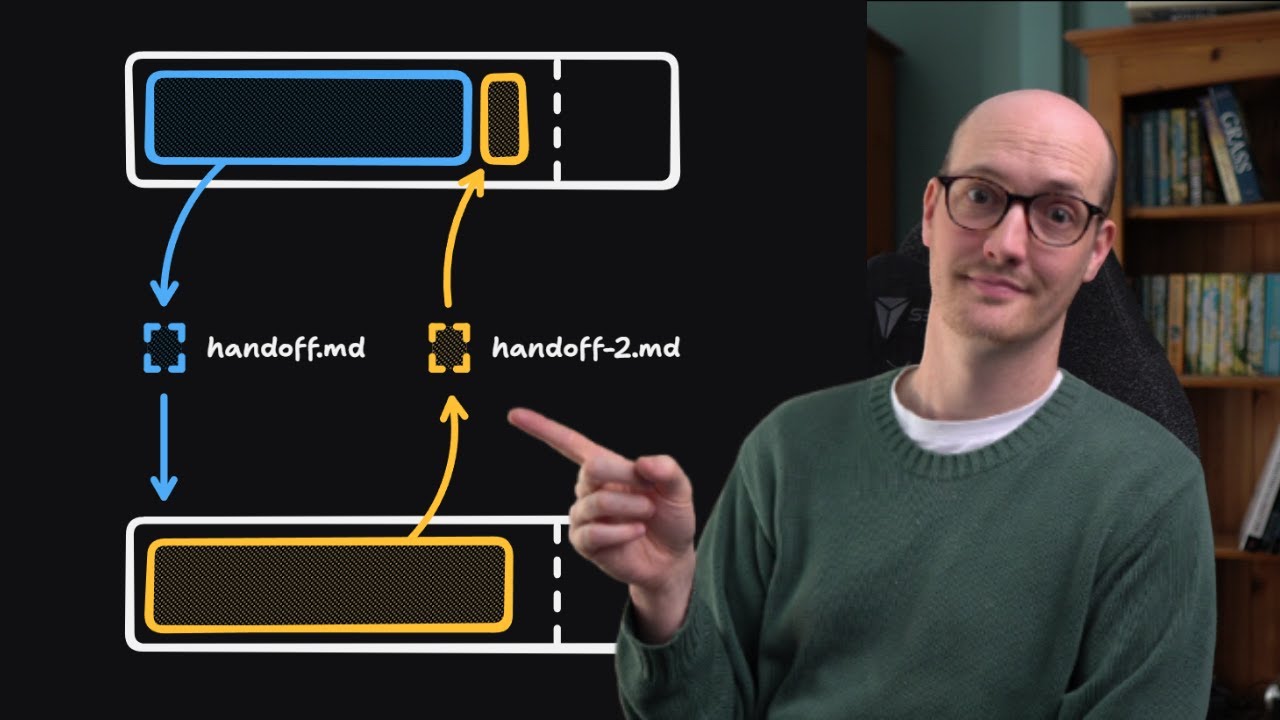

In other words, when you're using AI, you need to always make sure that you're providing it the information it needs to succeed. But you might ask, that doesn't make any sense, because I often use LLMs for searching for information. If I have a question that I don't have the answer to, I can't use this intrinsic stuff. All I have to rely on is extrinsic stuff. So, I'm going to tell you about a four-word prompt that will allow you to get the LLM to fetch intrinsic information that it doesn't yet have. Use your search tool.

Most chatbots and most, let's call them, agent harnesses have some kind of tool that the LLM can use in order to search the web. For instance, in Claude here, if we go to files, connectors, and blah blah blah blah blah, then we have this little web search thing just inside there. And I always have this turned on. And for instance, I can ask it which Arsenal players are injured right now. And it will go and search the web and try to find the information. So here we go. It's saying here's the current Arsenal injury picture, blah blah blah blah blah.

And as you can see, there are citations showing where it's got the information from. Now, it's done a good job this time because, you know, Sonnet is a good model and I like this tool in general. But many tools will not by default go and do this, especially if the model is confident that it knows the answer from its training set and doesn't need to use its search tool. In other words, in order to use its search tool, it needs to be humble enough to be able to say, "I don't know. I need to go and fetch this." And so, if it's like tuned to be really smart, it might just go, "Oh, I know the answer.

Let me just repeat it to you." And so, whenever you're prompting the LLM to ask it for information, say, "Use your search tool," because then it will pull the articles into its context and it will answer based on those articles and you'll be less likely to get hallucinations because you're relying on intrinsic information, information in the context window. However, remember there's a category of hallucinations called contextual inconsistency. In other words, even stuff that you provide explicitly to the LLM might just be ignored. Even if you're an airline and you have a chatbot with a bereavement policy explicitly passed to it, it might still get it wrong.
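If you send questions to a model from a script rather than typing them by hand, the "Use your search tool" habit is easy to bake in. This is a minimal sketch with a hypothetical helper, `with_search_instruction`. It only builds the prompt text. Whether the model actually has a search tool enabled depends on the chat client or agent harness, which is outside this snippet.

```python
SEARCH_INSTRUCTION = "Use your search tool."


def with_search_instruction(question):
    """Append the search instruction unless the question already contains it."""
    question = question.strip()
    # Compare case-insensitively and ignore the trailing full stop.
    if SEARCH_INSTRUCTION.lower().rstrip(".") in question.lower():
        return question
    return f"{question} {SEARCH_INSTRUCTION}"


print(with_search_instruction("Which Arsenal players are injured right now?"))
```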

And so for really critical stuff, for stuff that's life or death, for health-related stuff, especially for legal stuff, you basically need to ask it, use your search tool, but you then need to go and actually read the documents yourself. This happens all the time for me when I'm coding, too. The LLM will read some code and it might just misinterpret it or not know the full context and so it spews something out very confidently and I then have to say, "No, that's actually not quite right." So hopefully this video has given you a bit more understanding about what the limits of LLMs are and how you can work around them.
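Part of reading the documents yourself can be supported by a simple check: if the model claims to be quoting a source, confirm the quoted words are really there. Below is a minimal sketch with a hypothetical helper, `unsupported_quotes`. The policy text and the claims are made up for illustration and are not Air Canada's actual wording.

```python
def unsupported_quotes(quotes, source_text):
    """Return the quotes that do not appear verbatim in the source text."""
    # Collapse whitespace and ignore case so line breaks do not cause false alarms.
    normalized_source = " ".join(source_text.lower().split())
    missing = []
    for quote in quotes:
        normalized_quote = " ".join(quote.lower().split())
        if normalized_quote not in normalized_source:
            missing.append(quote)
    return missing


# Made-up policy text and made-up model claims, for illustration only.
policy = "Bereavement fares must be requested before travel. Refunds are not retroactive."
claims = [
    "Bereavement fares must be requested before travel.",
    "You may apply for a refund within 90 days of travel.",
]

print(unsupported_quotes(claims, policy))
```

A verbatim match only shows that the words exist in the source. It says nothing about whether the model's interpretation is right, so this narrows down what to read rather than replacing the reading.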

And if you have someone in your life who you want to be better with LLMs or you notice them just slightly using them wrong, then maybe send them this video and hopefully we can build a world where people don't trust LLMs and instead use them for what they're good at. For instance, they are very very good at writing code and I'm running a Claude Code for real engineers course over the next two weeks. If you dig that or you dig the idea that real engineering principles can still be used in the AI age, in fact, they're better than ever, then sign up to my newsletter below or sign up to learn about when the next cohort is happening.

Thanks for watching, folks. This was a bit of a change of pace for me. I don't usually make these kind of wider videos or videos for a wider audience, but I kind of just wanted to get this out there. Thanks for watching and I will see you in the next
