How to Make Your Website Agent-Ready | Cloudflare Engineering Meetup Lisbon


Cloudflare’s Andre Zoo (Jesus for France) explains practical steps to make your website agent-ready, from sitemaps and content negotiation to MCP and commerce protocols, plus a live demo of isitagentready.com.

Summary

Andre Zoo, a Cloudflare systems engineer, walks developers through turning human-centered websites into machine-friendly, agent-ready surfaces. He stresses that agents see only a wall of HTML, CSS, and assets, so sites must be engineered for content discovery and retrieval. The talk covers established standards like robots.txt, sitemaps, and Link headers, then moves to newer ones such as Markdown content negotiation and Content Signals. Andre explains how llms.txt and llms-full.txt give agents a README-style brief of a site, and how Markdown reduces token usage for AI models. He covers access control via robots.txt, the API catalog for discovering APIs, and MCP (Model Context Protocol) as a way to give AI models context and tooling. The session also touches on commerce protocols such as x402, which let agents pay for content, and on Cloudflare’s isitagentready.com, which diagnoses a site’s readiness and suggests concrete fixes.

A live demonstration shows the agent-readiness scan, the score breakdown, and an “Improve Your Score” button that outputs actionable Markdown for LLMs. Finally, Andre presents findings from a bulk scan of the top half-million domains: classic standards like robots.txt and sitemaps are widely adopted, while newer ones (Markdown negotiation, llms.txt, MCP) show growing but incomplete uptake. The takeaway is that agent readiness is at an early stage, much like SEO was in 2003, and developers should start with a quick scan and implement the fixes that make sense.

Key Takeaways

- Expose agent-friendly navigation with sitemaps (XML) and link headers so agents can discover pages without parsing every HTML page.

- Offer an explicit content brief the agent can read via llms.txt (or llms-full.txt for the full site content) to summarize essential site information and important links.

- Enable markdown content negotiation so agents can request content as markdown, reducing token costs and preserving structure.

- Publish an API catalog and authentication guidance to help agents find and securely use your APIs.

- Adopt MCP server cards to describe your MCP server, authentication methods, and supported tools for AI agents.

- Consider implementing content-usage signals in robots.txt to indicate AI usage preferences for specific content.

- Enforce access policies at the network level with Cloudflare’s bot controls rather than simple user-agent checks, and verify bot identity with signed requests (Web Bot Auth).

Who Is This For?

Web developers and site operators who want to prepare their sites for AI agents, including those exploring Cloudflare Radar, MCP, and markdown-based content delivery. Essential for teams building APIs or dynamic content intended for agent-driven applications.

Notable Quotes

"Okay, let's start. Hi everyone, I am Andre Zoo or Jesus for France. Um, I'm a systems engineer here at Cloudflare. I mainly work on a product called Cloudflare Radar."

—Opening remarks and the speaker's credentials.

"Agents see a wall of HTML, stylesheets, and all the CSS a developer had to do just to center a div. The agent doesn't care about this."

—Explains the core problem: agents need content, not UI.

"So you can expose like a link to to your MCP server or to your API catalog and and so on."

—Demonstrates using link headers to guide agents.

"Markdown is also good because it keeps structure so it has headers and everything else that other formats have. It also reduces token usage and is the preferred format for LLMs."

—Highlights markdown negotiation benefits.

"You can define rules about access control in your robots.txt. You can disallow Google bots to crawl that specific path."

—Shows access control via robots.txt.

Questions This Video Answers

- How do I make my website agent-ready with sitemaps and robots.txt?

- What is MCP and how does it help AI models interact with my APIs?

- Can markdown content negotiation actually reduce AI token costs on my site?

- How can I test if my site is agent-ready using isitagentready.com?

- What are practical steps to publish an API catalog for AI agents?

Cloudflare Radar, Agent readiness, Robots.txt, Sitemaps, Link header, llms.txt, llms-full.txt, Markdown content negotiation, MCP (Model Context Protocol), API catalog, Authentication for agents, Content signals, x402 commerce protocol, Cloudflare bot management, isitagentready.com

Full Transcript

Okay, let's start. Hi everyone, I am Andre Zoo or Jesus for France. I'm a systems engineer here at Cloudflare, and I mainly work on a product called Cloudflare Radar. Today I'll talk about how you can make your websites agent-ready. Agents are everywhere. There are different types: coding agents like OpenCode or Claude, personal assistants that run 24/7 like OpenClaw, and other kinds. They are not just simple LLMs. They call tools, they browse the web, and they are probably already accessing your websites.

I'm sure most of you have interacted with an agent in the past week, or maybe today, or even in the last hour. I know I did, because I have OpenCode running in the background, and hopefully it's not deploying things to production. But the thing is that agents are everywhere, and the problem is that websites were built for humans, not for agents. While a human sees a beautiful UI with good navigation, animations, and images, an agent just sees a wall of HTML, stylesheets, and all the CSS a developer had to do just to center a div.

The agent doesn't care about this. It only wants to make a request and retrieve the content of your website. Most of the time, around 80% of what's in the response is not actually useful. So how do we fix this? Just like we learned to care about SEO or accessibility, now we also need to care about agent readiness. There are some standards that have been around for some time, new ones are being drafted, and today I will talk about some of them.

So let's start. First, we need to help agents navigate through your website, and probably the easiest way to do this is to use sitemaps. Sitemaps are XML files that you can expose on your website and, as the name implies, a sitemap is a map of your website: it lists all of your pages. They are referenced in the robots.txt file. You can have several of them, and you can have sitemap indexes, which are sitemaps that point to other sitemaps. This is also a good way to improve your SEO, because search engine crawlers like Googlebot also use sitemaps to crawl your website.
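As a rough illustration of what such a file contains, here is a minimal Python sketch that builds a sitemap from a list of URLs. The `build_sitemap` helper and the example.com URLs are assumptions made for this example; only the `urlset`/`url`/`loc` layout and the namespace come from the sitemaps.org format.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(urls):
    """Return a sitemap XML string with one <url><loc> entry per URL."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")

# Hypothetical pages, used only for illustration.
sitemap_xml = build_sitemap([
    "https://example.com/",
    "https://example.com/blog/agent-ready",
])
print(sitemap_xml)
```

A crawler would typically find this file through a `Sitemap: https://example.com/sitemap.xml` line in robots.txt.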

Another thing you can do is define Link response headers. Instead of hiding links to important resources in your HTML, where crawlers have to parse the HTML and try to find them, you can expose them directly in the response headers using the Link header. When an agent makes a request, it just needs to look at the headers. You can expose, for example, a link to your MCP server or to your API catalog, and so on. So now agents can navigate through your website, but can they actually read its content?
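To make the Link header idea concrete, here is a small Python sketch that formats and reads such a header. The helper names and URLs are hypothetical. The `api-catalog` and `service-doc` relation types are registered link relations, but which relations a given site should advertise is an assumption here. The parser is deliberately simplistic and would break on URLs that contain commas.

```python
def build_link_header(links):
    """Format (url, rel) pairs as a Link header value (RFC 8288 style)."""
    return ", ".join(f'<{url}>; rel="{rel}"' for url, rel in links)

def parse_link_header(value):
    """Parse a simple Link header into {rel: url}; other parameters are ignored."""
    result = {}
    for part in value.split(","):
        target, _, params = part.strip().partition(";")
        url = target.strip().lstrip("<").rstrip(">")
        for param in params.split(";"):
            name, _, val = param.strip().partition("=")
            if name == "rel":
                result[val.strip('"')] = url
    return result

# Hypothetical resources an agent might want to discover.
header = build_link_header([
    ("https://example.com/.well-known/api-catalog", "api-catalog"),
    ("https://example.com/docs", "service-doc"),
])
links = parse_link_header(header)
print(header)
print(links["api-catalog"])
```

An agent that only needs these pointers could issue a HEAD request and never download the HTML body.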

So, back to the problem I mentioned earlier. When an LLM makes a request, it receives a bunch of stuff: files, HTML, CSS, fonts, whatever. And it only cares about the content. One thing you can do is expose an llms.txt file. It has a Markdown content type, and basically it's like a README, but for your website. You can mention important things about your website, important links, and so on. The agent just needs to fetch this file, and basically it knows the most important stuff about your website.
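A sketch of what generating such a file could look like, assuming the commonly used llms.txt layout (a title heading, a short quoted summary, then sections of annotated links). The `build_llms_txt` helper, the site name, and the links are all made up for illustration.

```python
def build_llms_txt(title, summary, sections):
    """Build llms.txt-style Markdown: title, summary, then sections of links."""
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, note in links:
            lines.append(f"- [{name}]({url}): {note}")
        lines.append("")
    return "\n".join(lines)

# Hypothetical site description.
llms_txt = build_llms_txt(
    "Example Site",
    "Blog and API documentation for the Example product.",
    {
        "Docs": [
            ("API reference", "https://example.com/docs/api", "Endpoints and auth"),
            ("Getting started", "https://example.com/docs/start", "Five-minute setup"),
        ],
    },
)
print(llms_txt)
```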

There's also llms-full.txt, which is basically this file but with all of your website's content, for crawlers that want to fetch everything at once. Then there is Markdown content negotiation, which goes a step further. With the llms.txt files, every time you update your website you also need to maintain these files. With Markdown content negotiation you don't need to, because it is dynamic. When agents request content from your website, they can say, "I would prefer to receive the content in Markdown." They do this by setting the Accept header to the text/markdown MIME type. Then either the origin server or a middleware like Cloudflare can parse this Accept header and, instead of returning HTML or another content type, convert the response to Markdown, and the agent will receive Markdown.
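The negotiation step can be sketched in a few lines of Python. This is an illustrative server-side decision only, not how Cloudflare implements its toggle. The function names are hypothetical, and the Accept parsing handles just media types and `q` values.

```python
def parse_accept(header):
    """Return {media_type: q} from an Accept header (q defaults to 1.0)."""
    prefs = {}
    for item in header.split(","):
        media_type, *params = [p.strip() for p in item.split(";")]
        q = 1.0
        for param in params:
            if param.startswith("q="):
                q = float(param[2:])
        if media_type:
            prefs[media_type.lower()] = q
    return prefs

def choose_representation(accept_header):
    """Serve Markdown only when the client asks for it at least as strongly as HTML."""
    prefs = parse_accept(accept_header)
    markdown_q = prefs.get("text/markdown", 0.0)
    html_q = max(prefs.get("text/html", 0.0), prefs.get("*/*", 0.0))
    if markdown_q > 0 and markdown_q >= html_q:
        return "text/markdown"
    return "text/html"

print(choose_representation("text/markdown, text/html;q=0.8"))
print(choose_representation("text/html,application/xhtml+xml,*/*;q=0.8"))
```

A server that varies its response this way would normally also send `Vary: Accept` so caches keep the HTML and Markdown versions apart.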

In Cloudflare you can do this with a single toggle. Markdown is also good because it keeps structure: it has headers and everything else that other formats have. But it also helps with token reduction, so it's cheaper because it uses fewer tokens, and basically it's the preferred format for LLMs. So now agents can navigate and consume your website, but you probably don't want to let every bot or every agent do it. Not all crawlers are equal.

Some of them are from companies that you know, and some of them are from startups that you've never heard of. So you can define access control rules in your robots.txt file. You can define access rules per bot, and you can go down to a specific path. For example, here we are disallowing Googlebot from crawling that specific path. robots.txt has been around for quite a long time, since 1994. I did not exist back then. And it's a really useful standard.
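The slide itself is not part of the transcript, so the rules below are an assumed stand-in: a robots.txt that blocks Googlebot from one path and allows everything else. Python's standard `urllib.robotparser` shows how a well-behaved crawler would interpret it.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: block Googlebot from /private/, allow everyone else everywhere.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /private/

User-agent: *
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

googlebot_private = parser.can_fetch("Googlebot", "https://example.com/private/report")
googlebot_blog = parser.can_fetch("Googlebot", "https://example.com/blog/")
other_private = parser.can_fetch("SomeOtherBot", "https://example.com/private/report")

print(googlebot_private, googlebot_blog, other_private)
```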

Now, Content Signals goes a step further. Instead of just saying you can or can't crawl this path, you can also declare the AI usage preference for that content. For example, you can say: okay, Googlebot, you can crawl my website, but only for search. You can't use it for training or AI input. So this is a good way to define the usage preference of your content. Now, it's also important to note that robots.txt is not enforced.
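A sketch of how the usage preference described above could be read out of robots.txt. It assumes the `Content-Signal` line syntax from the Content Signals proposal, with `search`, `ai-input`, and `ai-train` each set to `yes` or `no`. The parser and its function name are illustrative, not an official implementation.

```python
def parse_content_signals(robots_txt):
    """Map each user-agent to the content signals declared in its group."""
    signals = {}
    current_agents = []
    reading_agents = False
    for raw in robots_txt.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments
        if ":" not in line:
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        field = field.lower()
        if field == "user-agent":
            if not reading_agents:
                current_agents = []  # a new group starts here
            current_agents.append(value)
            reading_agents = True
            continue
        reading_agents = False
        if field == "content-signal":
            prefs = {}
            for pair in value.split(","):
                name, _, setting = pair.strip().partition("=")
                prefs[name.strip()] = setting.strip().lower() == "yes"
            for agent in current_agents:
                signals.setdefault(agent, {}).update(prefs)
    return signals

# Assumed example: allow crawling for search, but not for training or AI input.
ROBOTS_WITH_SIGNALS = """\
User-agent: Googlebot
Content-Signal: search=yes, ai-train=no, ai-input=no
Allow: /
"""

signals = parse_content_signals(ROBOTS_WITH_SIGNALS)
print(signals)
```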

It's just a file that you host on your website. We expect that well-behaved bots will follow it, but others won't. So you need to enforce these rules at the network level, and to do that there's a Cloudflare product called AI Crawl Control that leverages Cloudflare's bot detection to block requests from specific bots. Now, we could also do this using the user agent, but that's not a very good idea, because anyone can spoof a user agent.

I can just set the User-Agent header to Googlebot and bypass any user-agent detection. There's a protocol, or a standard, called Web Bot Auth that basically helps with this problem. The way it works is that bot operators generate a key pair, a public and a private key. They publish the public key at a well-known path, and then they use the private key to sign their bot's requests. Then you, or a middleware like Cloudflare, can identify the source of the request using that signature and make sure that the request came from that bot.

This is also useful. Now, agents can not only navigate through your website, they can also interact with your APIs. But first they need to find them. So you can host a file called the API catalog, which is basically a catalog of all of your APIs. You can include things like the status of your API service and the API documentation, and agents just need to fetch this file and read the information to know where your APIs are.
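As an illustration of what such a catalog might contain, the sketch below builds one in the Linkset JSON shape used by the api-catalog well-known URI (RFC 9727), as far as that layout is understood here. The `build_api_catalog` helper, the API URLs, and the chosen media types are assumptions for the example.

```python
import json

def build_api_catalog(apis):
    """Build a Linkset-style catalog: one entry per API with spec, docs, and status links."""
    entries = []
    for api in apis:
        entries.append({
            "anchor": api["url"],
            "service-desc": [{"href": api["spec"], "type": "application/yaml"}],
            "service-doc": [{"href": api["docs"], "type": "text/html"}],
            "status": [{"href": api["status"], "type": "application/json"}],
        })
    return {"linkset": entries}

# Hypothetical API, for illustration only.
catalog = build_api_catalog([
    {
        "url": "https://api.example.com/orders",
        "spec": "https://api.example.com/orders/openapi.yaml",
        "docs": "https://example.com/docs/orders",
        "status": "https://api.example.com/orders/status",
    },
])
print(json.dumps(catalog, indent=2))
```

Under that RFC the document would be served from `/.well-known/api-catalog`.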

Now, most APIs also require authentication, using OAuth or another protocol, and you can also host files to help agents know how to authenticate. For example, you can host a file with the authorization endpoint, the different grant types, and so on. And since we can't talk about AI without mentioning MCP: MCP stands for Model Context Protocol. It's an open-source standard to provide more context, and access to tools and external systems, to AI models. Everyone has an MCP server, and if you have one, you can also host a JSON file, the MCP server card, with information about your MCP server: again, the URL, how to authenticate, the different tools that it supports.
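For the authentication file mentioned above, one plausible shape is OAuth 2.0 Authorization Server Metadata (RFC 8414). The talk does not name a specific format, so treat that choice as an assumption. The sketch shows an agent reading such a document. The field names come from that RFC, while the endpoints and the `supports_grant` helper are hypothetical.

```python
import json

# Hypothetical metadata, as it might be served from
# /.well-known/oauth-authorization-server (field names from RFC 8414).
METADATA_JSON = """
{
  "issuer": "https://auth.example.com",
  "authorization_endpoint": "https://auth.example.com/authorize",
  "token_endpoint": "https://auth.example.com/token",
  "grant_types_supported": ["authorization_code", "client_credentials"]
}
"""

def supports_grant(metadata, grant_type):
    """Check a grant type; RFC 8414 defaults to authorization_code and implicit."""
    default = ["authorization_code", "implicit"]
    return grant_type in metadata.get("grant_types_supported", default)

metadata = json.loads(METADATA_JSON)
print(metadata["authorization_endpoint"])
print(supports_grant(metadata, "client_credentials"))
```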

And so on, so agents know how to use it. Agents can also buy stuff: they can buy content or access to content, pizza, or airplane tickets. You can give them your credit card, which I don't recommend. But they can buy stuff, and there are commerce protocols to do it. x402 is one of them. The way it works is that an agent tries to make a request, but the server responds with 402 Payment Required. So you can't access that resource because it requires payment.

Then the server response also contains information about how you can pay for the content, and the agent can pay using stablecoins, which are cryptocurrencies pegged to a stable currency like the US dollar. Then the agent retries, and this time it gets access to the resource because it paid for it. So that's it: a bunch of standards, and I didn't talk about all of them. Some of them are proper RFCs, some of them are just proposals.
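The request, 402, pay, retry loop described for x402 can be sketched as a toy in-process simulation. Nothing here talks to a network or a blockchain, and every name, field, and value (`server`, `pay`, the receipt tokens, the price) is a hypothetical stand-in rather than the actual x402 wire format.

```python
PRICE_USD = "0.01"
paid_receipts = set()  # receipts the toy server has accepted

def server(path, payment_token=None):
    """Toy origin: returns (status, body); demands payment until a valid receipt is sent."""
    if payment_token in paid_receipts:
        return 200, f"premium content for {path}"
    # 402 Payment Required, with instructions on how to pay (made-up fields).
    return 402, {"amount": PRICE_USD, "currency": "USDC", "pay_to": "hypothetical-wallet"}

def pay(requirements):
    """Stand-in for a stablecoin payment; returns a receipt token."""
    token = f"receipt-{len(paid_receipts) + 1}"
    paid_receipts.add(token)
    return token

def fetch_with_payment(path):
    """Request a resource, pay if the server answers 402, then retry once."""
    status, body = server(path)
    if status == 402:
        token = pay(body)
        status, body = server(path, payment_token=token)
    return status, body

print(server("/article"))
print(fetch_with_payment("/article"))
```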

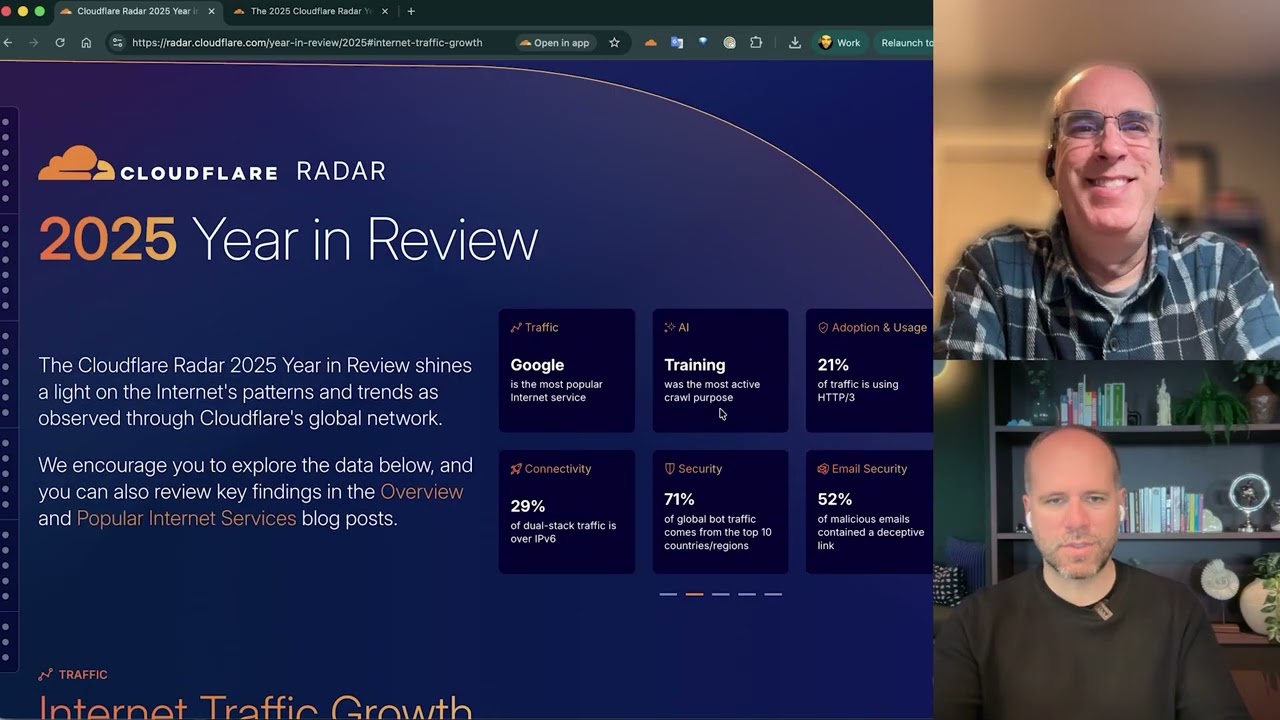

Some of them won't survive. And you don't need to memorize all of this, because Cloudflare launched a tool this Agent Week that basically lets you type in any URL and get an assessment of it across these standards. As you can see, my website is not very ready for agents. You can access the tool at isitagentready.com. The tool not only tells you what's failing, it also tells you how to fix it: it gives you links to documentation, the RFCs, and so on.

So it's a really cool tool. And we not only built the tool, we also used it to check the current state of the web, to see whether most websites were ready for AI agents or not. We scanned the top half-million domains. You can get this list through Cloudflare Radar: we have the domain ranking section, which is updated daily. So we took this half million domains and filtered out categories that don't matter for this test, like email servers, search engines, CDNs, malware, and so on.

And then we ran a bulk scan. The short answer is that the web is not very ready. This chart shows the adoption of AI agent standards, with the results of our bulk scan. As you can see, standards that have been around for quite some time, like robots.txt and sitemaps, are the most adopted ones. We are starting to see some adoption of recent standards like Markdown negotiation, Content Signals, and llms.txt. And then there are a bunch of standards that are not widely adopted, some of them because they are recent.

And some of them are old and were never really adopted. Another important thing to note is that not all standards apply to every website. If you have a content-only website, a simple personal website, a blog, or a portfolio, and you don't have an API, you probably don't need to define an API catalog. If you don't have an MCP server, you don't need to expose an MCP server card, because it doesn't make sense. That's why most of those standards are not widely adopted.

This will be available on Cloudflare Radar in the AI Insights section. It's not there yet, but it will be this week. So, to conclude: we've been here before, but for SEO. In 2003 I was one year old, and most websites weren't optimized for search engines. It took years for SEO to become a standard practice, and we are at the same point for agent readiness. So my recommendation is: go to isitagentready.com and scan your website. It only takes about 30 seconds. Fix what you think makes sense to fix. And yeah, I think that's it.

Thanks for your time, and I'm happy to take questions.

Hey there, I'm Alex. Thank you for the presentation. robots.txt and sitemaps, I'm guessing those are also very useful for SEO, given their maturity. So, a question about the isitagentready website: does it have an MCP server, so I can make my site ready following your rules?

Yep. The site has an MCP server. It also has an MCP server card. The site itself is agent-ready, so you can send the MCP server or the API to your agent and it can help.

Oh, and the site also has a feature, which is basically a button where you can copy all the fixes and just send them to your coding agent. So it's also easy to do it fast.

Cool. Thank you.

Thanks. Anyone else?

Hello. Thank you for the presentation. I was thinking about the SEO parallel you drew, and I'm wondering whether you think the present-day technical properties that AI is crawling will evolve in the future, and whether you are already seeing alternatives to robots.txt and sitemaps. Because if you think about SEO, there are way more technical implementations, schema markup and things like that.

Do you foresee AI going in that direction too?

Yes, I think agent readiness is related to SEO, because agents are bots, and SEO is also a set of standards for crawlers and bots. So some of those standards are shared between SEO and agent readiness, and some of them, for example the ones for MCP, are not very useful for SEO but are useful for agents. I'm not sure if I answered your question.

Yeah, the question was more about whether, in your opinion, like how SEO evolved, the agent readiness domain will evolve to include more tools in the future.

Yeah, I think so, because some of these standards are like one month old. There are a bunch of standards and RFCs being created, and agents are here to stay. So there will probably be more standards and more stuff.

All right. Thank you.

Yeah, I think there's another question.

Yeah. Thank you for the presentation. My question is about the robots.txt extension you mentioned, about the intents, where bots should declare how they will use this data.

And what about the major bots that currently exist? Do they follow it? Who follows it? If I'm not mistaken, Google uses the same bot for training and for search.

So I'm not sure we can call it a standard. It was proposed by Cloudflare a year ago, or some months ago, and we expect good bots to start following it. But I don't have data about whether it's followed or not, because we can't know that. Googlebot makes a request and receives the data, but we don't know what it will be used for.

So ideally they would respect that, but we don't know.

Thank you.

Okay. Thanks for the presentation. I just wanted to make a comment, more of a warning from experience, if that makes sense. SEO has stabilized, with its standards and all of that, because it works, right? So one thing I would warn about is that when we implement a bunch of new things to make a site agent-ready, sometimes we might disable some of the things that made it SEO-ready.

I'm not saying it doesn't make sense. Of course this makes a lot of sense. But we have to be aware that by implementing some of these we might, for example, use more crawl budget from Google, or add new pages that don't make sense for Google to index but are still going to be indexed. So, as a warning more than anything: I've seen sites implement some of the agentic stuff, maybe even get some traffic and visibility on LLMs, but then actually get penalized on Google.

So at some point there's going to be a balance between the two things, because of course we need the two worlds to work together.

Yeah, that makes sense. We are at a very early stage of this, so this is more of an experimentation phase.

Hi, thank you for your presentation. My question is: is there an order of precedence for these different standards? For example, with the new llms.txt, in the case where you have rules in llms.txt and you have things in sitemaps, which one do the agents pick first? What's the single source of truth?

I think that depends on the agent and on the agent's purpose. These are just some things you can provide to help them. So there isn't a right answer. The agent might fetch the sitemaps if you ask it to crawl the website, but if you ask about the purpose of the website, it might just fetch llms.txt. So I think there's no clear answer. There's no priority among the resources.

So my question is more on semantics.

So you mentioned it.

Yeah, I think that's just because we have robots.txt, ads.txt, security.txt, and they just wanted to use .txt. But yeah, Markdown is a better format, that's why. And llms.txt sounds better than llms.md. So I think that's it.

Yeah. So, next. We missed a guy. Oh, it gets recorded this way. Use the microphone.

We're only supposed to ship this on Friday, but we discussed it here, and I think we're okay to do a demo of the website.

You want a demo of the website?

Yeah, just run a scan for people to see.

Sure. Can't go wrong. No pressure. Well, it's not shipped yet, so it's okay. It failed. No, let's use my website and scan. And yep. Okay, so you get the scorecard here. This is a summary of your scan. Then, as I mentioned, you have the "Improve Your Score" button, which gives you Markdown text that you can just copy and send to your LLM.

For the checks, you can look at the audit details: the requests it made and how the check works. Then for the failing tests you also have the goal and the issue, the skills that the site hosts, and also links to documentation about that check. And yeah, that's it.

Yep?

You are penalized on the score, but if you don't have an API on your website, it doesn't make sense to be penalized.

Yes, it doesn't. We didn't find a perfect solution for that. So here you can also customize your scan. For example, my website is more of a content website, a blog and such, so this will only run some checks. And I'm still failing at Markdown for agents.

But yeah, as I mentioned, some checks don't make sense for every website: commerce or API checks don't make sense for my website. We are still working on it. Yep, that's it. Thank you.
