I Tried NEW Package PAO: Save AI Tokens on Test Responses


Presenter introduces PAO and the goal of saving tokens by shortening test-related output.

PAO promises big savings on AI testing tokens; this review checks whether it really shrinks test output when Cursor 3 runs a CRUD prompt with Kimi K2.5.

Summary

In this Laravel Daily video, the channel's host dives into PAO, a new package from Nuno Maduro that shortens test output so AI agents use fewer tokens. He tries PAO in a fresh Laravel 13 project using Cursor 3's new agent mode, generating a CRUD with Kimi K2.5. He runs the same prompt with and without PAO, checks the Cursor dashboard for token usage, and reports mixed results: passing test runs were compacted, but failed runs kept their verbose output, and the dashboard logged zero tokens for the run without PAO, so the cost comparison could not be completed. The video also reflects on token efficiency with cheaper AI models, which tend to run tests over and over, and on token saving as an ongoing concern in AI-assisted development. The host references Cloudflare's Markdown for Agents and Spatie's Laravel Markdown Response package to put PAO's direction in context. He closes with cautious optimism, noting that PAO is at version 0.1 and relies on a separate Agent Detector package under the hood, and invites viewers to share their real-world experience in the comments. If you're experimenting with AI agents for testing in Laravel, this provides practical, hands-on insight into token economics and early-stage tooling.

Key Takeaways

- PAO shortens the output of PHPUnit, Pest, ParaTest, and PHPStan to just a few tokens, potentially reducing token usage for AI agents that run tests.

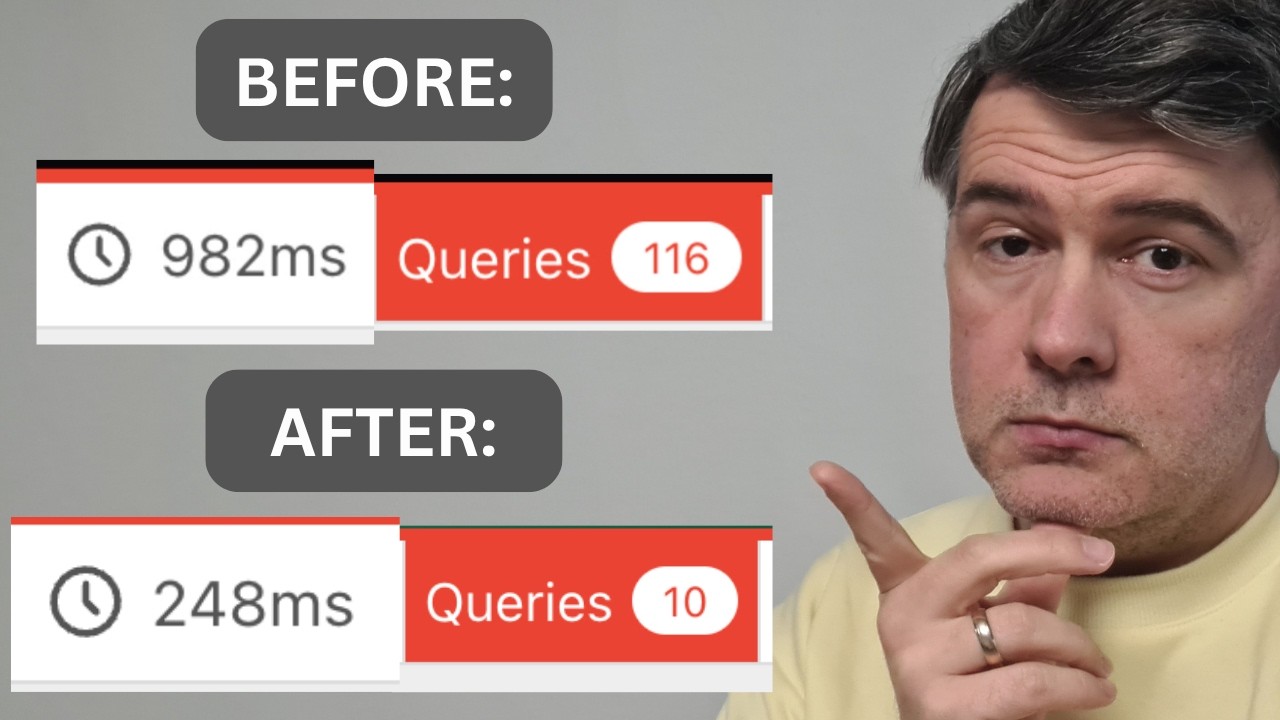

- In Cursor 3 agent mode, the host generates a CRUD with Kimi K2.5; the Cursor dashboard logs about 1 million tokens (~$0.41) for that run.

- With PAO removed, the same prompt produces uncompacted test output, but the dashboard logged zero tokens for that run, so the token cost could not be compared.

- Early results are mixed: passing runs were compacted, while failed runs kept their verbose output and stack traces even with PAO installed.

- PAO relies on the Agent Detector package to recognize agents such as Cursor, Codex, and Claude Code; an agent it does not detect gets the full, unshortened output.
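The takeaways above describe compact output only at a high level. As a rough illustration, and not PAO's actual implementation (PAO is a PHP package and its exact output format isn't shown in the video), here is a minimal Python sketch of collapsing a verbose test report into a short summary an agent can read. The `TestResult` type, the `compact_summary` function, and the summary format are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class TestResult:
    """Hypothetical record of one test outcome."""
    name: str
    passed: bool
    message: str = ""                                # failure reason, if any
    trace: list[str] = field(default_factory=list)   # full stack trace frames


def compact_summary(results: list[TestResult], max_trace_lines: int = 3) -> str:
    """Collapse test results into a short, agent-friendly report (illustrative format)."""
    failed = [r for r in results if not r.passed]
    passed_count = len(results) - len(failed)
    # One header line replaces the per-test progress output and decorations.
    lines = [f"tests: {len(results)} passed: {passed_count} failed: {len(failed)}"]
    for r in failed:
        lines.append(f"FAIL {r.name}: {r.message}")
        # Keep only the first few frames instead of the whole stack trace.
        lines.extend(f"  {frame}" for frame in r.trace[:max_trace_lines])
    return "\n".join(lines)


if __name__ == "__main__":
    report = compact_summary([
        TestResult("creates post", True),
        TestResult("updates post", False, "Expected 200, got 500",
                   ["PostTest.php:42", "Controller.php:17", "Router.php:88", "Kernel.php:9"]),
    ])
    print(report)
```

The `max_trace_lines` parameter reflects the trade-off the host raises: a passing run compresses to one line, but trimming a failure's stack trace too far can remove exactly the information an agent needs to fix it.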

Who Is This For?

Laravel developers who experiment with AI agents for code generation and testing, especially those curious about token costs and how new tools like PAO could reduce them.

Notable Quotes

"So in short what PAO does is shortening the response from PHPUnit, Pest, ParaTest and also PHPStan from this to just a few tokens."

—Explanation of PAO's core idea: shorten test outputs to save tokens.

"But for many, especially cheaper models, I've noticed it goes like this. Generate the code, the full code, then run the tests, then the tests fail miserably and then the model is running in circles trying to fix tests one by one and then executing tests again and again until they succeed."

—Describes the typical loop in which cheaper models fail tests and rerun them repeatedly, multiplying token use.

"The new package PAO is actually in the same direction of saving the tokens."

—Summarizes PAO's purpose and positioning.

"Maybe not in this case, I'm not sure why, but let's see what happens next."

—Notes inconsistent truncation during a failed test scenario.

"PAO uses another package Agent Detector which is this Ship Fast Labs… that detects if it's Cursor or Codex or Claude Code with these detection methods."

—Mentions the underlying detection mechanism PAO relies on.

Questions This Video Answers

- Can PAO really cut AI testing token costs in Laravel projects, and by how much?

- How does Cursor 3 agent mode interact with new AI tools like PAO in a Laravel workflow?

- What are the practical limits of token savings when tests fail or produce verbose errors?

- Does PAO work reliably with Pest, PHPUnit, and PHPStan across different models and environments?

- How does Agent Detector affect PAO's ability to shorten responses for agents it doesn't recognize?

Full Transcript

Hello guys. In this video I want to show you a new package by Nuno Maduro called PAO. And yes, another package from Nuno starting with the letter P. Don't ask me. You should care about this package if you work with AI agents for coding and want to save some tokens, which means money. Who doesn't, right? So in this video I will run a prompt with and without PAO, and we will compare the difference in tokens in Cursor, because Cursor shows on its dashboard how much money each request used. So in short what PAO does is shortening the response from PHPUnit, Pest, ParaTest and also PHPStan from this to just a few tokens.

Because AI agents don't need those things. They just need to know whether the tests succeeded or failed, and if they failed, the reason for the failure may also be shortened. And you would think it's not a big deal because it's just a few symbols here and there, right? But think about how many times an AI agent runs the tests. If you're working with a model that can one-shot the result successfully the first time, then the tests are executed only once, or maybe a few times: for a subset of tests and then for the full suite. But for many, especially cheaper models, I've noticed it goes like this.

Generate the code, the full code, then run the tests, then the tests fail miserably and then the model is running in circles trying to fix tests one by one and then executing tests again and again until they succeed. So in those cases the token savings could be pretty big. Let's try it out. Here I have a fresh Laravel 13 project with the Livewire starter kit, and I will launch one prompt on it in Cursor with agent mode, the new agent mode in Cursor 3. If you haven't seen that, I've reviewed it on my separate channel, AI Coding Daily.

Here it is: "I tried Cursor 3 in action". I will launch this prompt to create a CRUD with the model Kimi K2.5. So not a frontier model, not Opus, not Codex; deliberately a cheaper model, which is still good, but let's see how it handles testing specifically. So I launched the prompt, and here's how Cursor 3 works: there's a workspace and a list of multiple prompts for agents. Let Kimi run, and I will stop when it's actually running the tests with php artisan test. Meanwhile, I want to remind you that saving tokens for AI agents is not really a new thing.

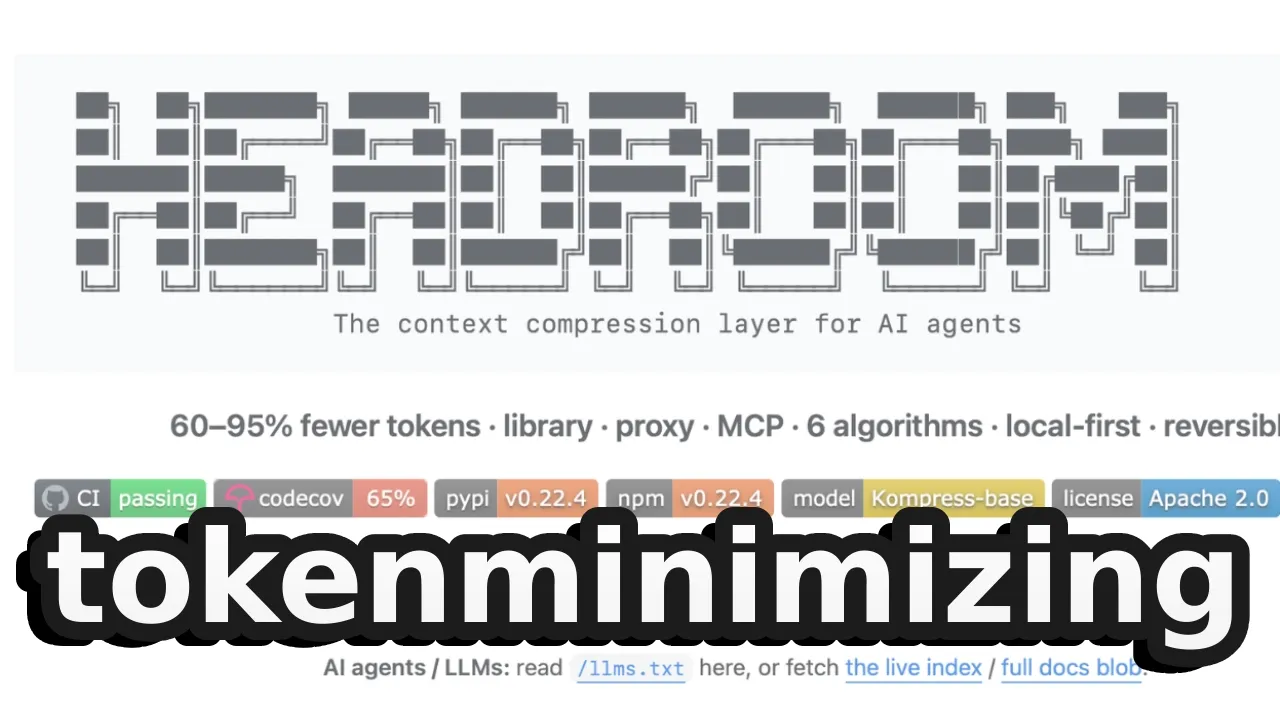

A month ago on my AI Coding Daily channel I had a video on Markdown for Agents, based on this Cloudflare blog post introducing Markdown for Agents. Cloudflare acknowledged that AI agents don't really need HTML with fancy Tailwind classes. They just need Markdown with the information, which may lead to an 80% reduction in token usage. And our beloved company Spatie has also released a package, Laravel Markdown Response, which allows you to do exactly that: return Markdown if the request has an Accept: text/markdown header. So the new package PAO is actually in the same direction of saving the tokens.
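Markdown responses like the ones Cloudflare's Markdown for Agents and Spatie's Laravel Markdown Response provide rest on HTTP content negotiation: the client sends an Accept header, and the server picks the format. The Python sketch below illustrates only that idea; it is not either project's code, the `wants_markdown` and `render` helpers are hypothetical, and it simplifies negotiation by ignoring how `text/markdown` ranks against other listed types.

```python
import html


def wants_markdown(accept_header: str) -> bool:
    """Return True if the Accept header lists text/markdown with a q-value above zero."""
    for part in accept_header.split(","):
        media_type, *params = [p.strip() for p in part.split(";")]
        if media_type.lower() != "text/markdown":
            continue
        quality = 1.0  # default q-value when none is given
        for param in params:
            key, _, value = param.partition("=")
            if key.strip().lower() == "q":
                try:
                    quality = float(value)
                except ValueError:
                    quality = 0.0  # treat a malformed q-value as "not acceptable"
        return quality > 0
    return False


def render(accept_header: str, title: str, body: str) -> tuple[str, str]:
    """Return (content_type, payload): plain Markdown for agents, styled HTML otherwise."""
    if wants_markdown(accept_header):
        return "text/markdown", f"# {title}\n\n{body}\n"
    return "text/html", (
        f'<html><body><h1 class="text-3xl font-bold">{html.escape(title)}</h1>'
        f"<p>{html.escape(body)}</p></body></html>"
    )


if __name__ == "__main__":
    print(render("text/markdown", "Posts", "Three posts found.")[1])
    print(render("text/html", "Posts", "Three posts found.")[1])
```

The Markdown branch drops the markup and styling classes entirely, which is where the token savings the host mentions come from.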

Let's see how Cursor is doing. It's generating the tests but not launching them yet. I would guess it will in a few seconds, because Kimi is actually a pretty fast model. And it's running the tests. Let's see the result. The result failed, as expected, because with a lot of models, as I said, it's failure first and then fixing things. And look at the response. The response should be compacted somewhere here. I'm not actually sure, but I've seen in my experiments that this long response gets shortened. Maybe not in this case, I'm not sure why, but let's see what happens next.

So there's a fix by Kimi, it runs the tests again, and now they pass. So this is the shortened result for the AI agent, then there's a summary, and it's done. But I'm not sure how PAO handles failed tests. No, in this case I don't think it was truncated, so let's actually introduce some kind of error. Let's close the agent mode; in Cursor you can switch between the agent and code views. In the IDE let's make a typo, for example, and then prompt the AI agent: run Pest tests. Let it be Sonnet.

Fine. Let's see if it truncates the result. It runs the tests and, nope, it doesn't seem to truncate. So these are probably early days for PAO when it comes to truncating test output. And then, of course, Cursor fixes the issue, reruns the tests, and they pass. Now let's check the Cursor dashboard for how much it took in tokens and money. Okay, there we go, we are on the dashboard. For some reason the tokens for Claude and Composer are zero. Not sure why they're not logged, maybe it's a bug on the Cursor dashboard, but for the Kimi request we have 1 million tokens, which is 41 cents for that CRUD creation with Kimi via Cursor.

Now let's try the same thing with Kimi but without PAO. Will it be somewhat cheaper? Here we go. I removed the PAO package from composer.json, so it's not there anymore, and let's run the same prompt: a new agent on the same PAO project, the same Kimi K2.5, and let it run. Actually, I'm not sure it will be cheaper, because the bigger problem in testing is the failing tests, and PAO doesn't seem to shorten those in this case. And I'm not even sure it should shorten them, because the stack trace and all the information about the error help the AI agent actually fix the error. But let's just see, out of curiosity.

And by the way, this is one of the things cheaper models like Kimi K2.5 do: we have an auth check in the form request in Laravel. Seriously? It should be the auth middleware in the routes instead. But let it run. In this video I'm not here to judge the code written by Kimi; I'm here to evaluate the tests. While it's running, a reminder that testing in general has become hugely important in this agentic AI era, and if you're not that familiar with writing tests and their best practices, one of my recently updated courses on Laravel Daily is Testing in Laravel 13 for Beginners.

This is a text-based course, so you don't have to watch videos. You can skim through it for the specific topics you are interested in. At the end there's also an AI skill to test your tests for good structure. And this week there's 40% off the yearly or lifetime membership on Laravel Daily until April 12th, no coupons needed. Now let's get back to Cursor. Yes, it's running the tests now, and we get the full, non-PAO output for the failed tests, then it runs the tests again and they pass. This time the output is not in the shortened format.

Then it runs all tests: 49 tests passed successfully. As I said, this is typical behavior, especially for cheaper models: they generate the code, the tests fail, and then they start fixing. In this case it was a simple CRUD, but for more complicated operations there may be quite a lot of running in circles. Now let's check the Cursor dashboard, and it shows zero tokens for that Kimi request for whatever reason, I don't know. Maybe it's a bug on the Cursor dashboard side, or maybe it was a free request for some reason. So I don't even know the difference and can't really compare the before and the after.

But you saw the difference in the response. So yeah, not every video goes according to plan, but I still wanted to shoot this and won't edit it out, because it's a fair, unbiased review of whether PAO is successful and whether it helps. Directionally, I agree with PAO's goal of shortening the response to save tokens. That's all fine. But so far I haven't seen a shortened, truncated, or compressed response for failed tests, and that would probably be the biggest gain, though I'm not sure how to do that properly for all kinds of errors.

So I will watch that space and shoot new videos if PAO comes out with new versions, or if new approaches for AI agents in Laravel come along in general. The final thing I want to note is that under the hood PAO uses another package, Agent Detector, from Ship Fast Labs, which belongs to Pushpak from the core Laravel team; it's his own kind of personal account. Agent Detector detects whether it's Cursor, Codex, or Claude Code with these detection methods. So if your AI agent isn't one of those and you use something different, PAO may not detect it, and you may get the unshortened test output.

So just keep in mind which agents are detected. So yeah, this is PAO, still early days at version 0.1, but Nuno told me it may become the default behavior in upcoming Pest versions, or maybe even Laravel versions in the future, because it doesn't make sense not to try to save tokens for AI agents. What do you guys think? Let's discuss in the comments below. That's it for this time, and see you guys in other videos.
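The detection step the host describes can be sketched in Python as follows. This is not Agent Detector's code: the environment variable names in `AGENT_MARKERS` are assumptions chosen for illustration, and the real package may use different or additional detection methods. The point of the sketch is the fallback: when no known agent is detected, the full output is used, which is why an unsupported agent would see unshortened test results.

```python
from __future__ import annotations

import os
from collections.abc import Mapping

# Hypothetical marker table: environment variable -> agent name.
# These names are assumptions for illustration, not Agent Detector's actual checks.
AGENT_MARKERS: dict[str, str] = {
    "CLAUDECODE": "claude-code",
    "CURSOR_AGENT": "cursor",
    "CODEX_SANDBOX": "codex",
}


def detect_agent(env: Mapping[str, str] | None = None) -> str | None:
    """Return the first agent whose marker variable is set to a non-empty value."""
    env = os.environ if env is None else env
    for var, agent in AGENT_MARKERS.items():
        if env.get(var):
            return agent
    return None


def choose_output(full_output: str, compact_output: str,
                  env: Mapping[str, str] | None = None) -> str:
    """Use compact output only for a detected agent; everyone else gets the full output."""
    return compact_output if detect_agent(env) else full_output


if __name__ == "__main__":
    print(detect_agent({"CLAUDECODE": "1"}))
    print(choose_output("full report", "tests: 49 passed", {}))
```

Taking `env` as a parameter keeps the logic testable without touching the real process environment; passing nothing falls back to `os.environ`.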
