token efficiency

11 videos across 9 channels

Efficient token usage is explored through practical strategies that pair smaller helper models with larger executives to cut waste, trim AI test outputs with tooling like PAO, and keep prompts lean by avoiding over-detailed setups. The discussions emphasize cost, latency, and drift risks, offering guidance on when to deploy multi-model delegation, how to minimize unnecessary output, and how to structure contextual data and files to preserve memory and relevance without bloating token counts.

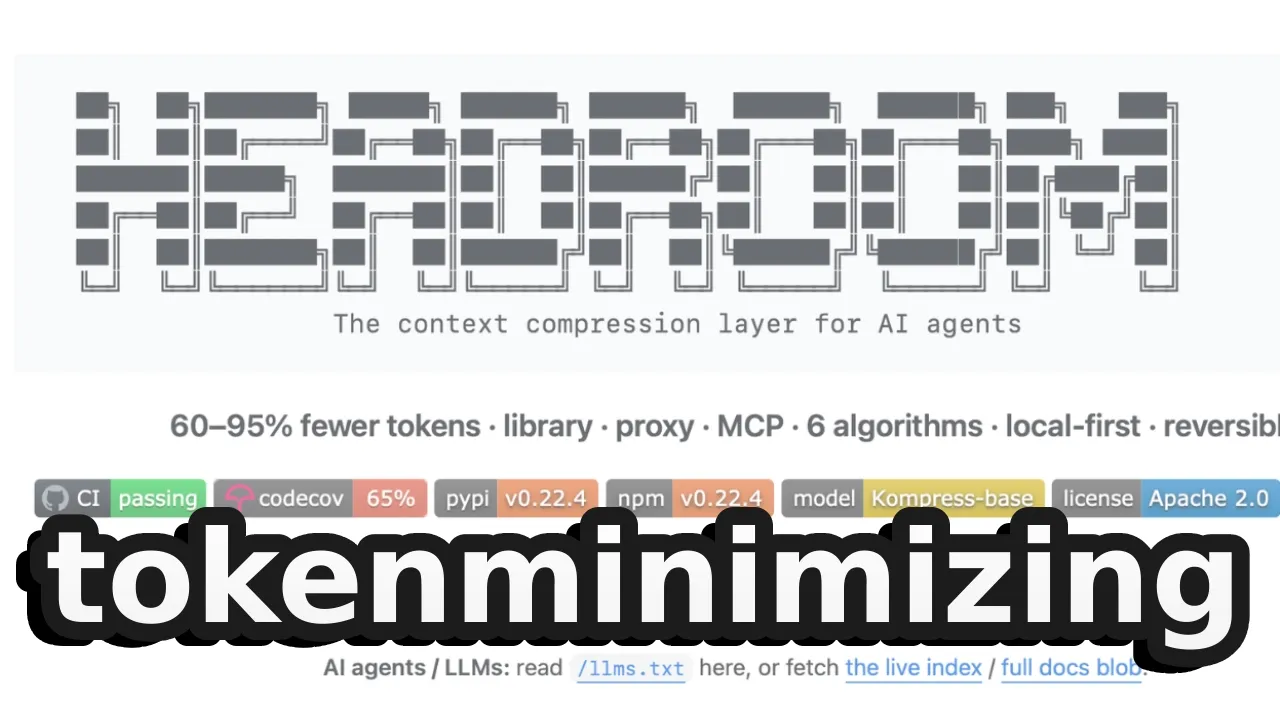

Someone just open-sourced tokenminimizing (8500 stars on GitHub)

The video introduces Headroom, a context compression layer for AI agents that promises 60–95% fewer tokens while maintaining…
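
Headroom's own API is not covered in this summary. As a rough, hypothetical illustration of what a context compression layer does, the sketch below collapses repeated lines in tool output and caps its length before it reaches the model. It assumes plain-text output and uses a whitespace word count as a crude stand-in for a real tokenizer; `collapse_repeats` and `approx_tokens` are illustrative names, not Headroom functions.

```python
from itertools import groupby


def approx_tokens(text: str) -> int:
    """Crude token estimate: whitespace-separated words (not a real tokenizer)."""
    return len(text.split())


def collapse_repeats(text: str, max_lines: int = 50) -> str:
    """Hypothetical compressor: collapse runs of identical lines, then cap line count."""
    out = []
    for line, group in groupby(text.splitlines()):
        count = sum(1 for _ in group)
        # Keep one copy of a repeated line and record how many times it occurred.
        out.append(line if count == 1 else f"{line}  [x{count}]")
    if len(out) > max_lines:
        omitted = len(out) - max_lines
        out = out[:max_lines] + [f"... [{omitted} more lines omitted]"]
    return "\n".join(out)


if __name__ == "__main__":
    log = "\n".join(["WARN: retrying connection"] * 40 + ["ERROR: timeout after 30s"])
    compact = collapse_repeats(log)
    print(approx_tokens(log), "->", approx_tokens(compact))  # prints: 124 -> 8
```

Gains from this kind of deduplication depend entirely on how repetitive the input is; real compression layers combine many more techniques.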

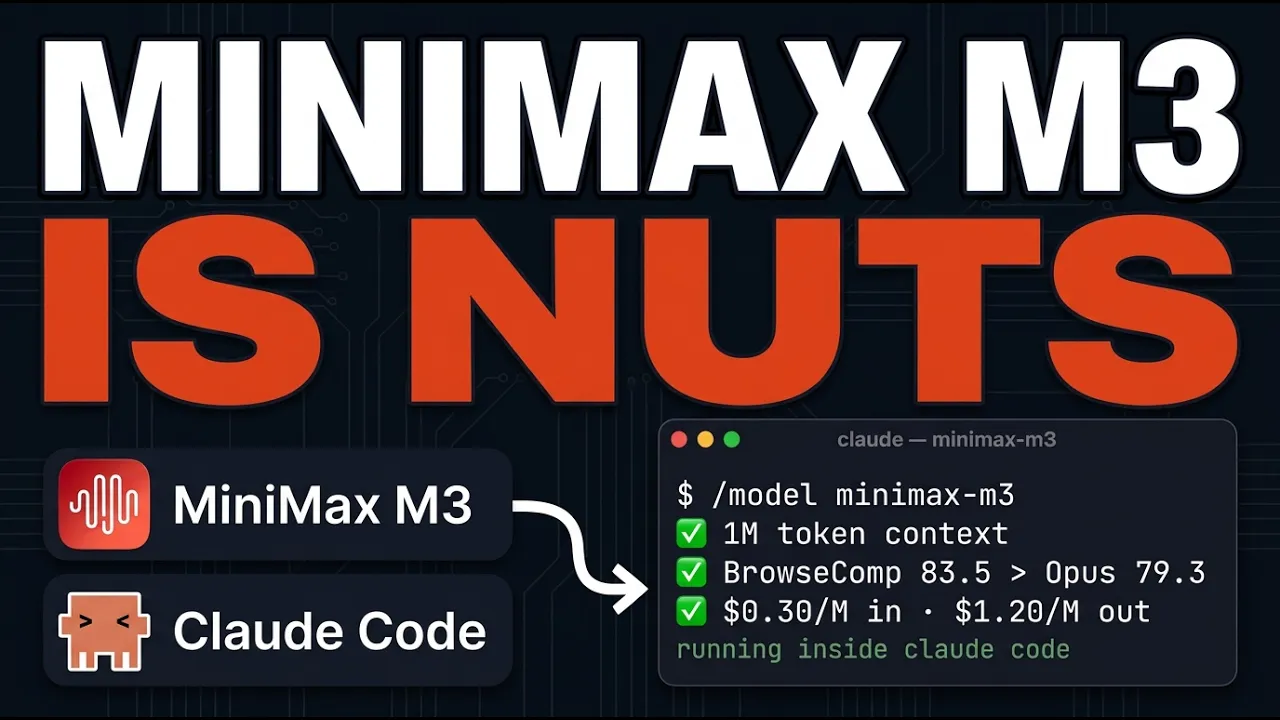

I Replaced Opus With MiniMax M3 In Claude Code (It's INSANE)

The video reviews the MiniMax M3 multimodal model and compares it with other open models, sharing early test impressions…

Opus 4.8 Just Dropped. Here's How To Actually Use It.

A quick analysis of Claude Opus 4.8, focusing on what's new over 4.7 and how it changes usage, pricing, and expectations…

Everyone is Wrong about Tokens

The video uses a provocative take on extreme AI token spending to argue that the future will shift from maxing token usage…

Agent Battle: Mine the most diamonds in 45 minutes

The video introduces a teams-based workshop where participants build and optimize a Minecraft mining agent, focusing on…

Printing Press Just 10x'd Everyone's Claude Code

The video argues that command-line interfaces (CLIs) are a superior abstraction for autonomous agents compared to APIs…

This Huge Update Changed The Way I Use Claude Code

The video explains how Anthropic's adviser strategy uses a dual-model setup to optimize token usage and performance…
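
The summary does not show how Anthropic wires the two models together, so the following is only a generic sketch of dual-model delegation, assuming one cheaper model handles each task and a more capable model is consulted only when the first one signals it is stuck. `Model`, `Usage`, `run_with_advisor`, the `NEED_ADVICE` marker, and the fake models are all hypothetical; models are plain callables so the sketch runs without any SDK.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical model interface: prompt in, text out.
Model = Callable[[str], str]

ESCALATE = "NEED_ADVICE"  # hypothetical marker the executor emits when stuck


@dataclass
class Usage:
    executor_calls: int = 0
    advisor_calls: int = 0


def run_with_advisor(task: str, executor: Model, advisor: Model, usage: Usage) -> str:
    """Let the cheaper executor answer; consult the advisor only on escalation."""
    usage.executor_calls += 1
    draft = executor(task)
    if ESCALATE not in draft:
        return draft  # common case: the expensive model is never called
    usage.advisor_calls += 1
    advice = advisor(f"Task: {task}\nExecutor is stuck. Give brief guidance.")
    usage.executor_calls += 1
    # The executor finishes the task with the advisor's short guidance in context.
    return executor(f"{task}\nAdvisor guidance: {advice}")


def fake_executor(prompt: str) -> str:
    """Stand-in model: gets stuck on 'hard' tasks unless guidance is present."""
    if "hard" in prompt and "Advisor guidance" not in prompt:
        return ESCALATE
    return f"done: {prompt.splitlines()[0]}"


def fake_advisor(prompt: str) -> str:
    """Stand-in model: always returns the same short hint."""
    return "split it into smaller steps"


if __name__ == "__main__":
    usage = Usage()
    for task in ["easy task", "hard task"]:
        print(run_with_advisor(task, fake_executor, fake_advisor, usage))
    print(usage)
```

The intended saving comes from the advisor being called rarely and returning brief guidance rather than doing the whole task; the `Usage` counters make the call split visible.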

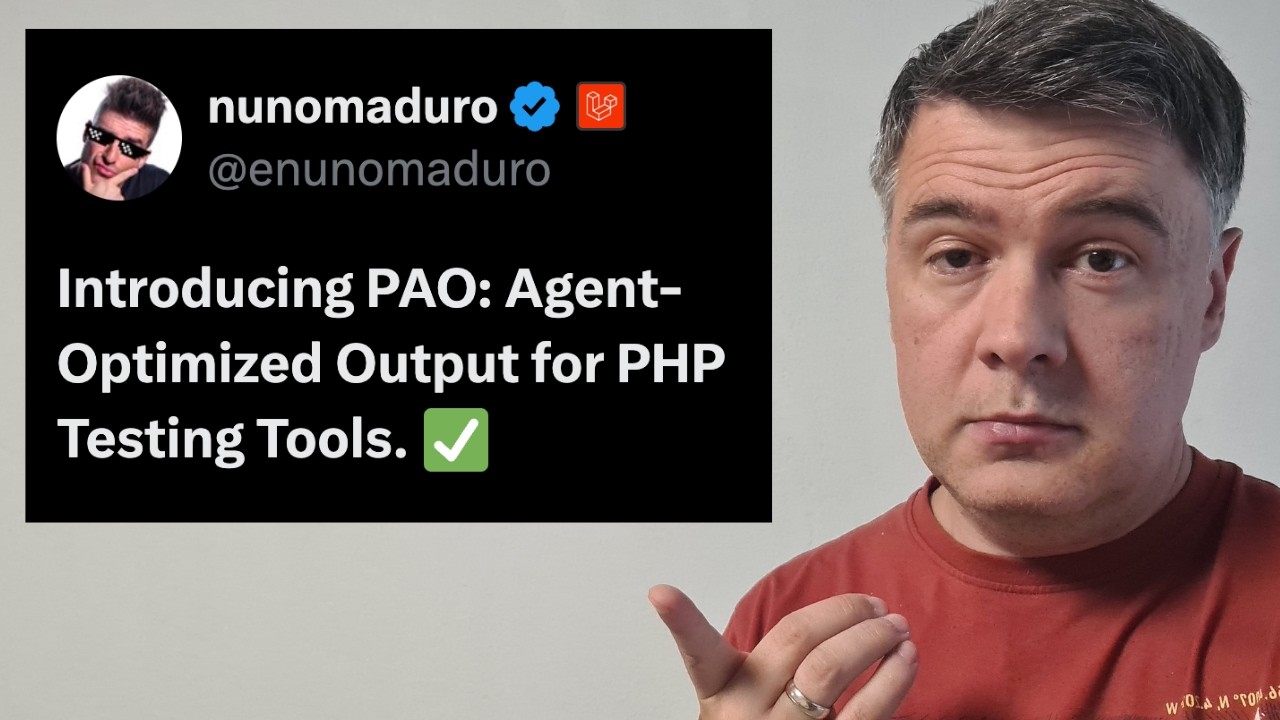

I Tried NEW Package PAO: Save AI Tokens on Test Responses

The video introduces the PAO package by Nuno Maduro, which trims unnecessary output from AI agent tests to save tokens…
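
PAO's actual output format is not described here. As a sketch of the general idea, assuming a hypothetical runner that prints one `PASS`/`FAIL` line per test with indented detail lines under each failure, the filter below keeps only failures and a one-line summary, which is often all an agent needs to decide what to fix next. `trim_test_output` is an illustrative name, not part of PAO.

```python
def trim_test_output(raw: str) -> str:
    """Keep failing tests (with their indented detail lines) plus a pass/fail count."""
    kept: list[str] = []
    passed = failed = 0
    in_failure = False
    for line in raw.splitlines():
        if line.startswith("PASS"):
            passed += 1
            in_failure = False
        elif line.startswith("FAIL"):
            failed += 1
            in_failure = True
            kept.append(line)
        elif in_failure and line.startswith((" ", "\t")):
            kept.append(line)  # detail line belonging to the current failure
        else:
            in_failure = False  # banners, timings, and other noise are dropped
    kept.append(f"{passed} passed, {failed} failed")
    return "\n".join(kept)


if __name__ == "__main__":
    raw = "\n".join([
        "Runner v1.0",          # banner noise
        "PASS test_a",
        "FAIL test_b",
        "  expected 2, got 3",  # detail for test_b
        "PASS test_c",
        "Time: 0.1s",           # timing noise
    ])
    print(trim_test_output(raw))
    # prints:
    # FAIL test_b
    #   expected 2, got 3
    # 2 passed, 1 failed
```

Passing tests collapse into a single count, so the output an agent reads grows with the number of failures rather than the size of the suite.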

Never Run claude /init

The video argues against creating or heavily detailing CLAUDE.md and agent files, warns about the token cost and drift from…