5 Claude Code skills I use every single day


The speaker emphasizes the importance of strict, well-defined processes for AI agents that have no memory, sharing a personal set of designed skills and a course called Claude Code for Real Engineers to improve engineering quality and workflows.

Matt Pocock reveals Claude Code skills he uses daily, like grill me, PRD, and TDD, to sharpen AI-driven engineering workflows.

Summary

Matt Pocock walks through the core Claude Code skills he trusts every day to impose discipline on AI agents. He highlights the grill me skill as a way to force a shared understanding and walk every branch of a design tree. He ties the design tree concept to Frederick P. Brooks’s work and shows how it guides rigorous design before coding. From there, he introduces a PRD skill to turn ideas into concrete GitHub-ready plans, and a PRD-to-issues skill to slice work into vertical, testable tasks. His TDD skill enforces a red-green-refactor loop, with emphasis on interface design and testability. He also explains an “improve codebase architecture” skill to surface dependencies and create multiple interface candidates. The video plugs his course, Claude Code for Real Engineers, a two-week cohort starting March 30 with 40% off for the next week. Pocock wraps with a call to action, inviting feedback on what to cover next and reiterating how these skills actually raise code quality when used together.

Key Takeaways

- Grill me forces a detailed, questions-first exploration of the design space. It yielded 16 focused questions in what Pocock calls a relatively short session, and 30 to 50 in longer ones.

- Design tree thinking helps you resolve design choices, and the dependencies between them, before writing code. For example, you choose between an advanced search and a simple text box before working out filters and sort orders.

- PRD-to-issues converts a PRD into a set of linked GitHub issues with blocking relationships to enable parallel work.

- TDD with agents uses a red-green-refactor loop to build tests first and drive code quality, emphasizing interface design for testability.

- Improve codebase architecture surfaces hidden coupling and suggests multiple viable interfaces, then synthesizes the best approach with human judgment.

- The Claude Code for Real Engineers course is a two-week program starting March 30, with a 40% discount for seven days, focused on real engineering skills and autonomous-agent workflows.

Who Is This For?

Essential viewing for developers and AI engineers who want to supercharge Claude-based workflows. If you’re building AI-assisted architectures or onboarding a team to agent-driven design, these techniques show how to keep quality high and decisions well-documented.

Notable Quotes

"Grill me. I'd like to think about adding this to the right page."

—Shows the grill me discipline as a way to force a thorough, question-driven discussion before implementation.

"Skills don't have to be long to be impactful."

—Emphasizes that concise prompts can yield deep, useful inquiry from the LLM.

"The design tree is this idea that as you're coming towards a design, you need to walk down all of the branches of a design tree."

—Defines the design-tree concept and its practical application in design decisions.

"A pure function document editing engine with 28 tests covering all acceptance criteria."

—Illustrates how a well-tested, modular engine supports reliable AI-driven features.

"If you have a garbage codebase, then the AI is going to produce garbage within that codebase."

—Underlines the importance of good codebase hygiene to get quality AI outputs.

Questions This Video Answers

- how do you implement a grill me workflow with Claude Code

- what is a design tree and how do you apply it to software design with LLMs

- how to convert a PRD into actionable GitHub issues for AI-assisted projects

- what is the Ralph loop and how does it improve AI-driven software development

- how can TDD improve the reliability of AI-generated code in complex projects

Claude Code, design tree, Grill me, PRD, PRD to issues, TDD, interface design, codebase architecture, Ralph loop, GitHub issues

Full Transcript

I've been an engineer for nearly a decade, and in all of that time, process has never been more important than it is right now. At your fingertips you now have access to a fleet of middling to good engineers that you can deploy at any time. But the weird thing about these engineers is that they have no memory. They do not remember things they've done before. And so you need extremely strict and well-defined processes to get those agents to actually do useful things. This means that you, as a developer, are constantly looking for ways to steer your agents and keep them on the right track.

And for me, that has resulted in a lot of skill building. Here's the repo of all the skills I'm using right now, each of which I have designed myself. Some of these I use relatively rarely, but some of them I use every single day. These skills help me encode my process, so the AI has a really strict path it can walk down every single time. And as a result of using all of these skills, the quality of the code the AI produces has shot up. Now, if you think that process is important and that real engineering skills are important, then boy, do I have a course for you.

This course is called Claude Code for Real Engineers. It's a two-week cohort that starts on March 30th, and for seven more days it is 40% off. If you feel like you're behind the curve on Claude Code and you want to get way ahead of it in just two weeks, then blimey, this is the place for you. Let's start talking about the skills with number one, which is maybe my favorite: the grill me skill. Yes, this skill is just three sentences long, so let's read it out in full to describe what it does.

Interview me relentlessly about every aspect of this plan until we reach a shared understanding. Walk down each branch of the design tree, resolving dependencies between decisions one by one. And finally, if a question can be answered by exploring the codebase, explore the codebase instead. The concept of a design tree comes from this book by Frederick P. Brooks, The Design of Design. Actually, I don't know if it originates in this book, but this book is where I first saw it. The design tree is this idea that as you're coming towards a design, you need to walk down all of the branches of a design tree.

For instance, you might be designing a search page and need to decide whether you want an advanced search or a simple text box. If you choose advanced search, then you need to figure out all of the filters and all of the sorting methods that advanced search needs. And you keep on walking down the tree until you've figured out your design in full, or as fully as you can, before actually committing to code. I invoke the grill me skill when I want to reach a shared understanding with the LLM.
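A design tree can be pictured as a small data structure. The sketch below is a hypothetical illustration, not the grill me skill itself, which is just prose. Each decision offers options, and each option can open further decisions. The walk resolves them depth-first, one at a time, and only descends into the branch that was chosen. The `choose` callback stands in for the human answering the LLM's questions. All names, and the follow-up questions, are assumptions made for illustration.

```typescript
// Hypothetical model of a design tree: a decision offers options, and each
// option may open up further decisions that only matter if it is chosen.
interface Decision {
  question: string;
  options: Record<string, Decision[]>; // option label -> follow-up decisions
}

interface Resolved {
  question: string;
  answer: string;
}

// Walk the tree depth-first, resolving one decision at a time. Follow-ups are
// only visited for the chosen option, so later answers can build on earlier ones.
function walkDesignTree(
  roots: Decision[],
  choose: (d: Decision, soFar: Resolved[]) => string,
): Resolved[] {
  const resolved: Resolved[] = [];
  const visit = (d: Decision): void => {
    const answer = choose(d, resolved);
    if (!Object.prototype.hasOwnProperty.call(d.options, answer)) {
      throw new Error(`"${answer}" is not an option for "${d.question}"`);
    }
    resolved.push({ question: d.question, answer });
    for (const child of d.options[answer]) visit(child);
  };
  for (const root of roots) visit(root);
  return resolved;
}

// The search-page example; the follow-up questions are made up for illustration.
const searchPage: Decision = {
  question: "Advanced search or a text box?",
  options: {
    "text box": [],
    "advanced search": [
      { question: "Which filters?", options: { "by date": [], "by tag": [] } },
      { question: "Which sort orders?", options: { relevance: [], newest: [] } },
    ],
  },
};

// Scripted answers stand in for the human being grilled.
const answers: Record<string, string> = {
  "Advanced search or a text box?": "advanced search",
  "Which filters?": "by tag",
  "Which sort orders?": "newest",
};

const decisions = walkDesignTree([searchPage], (d) => answers[d.question]);
// Resolves three decisions in order: the search style, then filters, then sorting.
```

Choosing "text box" at the root ends the walk after one decision, because that branch has no follow-ups. This is the point of walking the tree: later questions only exist once an earlier one is settled.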

I've found that, relatively recently, Claude Code tends to spit out a plan really early when I go into plan mode, creating a document before I feel I've reached a shared understanding with the LLM. But the grill me skill forces that conversation, and it forces the LLM to interview me about every single part. Here's a conversation I had with Claude recently about adding a feature to my course video editor codebase. I gave it some research that I had done in a Markdown file and said, "Grill me. I'd like to think about adding this to the right page." It loaded up the skill, and the thing I want to show you is just how many questions it asked me.

So, the first thing it did was explore the relevant parts of the codebase, which is good. Then, as we scroll down, we can see it asked question one: where does the document live? Question two: what's the UI layout? Question three: which modes get the document panel? Question four: the document life cycle. Question five: what does the write document tool look like? Question six: the edit tool shape. Then question seven, and on through 9, 10, 11, 12, all the way down to question freaking 16 here. And this is a relatively short grilling session in my book.

I've had sessions where I've sat with the AI for anywhere from nearly half an hour to 45 minutes, answering questions on really complex features. That could be 30, 40, 50 questions, all from this absolutely tiny skill. That's one thing I want you to take from this. Skills don't have to be long to be impactful. You've just got to choose the right words for the LLM at the right time. And this phrasing about walking the design tree and resolving dependencies has been absolutely great for me. By the way, if you want these skills, they are at the link below.

Once I have reached a shared understanding with the LLM, once I have grilled my idea and understood all of its ramifications, if I then decide I want to implement it, I invoke my next skill: write a PRD. I actually did this in the conversation we were just looking at. Claude asked whether there was anything it had missed or got wrong, and I said, "Write a PRD." I suffixed the skill name with "user" because I also have some skills that live in the project itself, so that's why I did that. Here's what the skill looks like.

This will be invoked when the user wants to create a PRD. You may skip steps if you don't consider them necessary. So for instance, in the previous conversation, it said, "We've already done a deep interview. Let's move to step four." So step one is to ask the user for a long detailed description. Then number two is to explore the repo to verify their assertions. Number three is basically to interview the user relentlessly. So just a copy of the grill me skill again. Next, we sketch out the major modules you will need to build or modify to complete the implementation.

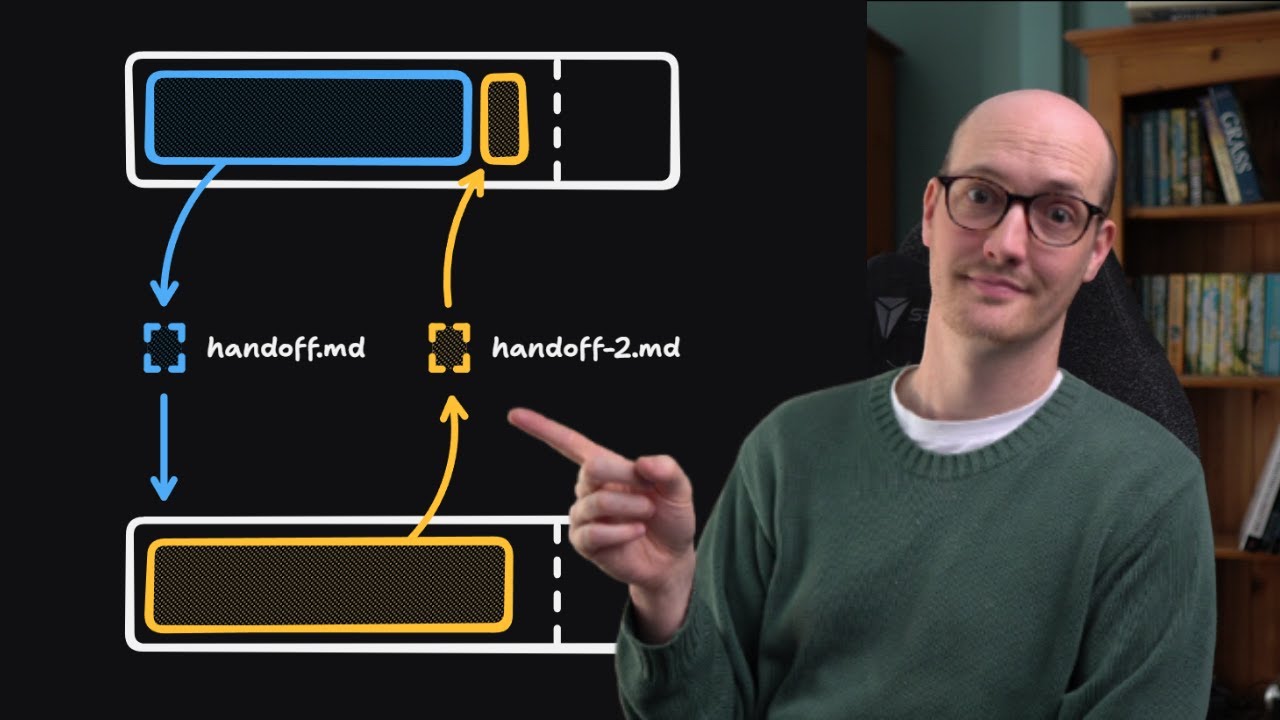

We're going to look at this later, because it links to skills I'll show you in a bit. And finally: once you have a complete understanding of the problem and the solution, use the template below to write the PRD, and submit the PRD as a GitHub issue. The way my dev flow works is that I take these PRDs in GitHub and turn them into more GitHub issues that reference the parent PRD. Then I have a Ralph loop that just loops over each issue until it's done. If we go back to the conversation from before, we can see that it created this PRD here.

This was 4 days ago. As you can see, we've got a problem statement. The article writing page currently regenerates the entire document on every AI interaction. And the solution was to add a split pane document editing experience to the article writer. Chat stays on the left. A new document panel blah blah blah. So this is a big feature. We're adding document editing to a kind of AI chat feature. The important thing here is the user stories. There are many many user stories as part of this and this comes from agile methodology and we're basically trying to describe the kind of desired behavior of our system in language which is not an easy thing to do.

I still haven't properly landed on the right format for these. This is just one I happen to like, but you could easily use Cucumber's Gherkin syntax for these, or whatever you're used to working with. Then we scroll down to the bottom, where we pass in some implementation decisions. We don't want the implementation decisions to be overprescriptive, because we want them to be durable. If the code ends up out of date with the PRD, then we're going to have issues when we actually go to implement it.
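As a rough illustration of the PRD-as-GitHub-issue step, here is a hypothetical TypeScript sketch. It renders the sections mentioned in this video (problem statement, solution, user stories, implementation decisions) into a Markdown issue body. It then builds an argument list for the GitHub CLI's `gh issue create` command using its `--title`, `--body`, and `--label` flags. The section headings, the "As a ..., I want ..., so that ..." story format, the type names, and the example content are all assumptions. They are not the actual template from the skill.

```typescript
// Hypothetical PRD shape based on the sections described in the video.
interface UserStory {
  role: string;
  want: string;
  soThat: string;
}

interface Prd {
  title: string;
  problem: string;
  solution: string;
  userStories: UserStory[];
  implementationDecisions: string[]; // kept high-level so they stay durable
}

// Render the PRD as a Markdown issue body.
function renderPrd(prd: Prd): string {
  const stories = prd.userStories
    .map((s, i) => `${i + 1}. As a ${s.role}, I want ${s.want}, so that ${s.soThat}.`)
    .join("\n");
  const decisions = prd.implementationDecisions.map((d) => `- ${d}`).join("\n");
  return [
    `## Problem Statement\n\n${prd.problem}`,
    `## Solution\n\n${prd.solution}`,
    `## User Stories\n\n${stories}`,
    `## Implementation Decisions\n\n${decisions}`,
  ].join("\n\n");
}

// Build argv for `gh issue create`. Passing an array to a process-spawning API
// (rather than one shell string) avoids quoting problems with multi-line bodies.
function ghIssueCreateArgs(prd: Prd, labels: string[] = []): string[] {
  const args = ["issue", "create", "--title", prd.title, "--body", renderPrd(prd)];
  for (const label of labels) args.push("--label", label);
  return args;
}

// Illustrative example, loosely based on the article-writer PRD in the video;
// the user story and decision are made up.
const prd: Prd = {
  title: "PRD: Split-pane document editing for the article writer",
  problem: "The article writing page regenerates the entire document on every AI interaction.",
  solution: "Add a split-pane document editing experience: chat stays on the left, with a new document panel alongside it.",
  userStories: [
    { role: "writer", want: "the AI to edit only part of my article", soThat: "my other changes are preserved" },
  ],
  implementationDecisions: ["Document edits are applied by a pure function engine."],
};

// In Node, these could be passed to child_process.execFileSync("gh", args).
const args = ghIssueCreateArgs(prd, ["prd"]);
```

Keeping the implementation decisions as short bullet points, rather than code-level detail, matches the durability concern described here.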

But you can see the theory here. It's a really good description of the destination we're heading towards. What we don't have from the PRD is the actual journey: the way we're going to get to this destination. And if we leap back to that conversation, this is where I use my next skill, which is PRD to issues. It takes a PRD, the destination, and turns it into a kanban board of issues that can be grabbed independently. The first step is to locate the PRD.

If the PRD is not already in your context window, fetch it with this instruction. Explore the codebase if you need to, and then draft vertical slices. It's not always clear how you should break a PRD down into individual tasks. This is something developers have been doing for yonks, right? And we've developed a kind of intuition for how to do it. In my opinion, the best way is to break it into tasks that flush out the unknown unknowns really quickly. For instance, if you're integrating with a new kind of service, or integrating two things you haven't integrated before, then you should do that work first. It will give you feedback on whether your approach is even valid.

The right analogy here is the tracer bullet analogy. I won't go into what that means, but basically each issue is a thin vertical slice that cuts through all integration layers, not a horizontal slice of one layer. In the conversation, it broke down that really complicated PRD into just four slices. It first created a kind of engine with some tests applied to it. This is actually quite a good vertical slice because this was the engine that was going to then power the rest of the kind of setup. If this engine wasn't working for whatever reason or it wasn't feasible, then we would need to flush that out quickly.

And this is what this breakdown does. The PRD to issues skill also establishes blocking relationships between the tasks. For instance, number two here is not actually blocked by anything, so it can be picked up independently of number one. This is really useful if you have a parallel agent setup where you can fire two agents at it at once, for instance as background tasks. It also means that in the future you can add other issues, like QA issues you find or things that need to be improved. You can then establish blocking relationships between those and all of the other issues.

We can see that number three here is blocked by number one, the editing engine, and number four, the Monaco editor toggle, is blocked by number two. So I said yes to all of these, and it created all of these GitHub issues. The issues reference the parent PRD so that the local agent can fetch and view it. They break down what to build, and, crucially, they reference the relevant user stories from the PRD. We can then see a comment from Claude Code, which ended up implementing this. It said, "A pure function document editing engine with 28 tests covering all acceptance criteria."
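Blocking relationships are what make parallel work possible. Here is a minimal sketch, assuming each issue simply lists the numbers of the issues that block it. The issues are grouped into waves, where everything in one wave depends only on earlier waves and can therefore go to parallel agents. The data mirrors the four issues in this example, and the function name is hypothetical.

```typescript
// Each issue lists the numbers of the issues that block it.
interface Issue {
  id: number;
  blockedBy: number[];
}

// Group issues into waves: every issue in a wave is blocked only by issues in
// earlier waves, so issues within one wave can be handed to parallel agents.
function parallelWaves(issues: Issue[]): number[][] {
  const done = new Set<number>();
  let remaining = issues;
  const waves: number[][] = [];
  while (remaining.length > 0) {
    const ready = remaining.filter((i) => i.blockedBy.every((b) => done.has(b)));
    if (ready.length === 0) {
      const stuck = remaining.map((i) => i.id).join(", ");
      throw new Error(`Cycle or unknown blocker among issues: ${stuck}`);
    }
    for (const i of ready) done.add(i.id);
    remaining = remaining.filter((i) => !done.has(i.id));
    waves.push(ready.map((i) => i.id));
  }
  return waves;
}

// The four slices from the example: #1 is the editing engine, #4 the Monaco
// editor toggle; #2 has no blockers, #3 waits on #1, #4 waits on #2.
const waves = parallelWaves([
  { id: 1, blockedBy: [] },
  { id: 2, blockedBy: [] },
  { id: 3, blockedBy: [1] },
  { id: 4, blockedBy: [2] },
]);
// waves is [[1, 2], [3, 4]]
```

If a wave ever comes back empty while issues remain, the blocking graph has a cycle or points at an issue that does not exist. Failing loudly is safer than letting agents wait forever.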

And we can then take a look at the commit that references this issue. My Ralph loop came along, implemented this based on the issue, commented on it, and closed it, and then the next issue was unblocked. So far, then, the grill me skill helps you flesh out an idea. The write a PRD skill helps you turn that idea into a document. And the PRD to issues skill helps you turn that destination document into an actual journey. But then how do you actually execute that journey?
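At its simplest, a Ralph loop keeps handing the next piece of work to a fresh agent until nothing is left. The sketch below is a hypothetical, in-memory version of the flow described here: pick an unblocked issue, implement it, comment, close it, and repeat. It is not Pocock's actual setup. The Tracker and Agent interfaces are assumptions; in practice they might wrap the GitHub CLI and a headless coding-agent run.

```typescript
// Hypothetical interfaces: a real setup might back these with GitHub issues
// and a coding agent started with a fresh context each time.
interface TrackedIssue {
  id: number;
  blockedBy: number[];
  open: boolean;
}

interface Tracker {
  list(): TrackedIssue[];
  comment(id: number, body: string): void;
  close(id: number): void;
}

// The agent receives one issue and returns a summary of what it did.
type Agent = (issueId: number) => string;

// Keep picking an open issue whose blockers are all closed, let a fresh agent
// implement it, record its summary, close it, and loop. Stops when nothing is
// unblocked, or after maxIterations as a safety valve.
function ralphLoop(tracker: Tracker, agent: Agent, maxIterations = 50): number[] {
  const completed: number[] = [];
  for (let n = 0; n < maxIterations; n++) {
    const issues = tracker.list();
    const closed = new Set(issues.filter((i) => !i.open).map((i) => i.id));
    const next = issues.find((i) => i.open && i.blockedBy.every((b) => closed.has(b)));
    if (next === undefined) break;
    tracker.comment(next.id, agent(next.id));
    tracker.close(next.id);
    completed.push(next.id);
  }
  return completed;
}

// In-memory tracker for trying the loop out.
function memoryTracker(issues: TrackedIssue[]): Tracker & { comments: string[] } {
  const comments: string[] = [];
  return {
    comments,
    list: () => issues.map((i) => ({ ...i })),
    comment: (id, body) => {
      comments.push(`#${id}: ${body}`);
    },
    close: (id) => {
      const issue = issues.find((i) => i.id === id);
      if (issue) issue.open = false;
    },
  };
}

const tracker = memoryTracker([
  { id: 1, blockedBy: [], open: true },
  { id: 2, blockedBy: [], open: true },
  { id: 3, blockedBy: [1], open: true },
  { id: 4, blockedBy: [2], open: true },
]);
const order = ralphLoop(tracker, (id) => `Implemented issue ${id}`);
// order is [1, 2, 3, 4]; each issue gets a comment before it is closed.
```

Because the loop rereads the tracker on every iteration, issues added later (for example, QA findings with their own blockers) are picked up without restarting anything.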

How do you make the implementation really rock solid and increase the quality of the code that gets produced? We've got a TDD skill. TDD means test-driven development. When you invoke this skill, it forces, or rather encourages, the agent to follow a red-green-refactor loop. Unusually for my skills, there is actually a lot in here. It's not just the skill itself; there are also notes on refactoring, on mocking, and on what deep modules are. Doing TDD really well has been the most consistent way I've improved agents' outputs.

So let's have a look at what's actually in here. I'll skip over the philosophy stuff and let you read that yourselves. We're basically looking at this workflow. The first step here is really important: confirm with the user what interface changes are needed. I made a video on interfaces and implementations recently, but let me give you the précis. When an AI looks at a bad codebase, it sees something like this: a ton of tiny modules that are kind of undifferentiated.

They're not really grouped together. The AI doesn't really understand how these things relate, so it has to do a lot of work figuring out what's responsible for what, what the dependencies are, and how the codebase even functions. Whereas if you restructure this into several larger modules with thin interfaces on top (the interface being the functions that are actually exported, the things that callers actually call), then it's a lot easier for AI to navigate the codebase. It's also a lot easier to work out how to test these modules, because you just test them at their interfaces.
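To make "a larger module with a thin interface" concrete, here is a toy-sized, hypothetical sketch in the spirit of the pure function document editing engine mentioned earlier. It is not that engine's actual code, and the Edit type and applyEdits function are assumptions. Callers and tests only ever touch applyEdits, so the helpers behind it can be reorganized freely without breaking any test.

```typescript
// The edit operations the engine understands (a hypothetical set).
type Edit =
  | { kind: "insert"; at: number; text: string }
  | { kind: "delete"; from: number; to: number }
  | { kind: "replace"; find: string; replacement: string };

// Internal helpers: implementation details, not part of the interface.
function clamp(n: number, max: number): number {
  return Math.max(0, Math.min(n, max));
}

function applyOne(doc: string, edit: Edit): string {
  switch (edit.kind) {
    case "insert": {
      const at = clamp(edit.at, doc.length);
      return doc.slice(0, at) + edit.text + doc.slice(at);
    }
    case "delete": {
      const from = clamp(edit.from, doc.length);
      const to = clamp(edit.to, doc.length);
      return doc.slice(0, from) + doc.slice(Math.max(from, to));
    }
    case "replace": {
      const i = doc.indexOf(edit.find);
      if (i === -1) throw new Error(`Text not found: "${edit.find}"`);
      return doc.slice(0, i) + edit.replacement + doc.slice(i + edit.find.length);
    }
  }
}

// The entire public interface: a pure function from a document and a list of
// edits to a new document. No I/O and no hidden state, so tests only need to
// exercise this one boundary.
function applyEdits(doc: string, edits: Edit[]): string {
  return edits.reduce(applyOne, doc);
}

// A test written against the interface, not the helpers:
const edited = applyEdits("Hello world", [
  { kind: "replace", find: "world", replacement: "there" },
  { kind: "insert", at: 0, text: ">> " },
]);
// edited is ">> Hello there"
```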

You test them at their boundaries. You can check out the whole video on that below. So what this TDD skill does is make these interface changes really top of mind for the AI. It needs to understand that changing an interface is an important decision it should take time over. Next, you confirm with the user which behaviors to test. You design the interfaces for testability, which links to a separate doc, and then there's some more stuff around planning. Then it goes into a lovely loop where it writes one test at a time, and it writes the test first.
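The red-green-refactor loop can be written down as a tiny control-flow sketch. This is a hypothetical harness, not the TDD skill itself, which is prose instructions to the agent. For each behavior, a new test must fail first (red), pass once code is written (green), and still pass after refactoring. The TddHooks interface and the fake harness are assumptions made for illustration.

```typescript
// Hypothetical hooks an agent harness might provide; not a real API.
interface TddHooks {
  writeTest(behavior: string): void; // add one new test for this behavior
  implement(behavior: string): void; // write just enough code to pass it
  refactor(): void; // tidy up while keeping the suite green
  runTests(): boolean; // true when the whole suite passes
}

// One behavior at a time: the new test must fail first (red), pass after
// implementing (green), and still pass after refactoring.
function redGreenRefactor(behaviors: string[], h: TddHooks): void {
  for (const b of behaviors) {
    h.writeTest(b);
    if (h.runTests()) throw new Error(`Test for "${b}" passed before any code was written`);
    h.implement(b);
    if (!h.runTests()) throw new Error(`Implementation of "${b}" did not turn the suite green`);
    h.refactor();
    if (!h.runTests()) throw new Error(`Refactor broke the suite after "${b}"`);
  }
}

// A fake harness for illustration: a "test" passes once its behavior is implemented.
function fakeHarness(log: string[]): TddHooks {
  const tested = new Set<string>();
  const implemented = new Set<string>();
  return {
    writeTest: (b) => {
      tested.add(b);
      log.push(`red: ${b}`);
    },
    implement: (b) => {
      implemented.add(b);
      log.push(`green: ${b}`);
    },
    refactor: () => {
      log.push("refactor");
    },
    runTests: () => Array.from(tested).every((b) => implemented.has(b)),
  };
}

const log: string[] = [];
redGreenRefactor(["inserts text", "deletes a range"], fakeHarness(log));
// log: red, green, refactor for "inserts text", then the same for "deletes a range"
```

Checking that the new test fails before any code is written is what keeps the loop honest: a test that passes immediately is not testing the new behavior.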

Now, I've talked about red-green-refactor before, so I'll link that video below if you're interested. But I've found that red-green-refactor with agents is incredible. It just does this loop until it's complete: it writes a failing test, then writes the code to make that test pass. Then, finally, it goes through and looks for refactor candidates. I haven't found that last step to be amazing, because LLMs are often quite reluctant to refactor their own code. If you cleared the LLM's context, it would wipe its own memory and be a lot less precious about the code it had just written.

But while its own code is sitting in its context window, it's quite reluctant to change it. So this TDD skill is what I prompt my Ralph loops with in order to get them to do red-green-refactor. Now, TDD demands a lot of you, or rather it demands a lot of your codebase. TDD is really hard to do in a badly structured codebase because the test boundaries are really unclear. Should it test this module on its own? Should it test that one on its own? What are the boundaries here?

Whereas when your codebase looks more like this, it's a lot easier to test because the module boundaries are really clear. So wouldn't it be great if there were a skill that made your codebase look more like this? Well, isn't it nice: we've got an improve codebase architecture skill. The process for this one is that the agent explores the codebase naturally, the way an agent would. We're trying to surface what the AI naturally finds confusing, so that we can help it out later.

Where does understanding one concept require bouncing around between many small files? Where have pure functions been extracted just for testability, while the real bugs hide in how they're called? Where do tightly coupled modules create integration risk in the seams between them? All of these are questions a senior engineer would ask about your codebase. Step two is to present candidates: a numbered list of deepening opportunities. In other words, these are opportunities to turn shallow modules in your codebase into deeper ones. The user then picks a candidate, and then you design multiple interfaces. It says to spawn three sub-agents in parallel, each of which must produce a radically different interface for the deepened module.

In other words, we're extracting that code and designing possible ways it could look in the future. Designing it in multiple different ways is a really great way to then decide on the right idea. I've seen this agent spawn five different sub-agents for a really big refactor. The coolest thing about this is that you don't need to know a lot about interface design in order to get this working. After comparing, it gives you its recommendation: which design it thinks is strongest and why. And if elements from different designs would combine well, it proposes a hybrid.
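The fan-out step, in which several sub-agents each produce a radically different interface, maps naturally onto starting tasks concurrently and waiting for all of them. Here is a minimal sketch, assuming a hypothetical SubAgent function that returns a proposed interface for one design constraint. The constraints are made-up examples of how to force the designs apart. Comparing the candidates and recommending one, or a hybrid, is left to the agent and the human, as described here.

```typescript
// One proposed interface for the module being deepened.
interface Candidate {
  constraint: string;
  proposal: string; // e.g. the exported type signatures, as text
}

// Hypothetical: starts a sub-agent and resolves with its proposal.
type SubAgent = (moduleName: string, constraint: string) => Promise<Candidate>;

// Give each sub-agent a different constraint so the designs diverge, start
// them all at once, and wait for every proposal. Promise.all keeps the
// results in the same order as the constraints.
function designInterfaces(
  moduleName: string,
  constraints: string[],
  spawn: SubAgent,
): Promise<Candidate[]> {
  return Promise.all(constraints.map((c) => spawn(moduleName, c)));
}

// Example constraints (illustrative only).
const constraints = [
  "Minimize the number of exported functions",
  "Optimize for the most common caller",
  "Make it a pure function with no I/O",
];

// A fake sub-agent so the sketch runs without any real model.
const fakeSubAgent: SubAgent = async (moduleName, constraint) => ({
  constraint,
  proposal: `${moduleName}: interface designed to "${constraint}"`,
});

const pending = designInterfaces("documentEngine", constraints, fakeSubAgent);
```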

Notice that I've made this really language agnostic, really everything agnostic. You can run this in any codebase and get a decent answer for how it could be improved. There might be four or five candidates that really could use some work, but I think you should only tackle one of these at a time. They're quite hard to get your head around, and they require a human in the loop to sit with them and improve the codebase, because these decisions require taste. Finally, it creates a GitHub issue.

It creates a refactor RFC as a GitHub issue using gh issue create. Usually, once this is done, I'll go to my PRD to issues skill and reference the GitHub issue that's just been created. That issue describes the destination, and we then need a journey to get there. So just doing this every so often in a codebase, say once a week, is a good way to identify opportunities. And if you have a sudden surge of development and create a whole extra wing of features, this skill is really useful for making sure that new code conforms to the rest of the codebase and isn't too sloppy.

And as you keep running this and keep refining your codebase, you're going to notice the quality of the agents' output go up, because the old adage really does apply. If you have a garbage codebase, then the AI is going to produce garbage within that codebase. To be honest, if you took all of these skills and said, "Okay, this is a little mini Markdown book of processes for humans," it wouldn't look out of place. I've found that the most successful way to get code quality up from agents is just to treat them like humans.

Humans with weird constraints. Sure, humans that have no memory, that are just sort of cloned, come out of the birthing pod, and go right to work. But if, like me, you think these real engineering skills are super important, then this course is absolutely for you. What I noticed while I was creating the course is that I'm really not teaching Claude Code that much. I'm teaching what sub-agents are. I'm talking about the constraints of LLMs, the weird smart zone and dumb zone stuff with the context window. We're talking about steering (essentially just a way of documenting stuff inside your codebase), how to tackle massive tasks, understanding tracer bullets and building them into our skills, how to build really great feedback loops and doing exercises with them, and, crucially, how to hook these up to an autonomous agent.

Every part of this course leads on to the next, and I'm super happy with how it turned out. Over the course of two weeks, you'll work through the self-paced material with me as your guide in Discord and in live office hours. If that sounds fun to you, the link is below. Thanks for watching, folks. I'll be coming back with a lot more stuff this week. What would you like me to cover next? I find the intersection between real engineering and AI such an awesome place to make content about.

But anyway, thanks for watching, and I will see you in the next one.
