The 7 phases of AI-driven development



Seven AI-driven development phases to ship quality code, with Claude Code guiding ideation through QA in a repeatable loop.

Summary

Matt Pocock lays out seven concrete phases for building with an AI coding assistant, using Claude Code as his go-to example. He starts with a broad idea and shows how it can balloon into a set of actionable tickets, possibly running many AI agents in parallel. Research may be cached in a research.md asset when external APIs or hard-to-access data are involved. Prototyping helps impose taste and concrete UX decisions before committing to code. The PRD then translates that vision into a tangible end state, followed by turning the plan into a Kanban board (GitHub Issues or another tool) to enable parallel execution. Execution is typically a Ralph loop, though a sequential, human-in-the-loop flow is also viable. After execution, a QA plan is created and a human reviews the results, feeding new tasks back into the Kanban board for iteration. Pocock emphasizes that these phases are not dogma but a practical framework for AI-assisted engineering aimed at durable software, with room to grow and adapt over time.

Key Takeaways

- Idea-to-tickets: start from a broad idea that becomes a set of AI-driven tasks or tickets to ship features or refactors.

- Research phase when external APIs or uncommon integrations exist; cache findings in a research.md asset for reuse within a sprint.

- Prototype early to impose design and architectural taste, iterating on multiple options before code commitment.

- PRD (or end-state spec) documents what users will see and how the product should behave, guiding subsequent implementation.

- Kanban board enables parallel work; Pocock uses GitHub Issues for tickets but notes that GitHub does not yet have a built-in way to represent blocking relationships, which a tool like Linear provides.

- Ralph loop is Pocock’s preferred execution method, allowing AFK progress by running tickets in sequence or in controlled parallel bursts.

- QA loop closes the iteration by producing a QA plan and having a human review to generate new tickets for continuous improvement.

Who Is This For?

Essential viewing for AI engineers and developers who want a practical, repeatable framework to ship AI-powered features using tools like Claude Code, GitHub Issues, and Kanban boards.

Notable Quotes

"I have identified seven phases of development with AI."

—Opening statement outlining the seven-phase framework.

"This idea can be as small and as big as you like."

—Emphasizes scalability of the framework from tiny fixes to large projects.

"We can expand this idea and this process can take very very large ideas and turn them into reality or it can be teeny very narrow and very focused."

—Highlights flexibility and scope of the process.

"The next step after research is to get to prototyping."

—Shows sequencing: research → prototyping in the workflow.

"Finally, once you've done with execution, you've got a completed asset for you to actually look at, then you get the agent to create a QA plan for the human to QA the completed work."

—Describes the move from execution to QA and human review.

Questions This Video Answers

- how do you structure an AI-assisted development workflow with Kanban?

- what is a Ralph loop and why use it for AI coding projects?

- how can I use a PRD effectively when building with AI agents?

- what role does research.md play in AI-driven development?

- can GitHub Issues replace a traditional Kanban board for AI projects?

Full Transcript

What's up friends? I'm going to keep this short and sweet. I have identified seven phases of development with AI. In other words, as you're working through coding with your AI coding assistant, in my case Claude Code usually, then these are the seven phases you should be thinking about for shipping great work. The way you achieve these phases is kind of up to you. There are many different implementations of it, but these are the ones that I have kind of understood to be kind of common across lots and lots of different approaches. Whether you're doing Ralph loops like I mostly am, whether you're doing GSD, whether you're using SpecKit, you are probably going to be using these seven phases.

If you dig this stuff and you believe that engineering fundamentals are really important in the AI age, then guess what? So do I. And this is what I cover and elaborate on in my newsletter. This is not for vibe coders. We are people that are serious about AI engineering and serious about building applications that are built to last. So if that sounds like you and you want to improve your skills, then this is the place. But without further ado, let's go into the list. Phase one, we start with the idea. You have some kind of idea, some reason that you are invoking this process, something that you want the AI to do for you.

This might be that you have an entire app idea that you want to build. Or you might just have a narrow thing that you want to complete within the codebase that you're in, like a bug fix or a feature. I also count refactors as part of this, too. So, if you have a codebase that you need to refactor, then this process will work for you, too. This idea can be as small and as big as you like. We can expand this idea and this process can take very very large ideas and turn them into reality, or it can be teeny, very narrow and very focused. Doesn't matter.

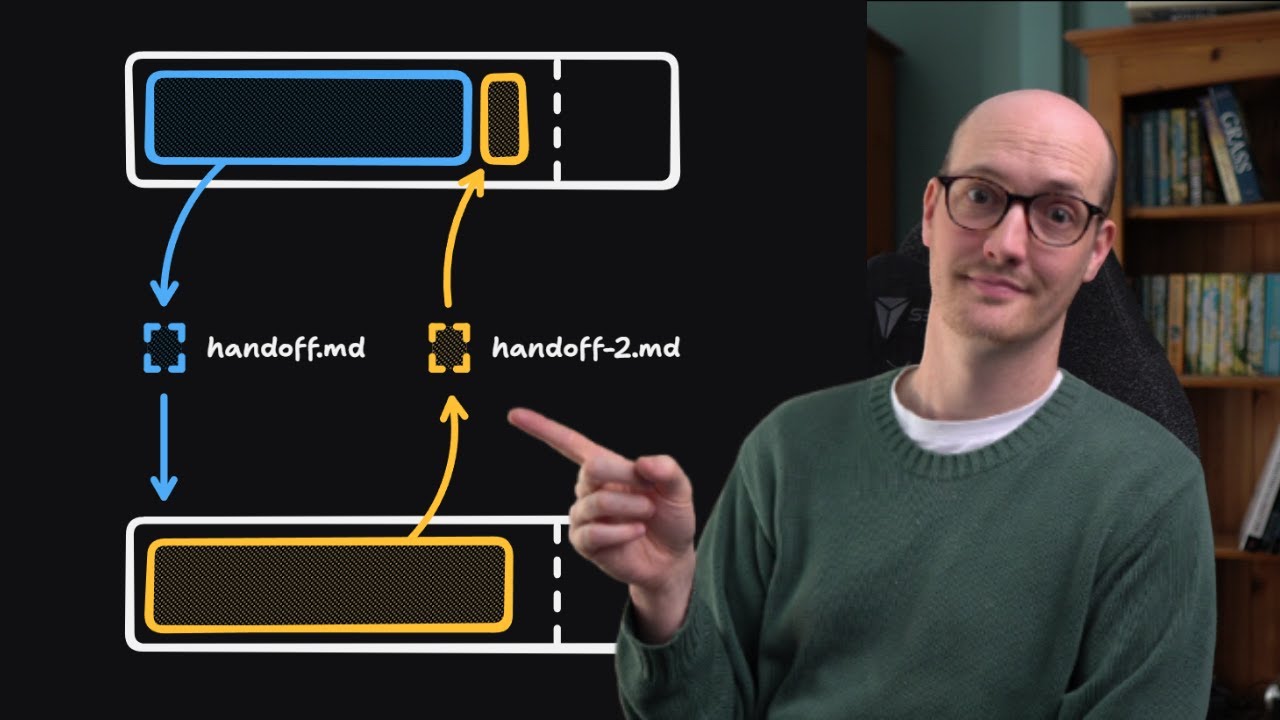

Now, just to give you a glimpse of the future setup here: the idea is going to be turned into a set of tickets which a kind of AI is going to complete. Now, that set of tickets might end up being lots and lots of different kind of like AIs working at once, or maybe just a big list of tasks that the AI is going to complete sequentially. So, if this idea involves any kind of research here, any kind of like difficult explore phases as part of building the code, then you may want to include a research phase.

Now, for instance, if you're doing like a Stripe integration or maybe integrating with an API that's not very common, then you might want to create an asset that kind of takes all of the research about that thing like based on your idea and kind of caches it and puts it inside the repo or somewhere that your agent can access. Essentially, every time your agent is doing work, it might need to explore the repo in a fresh context window. And if that exploration is difficult, so it's in an external API or it's somewhere that's hard to access, then you'll want to cache it in a research.md asset and you'll definitely want to run a research phase at this point.
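As a rough illustration of the caching idea, here is a minimal Python sketch that renders findings into text for a `research.md` file and checks whether the notes have aged out. The function names, the file layout, the placeholder findings, and the 14-day cutoff are all assumptions made for this example; the transcript only says that research should be cached somewhere the agent can read it and, later on, that it tends to go out of date.

```python
from datetime import date, timedelta


def render_research(topic: str, findings: list[str], gathered_on: date) -> str:
    """Build the text of a research.md file (layout is an assumption)."""
    lines = [f"# Research: {topic}", f"Gathered: {gathered_on.isoformat()}", ""]
    lines += [f"- {item}" for item in findings]
    return "\n".join(lines) + "\n"


def is_stale(gathered_on: date, today: date, max_age_days: int = 14) -> bool:
    """True when the notes are older than max_age_days (arbitrary cutoff)."""
    return today - gathered_on > timedelta(days=max_age_days)


# Placeholder findings; real ones would come from the research session.
text = render_research(
    "payments API integration",
    ["placeholder finding one", "placeholder finding two"],
    gathered_on=date(2025, 1, 1),
)
print(text)
print(is_stale(date(2025, 1, 1), today=date(2025, 2, 1)))  # True
```

Writing the result to disk could be as simple as `Path("research.md").write_text(text)`, and the date stamp gives a later session a cheap way to decide whether to trust the notes or redo the research.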

The next step after research is to get to prototyping. Now, in the prototype stage, we're still not really sure what we're actually building and even maybe why we're building it. Prototyping is really important if you need to impose your taste on the outcome. In other words, maybe you need some UI that needs to look a certain way or behave a certain way. You're not quite sure which one to do. What I tend to do is just chuck up a bunch of different ideas on a throwaway route, which is kind of like the LLM showing me all of the different ways it can think of to build out the prototype.

I then iterate on the prototype inside a couple of sessions and say, "Okay, no, that one looks like the best." I found that doing this early is absolutely essential because then you can actually commit the prototype to your codebase and then make that available to the agent when it actually goes to implement it. The next step, we are in step four now, is to create a PRD. Now that we understand a bit more about the kind of like external APIs that we're using in the research phase, now that we understand a bit more about the prototype and we've actually seen some code, it's time to start actually properly describing the destination.

We should now feel confident in ourselves that we can kind of like understand the end state, what we're trying to create at the end. We won't know all of the implementation decisions yet. We will just kind of know the basic stuff that the user is going to see and the way that it's going to behave. We don't have to call this a PRD, by the way. A PRD is a product requirements document, but really it's just some kind of document that describes the end state of where we're going. Now, in the process of creating this end state, we really need to hammer out the design.

And this means we need to prompt the agent to absolutely grill us, walking down every part of our decision tree. I have a write-a-PRD skill that is purpose-designed for this, which I will link to below if you're interested. But once we've created the PRD, then it's time to actually start breaking down the PRD into some kind of implementation plan. For those of you who are not developers or you've never used a, I don't know, a Kanban board or a Jira board or anything like that, a Kanban board is just a list of tickets that have blocking relationships between them.

We're essentially just describing the work that needs to be done. So I then have a separate skill for turning my PRD into separate issues. We could create a single sequential plan that turns the PRD into like actual code. But with a Kanban board, you actually get to parallelize really effectively. And so I can just literally go on my Kanban board, find all of the tickets that aren't blocking and spin up an agent for each one and get it to resolve it. But of course, what I'm starting to talk about here is execution. So in some kind of loop here, run a coding agent to execute all of the tickets on the Kanban board.
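To make the "tickets with blocking relationships" idea concrete, here is a small Python sketch of a board as a list of tickets and a helper that picks out the ones ready to hand to an agent. The `Ticket` fields, the `ready_tickets` name, and the example tickets are hypothetical and only illustrate the data structure; a real board would live in an issue tracker.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Ticket:
    id: str
    title: str
    blocked_by: set[str] = field(default_factory=set)  # ids of blocking tickets
    done: bool = False


def ready_tickets(board: list[Ticket]) -> list[Ticket]:
    """Return open tickets whose blockers have all been completed."""
    done_ids = {t.id for t in board if t.done}
    return [t for t in board if not t.done and t.blocked_by <= done_ids]


# Hypothetical board: T2 cannot start until T1 is finished.
board = [
    Ticket("T1", "Add billing schema"),
    Ticket("T2", "Build checkout route", blocked_by={"T1"}),
    Ticket("T3", "Write pricing page copy"),
]
print([t.id for t in ready_tickets(board)])  # ['T1', 'T3']
```

Every ticket returned by `ready_tickets` is independent of the others at that moment, which is what makes it safe to start one agent per ticket in parallel.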

Most times you won't need to parallelize this. Most times a sequential agent just working through each ticket will be enough. And for me, this is a Ralph loop which works really, really effectively with this setup. And I'll drop some links below on writing about Ralph that I've done. Now, finally, once you've done with execution, you've got a completed asset for you to actually look at, then you get the agent to create a QA plan for the human to QA the completed work. And what this usually results in is more tasks in the Kanban board and going through the execution loop again.
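The sequential execution described here can be sketched as a plain loop that hands one ticket at a time to an agent and retries until the ticket is resolved or an iteration budget runs out. This is only an approximation in the spirit of the transcript: the details of a Ralph loop are not spelled out in this video, and `run_agent`, `max_iterations`, and the stand-in agent below are assumptions for illustration rather than any real tool's API.

```python
from typing import Callable


def run_tickets_sequentially(
    tickets: list[str],
    run_agent: Callable[[str], bool],
    max_iterations: int = 20,
) -> list[str]:
    """Work through tickets in order; return the tickets still open."""
    remaining = list(tickets)
    for _ in range(max_iterations):
        if not remaining:
            break
        # Each iteration stands for one fresh agent session on the next ticket.
        if run_agent(remaining[0]):
            remaining.pop(0)
    return remaining


# Stand-in agent for the example: "flaky ticket" only succeeds on a second try.
attempts: dict[str, int] = {}


def fake_agent(ticket: str) -> bool:
    attempts[ticket] = attempts.get(ticket, 0) + 1
    return ticket != "flaky ticket" or attempts[ticket] >= 2


left = run_tickets_sequentially(["ticket A", "flaky ticket", "ticket B"], fake_agent)
print(left)  # []
```

The iteration cap is the design choice that matters for running unattended: without it, a ticket the agent can never resolve would keep the loop spinning indefinitely.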

So you will tend to loop these last three steps quite a few times until you iterate towards a perfect product. And QA here also involves a human actually going and reading the code that has been produced during the execution loop. That might not always be needed, especially if you're using a kind of gray box architecture that I've talked about in previous videos. But overall, these seven phases are the things I'm thinking about whenever I'm working with an AI agent. We start with the idea, some kind of app or feature or refactor. If we know there are external dependencies and difficult-to-execute explore phases, then we cache it in a research phase.

And by the way, this research generally only lives for the lifetime of this sprint essentially, or the lifetime of the idea that we're imposing on the app. The reason for that is that research can go out of date or it can just rot away essentially and actually cause our agent to take a wrong turn where it's not needed. If I need to impose my taste, then I will use a prototype here. So I will really just sit with an agent, human in the loop, to hash out some ideas. This is not just for design as well.

It can be for a software architecture too, or let's say testing something out with an external service. This is an essential step because by the time we get to the PRD it's a little bit too abstract. You really need concrete feedback first. Then I write the PRD, which is the documentation, the spec for where we are going. Next, I make a kind of understanding of the journey towards the PRD by turning it into a Kanban board. I generally use GitHub Issues for both the PRD and the Kanban board, by the way. It's just an easy thing I found, although GitHub doesn't have yet a kind of built-in way to represent blocking relationships between tickets.

So, you might be just better off with something like Linear, which does. Once the Kanban board is all ready and set up, then I execute it in some kind of loop. For me, that's a Ralph loop. You could also, I suppose, do execution human-in-the-loop style where you sit and execute the tickets individually, but I generally find with all of this setup, with the research, with the prototype, with the Kanban board, with the PRD helping it, you can totally run this execution loop AFK and the results will be really good. And to make sure that they're really good, we then enter a QA phase where we get the agent to produce a QA plan.

Then a human, yes, a human, yep, we're here, actually walks through and QAs the completed work and then produces more tickets for the Kanban board, which then go and are executed, more QA. You get the idea. But what do you think about this? What did I get wrong and what am I missing here? I imagine these phases will grow to eight phases and nine phases as I get more ideas. There's no explicit mention of code review here really. I suppose I could do that as part of the execution flow. I suppose maybe it comes under QA, but you know, it's definitely an essential step to producing good code.
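The execute, QA, new tickets cycle described above can be sketched as an outer loop that keeps going until a QA pass produces no follow-up tickets. Everything named here (`iterate_with_qa`, `execute`, `human_qa`, `max_rounds`, and the scripted feedback) is hypothetical; in practice the QA step is a person working through an agent-written QA plan, not a function call.

```python
from typing import Callable


def iterate_with_qa(
    backlog: list[str],
    execute: Callable[[str], None],
    human_qa: Callable[[list[str]], list[str]],
    max_rounds: int = 5,
) -> tuple[int, list[str]]:
    """Alternate execution and QA until QA raises no new tickets."""
    rounds = 0
    while backlog and rounds < max_rounds:
        for ticket in backlog:
            execute(ticket)
        # QA reviews the completed work and returns follow-up tickets.
        backlog = human_qa(backlog)
        rounds += 1
    return rounds, backlog


# Scripted stand-ins: the first QA pass finds one issue, the second finds none.
executed: list[str] = []
scripted_feedback = [["fix empty-state layout"], []]


def scripted_qa(completed: list[str]) -> list[str]:
    return scripted_feedback.pop(0)


rounds, open_tickets = iterate_with_qa(
    ["build settings page"], executed.append, scripted_qa
)
print(rounds, open_tickets)  # 2 []
```

The `max_rounds` cap is an assumption added so the sketch always terminates; the transcript itself only says these steps tend to be repeated "quite a few times".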

Either way, you can tell that I care about good code, and if you do too, then you should check out my newsletter. But whether you sign up or don't, thanks for watching, and I'll see you very soon.
