Robots.txt

3 videos across 2 channels

Across these videos, robots.txt appears as both a gatekeeper and a facilitator for web automation. It tells AI agents what to crawl and what to ignore, while newer tools and standards push sites toward being agent-ready, with clear sitemaps, exposed APIs, and content designed for machine interaction. The talk on Cloudflare’s crawl API demonstrates how respectful crawling can coexist with site protections, and how robots.txt rules shape access across sites with differing policies. Resilience and visibility continue to shape how the file is used on an AI-driven web.
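To make the "crawl or ignore" idea concrete, here is a minimal sketch of how a well-behaved agent might consult robots.txt before fetching a page. It uses Python's standard-library `urllib.robotparser`. The robots.txt content, the `ExampleAIBot` and `OtherBot` agent names, and the `example.com` URLs are all invented for illustration and do not come from the videos.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt, invented for illustration only.
# It blocks one named AI crawler entirely, keeps /private/ off limits
# for everyone else, and advertises a sitemap.
ROBOTS_TXT = """\
User-agent: ExampleAIBot
Disallow: /

User-agent: *
Disallow: /private/
Allow: /

Sitemap: https://example.com/sitemap.xml
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)

# The named AI crawler is disallowed everywhere.
blocked_bot = parser.can_fetch("ExampleAIBot", "https://example.com/docs/")

# Any other agent falls back to the "*" group.
other_docs = parser.can_fetch("OtherBot", "https://example.com/docs/")
other_private = parser.can_fetch("OtherBot", "https://example.com/private/x")

# Sitemap URLs listed in the file (requires Python 3.8 or later).
sitemaps = parser.site_maps()

print(blocked_bot, other_docs, other_private, sitemaps)
```

Two caveats on this sketch. The standard-library parser applies rules in the order they appear in the file, which can differ from the longest-match behavior that some crawlers implement, so results for overlapping rules may vary between tools. And robots.txt is advisory: it only works for crawlers that choose to check it, which is why the talks pair it with other protections.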

How to Make Your Website Agent-Ready | Cloudflare Engineering Meetup Lisbon

The talk explains why websites must be made agent ready for AI agents, outlining standards, tools, and practical steps l

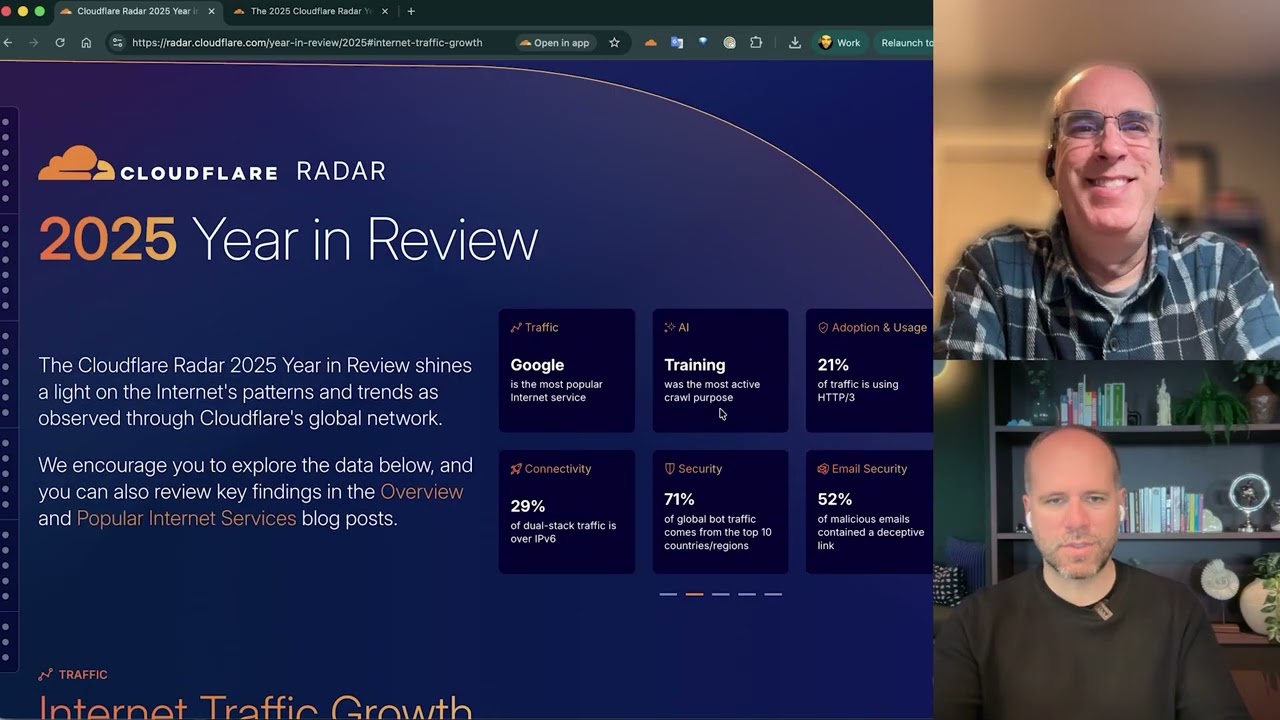

AI, DDoS, and the Internet in 2025 | Cloudflare Radar Year in Review

The video covers Cloudflare's 2025 Radar year-in-review, highlighting how AI-driven metrics, crawling behavior, post-qua