Moltworker (for OpenClaw) & Markdown for Agents: Running AI on Cloudflare


A discussion about adding Markdown support for agents, highlighting the rapid ideation-to-implementation timeline across teams and the excitement around this new capability.

Cloudflare debuts Markdown for Agents and Moltworker, showing how private AI agents can run fast, token-efficiently, and on Cloudflare's network using Workers, R2, and AI Gateway.

Summary

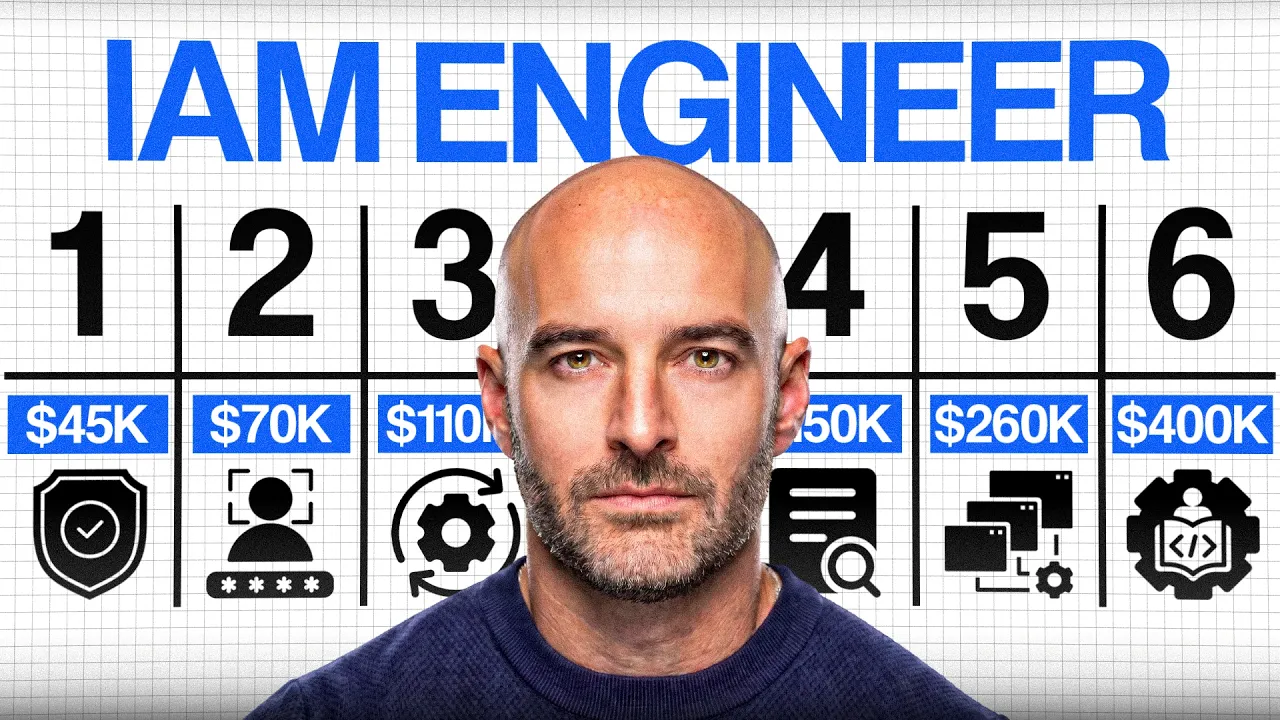

Cloudflare’s Celso Martinho joins host João Tomé to discuss two recent projects: Markdown for Agents and Moltworker, Cloudflare's port of OpenClaw (previously named Clawdbot, then Moltbot). Martinho explains how Markdown, a format more than 20 years old, helps AI systems focus on semantic content by stripping away HTML packaging, which makes token usage cheaper and faster. Markdown for Agents automatically converts HTML to Markdown on the network when an agent requests Markdown, reducing token waste and speeding up AI requests. Moltworker demonstrates how a private AI agent can run on Cloudflare’s stack using Workers, Zero Trust authentication, Browser Rendering, and storage emulation via R2, with AI Gateway enabling flexible model selection and fallbacks. The discussion emphasizes that Markdown for Agents went from idea to ship in about one week, driven by cross-team collaboration and AI-assisted development. A live demo shows content negotiation: requesting Markdown yields clean Markdown instead of cluttered HTML. Moltworker is presented as a proof of concept, and Martinho stresses that Agents SDK and native APIs are expected to mature into a more efficient and secure way to build personal AI agents. The conversation also covers broader Cloudflare initiatives such as Radar charts for content-type trends and Pay Per Crawl for observability and fair monetization of content. Martinho and the host agree that AI is increasingly useful and that embracing it can strengthen professional workflows. The conversation closes with a nod to the community’s excitement and the ongoing open-source work around Moltworker.

Key Takeaways

- Markdown for Agents automatically converts HTML to Markdown on Cloudflare's network when agents request Markdown, enabling cleaner content and lower token costs.

- Converting a 16,000-token HTML page to Markdown drops the token count to about 3,000, substantially reducing LLM context window usage.

- Moltworker is a proof of concept showing how private AI agents can run on Cloudflare using Workers, R2 as storage, Browser Rendering, and AI Gateway for configurable AI providers and fallbacks.

- The architecture combines Cloudflare's global network with Workers to forward Markdown requests and perform the actual HTML-to-Markdown conversion close to the edge.

- Delivery happened in about one week from idea to ship, illustrating a fast, cross-team collaboration rhythm.

- OpenClaw's lineage (Clawdbot, then Moltbot, then OpenClaw) is discussed, with Moltworker positioned as a stepping stone toward more native agent frameworks like Agents SDK.

- Radar is used to track Markdown adoption trends across AI tooling, illustrating growing demand for Markdown-first content in AI pipelines.
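
As a rough worked example of the token figures quoted above (about 16,000 tokens as HTML versus about 3,000 as Markdown), the sketch below computes the saving. The function name `tokenSavings` is hypothetical, and the inputs are the rounded numbers from the conversation, not measured values.

```typescript
// Hypothetical helper: compare the token count of a page served as HTML
// with the token count of the same page served as Markdown.
function tokenSavings(
  htmlTokens: number,
  markdownTokens: number
): { saved: number; percentSaved: number } {
  if (htmlTokens <= 0) {
    throw new RangeError("htmlTokens must be positive");
  }
  const saved = htmlTokens - markdownTokens;
  return { saved, percentSaved: (saved / htmlTokens) * 100 };
}

// Rounded figures from the episode: ~16,000 tokens as HTML, ~3,000 as Markdown.
const blogPostSavings = tokenSavings(16000, 3000);
console.log(blogPostSavings); // saved: 13000, percentSaved: 81.25
```

With these rounded inputs, roughly four out of five tokens are removed from the context window before the model sees the page.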

Who Is This For?

Dev teams and architects building AI-powered agents on Cloudflare, and publishers or developers curious about edge-native AI workflows and cost-efficient content delivery.

Notable Quotes

"AI alone doesn't work without talented engineers behind it."

—Celso Martinho emphasizes that people are essential to making AI projects succeed.

"We were able to go from idea to ship the product today in about one week."

—Highlighting the rapid, cross-team delivery that powered Markdown for Agents.

"If they prefer markdown to HTML, our network will automatically convert HTML to markdown in real time."

—Explains the core content-negotiation feature of Markdown for Agents.

"Moltworker is a proof of concept, not a final product, but it proves you can run complex apps on our stack."

—Clarifies the intent and scope of Moltworker.

"This is not a Cloudflare product. It’s a proof of concept. Keep an eye on Agents SDK for future builds."

—Sets expectations about product scope and future direction.

Questions This Video Answers

- How does Markdown for Agents reduce token usage on Cloudflare's network?

- What is Moltworker and how does it run private AI agents on Cloudflare?

- How can I enable Markdown for Agents in the Cloudflare dashboard?

- What is AI Gateway and how does it improve AI model selection for agents?

- What are the security considerations when running Moltworker inside a sandbox on Cloudflare?

Cloudflare, Markdown for Agents, Moltworker, OpenClaw, AI Gateway, R2 storage, Workers platform, Zero Trust, Browser Rendering, Radar charts

Full Transcript

Hello everyone and welcome to This Week in NET. It's the Friday the 13th edition, February 13, 2026, and this week we're going to talk about AI agents, OpenClaw and friends, and also how agents seem to perform better with an old language that is over 20 years old: Markdown. For that, returning to the show, I have Celso Martinho, a VP of engineering with a few great products in his hands. As usual, I'm your host, João Tomé, and this conversation was recorded in Lisbon, Portugal. Hello, Celso. Hey, how are you? Thanks for inviting me. You're back on This Week in NET.

We had an episode more than a year ago. It's been a long time. Long time. We published two exciting blog posts recently: one today, the day we're recording, called "Introducing Markdown for Agents," and the other called "Introducing Moltworker: a self-hosted personal AI agent, minus the minis," which refers to the Mac minis that many people are using to try to make OpenClaw work. Why not start with the blog post from today, the Markdown for Agents blog? Fun fact: how many days did it take the team to go from idea to execution on this?

This felt very good because we started discussing adding this capability to our network last week. It was one of those magical moments where all the teams got together. We came up with a plan, we brought in some really talented engineers from a couple of teams, and we were able to go from idea to ship the product today in about one week. So it felt really good to do something like this. Yes. In what way did AI also help make it faster? Well, I'd say AI is helping everywhere these days.

But AI alone doesn't work without talented engineers behind it, and that was the case. For those who don't know, first, why is Markdown important in the case of agents and LLMs? Why is it different? It's an old technology, a language over 20 years old. Well, Markdown is not new. It's been around for quite a few years now. Everybody uses Markdown for a number of use cases. It's especially important for LLMs and AI because LLMs care about semantic value. They care about the content itself. They don't care about the package, everything that's around the content.

And if you look at the web today and you're looking at a page, take our blog post for instance, you have the content, but you also have navigation elements and images all over. Sometimes you have advertising. And typically the web is not made of Markdown. It's made of HTML. The problem with HTML is that it has a lot of packaging. The letter, I'm using a metaphor here, is just a small percentage of the package that HTML is. So Markdown tries to solve that problem, and what it does is take away all the irrelevant elements of the HTML page and focus on the content itself, which is what LLMs and AIs need.

So Markdown has organically become very popular in the AI world. Every AI pipeline, every AI system is converting HTML to Markdown today. And so what we thought was: why don't we make this really easy for our customers? Instead of putting the burden on our customers to convert their content to Markdown if they want to make it available to AIs and LLMs, why don't we do it automatically using our network? And so that's what we did today.

Our customers can go to the dashboard and just click a button. If they have their websites with us, they just enable Markdown for Agents, and then what happens is that when any AI agent or AI system tries to fetch content from our customer, if it says in the request that it prefers Markdown to HTML, our network will automatically, in real time, and efficiently convert HTML to Markdown and serve Markdown to the agent directly. So this has a number of benefits. It's very easy for our customers to serve AI agents.

It's also cost efficient and stops the token waste, which is really important when we talk about AI costs. So that's it. The blog post actually has a number about the blog post itself: if you're reading this blog post, it takes over 16,000 tokens in HTML. Exactly. And much less, 3,000 tokens, when converted to Markdown. So that's important for you not to pay too much to the LLM, right? It's very important because there's this thing called context windows in AI. They are limited.

They should not be abused. Big context windows with irrelevant tokens also make the AI slower and less accurate. So the more you can do to save tokens and take the things that have zero semantic value out of the context window, the better. The blog post also explains many things about how it was built, and the things that you can use to check for yourself. Do you have something to show from the blog that could be relevant for people?

I mean, I can show the diagram of how we built this. Cloudflare is in a privileged position because we have all the building blocks to do something like this. We have a global network, which is very flexible in terms of how we can plug in logic and how we can manipulate content that goes across our network, and then we also have Workers, our Workers platform. So what we did is a mix of both worlds, where the global network is aware of agents asking for Markdown in the request, and if that's the case, it forwards the request to a Worker that does the actual HTML-to-Markdown conversion and then serves the Markdown to the agent.
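
The routing decision described here, checking whether a request prefers Markdown before handing it to a converter, can be sketched as a small `Accept` header check. This is a minimal illustration and not Cloudflare's implementation: the function names `parseAccept`, `prefersMarkdown`, and `routeRequest` are hypothetical, and the rule "Markdown wins when its q-value is at least HTML's" is an assumption made for the example.

```typescript
// Parse an Accept header into a map of media type -> q-value (default q = 1).
function parseAccept(header: string): Map<string, number> {
  const weights = new Map<string, number>();
  for (const part of header.split(",")) {
    const [rawType, ...params] = part.trim().split(";");
    const type = rawType.trim().toLowerCase();
    if (type === "") continue;
    let q = 1;
    for (const param of params) {
      const [key, value] = param.trim().split("=");
      if (key.trim().toLowerCase() === "q") {
        const parsed = Number.parseFloat(value ?? "");
        if (!Number.isNaN(parsed)) q = parsed;
      }
    }
    weights.set(type, q);
  }
  return weights;
}

// Assumed rule: the client prefers Markdown when it lists text/markdown
// explicitly with a q-value that is non-zero and not lower than text/html.
// Wildcards such as */* are deliberately not treated as a Markdown preference.
function prefersMarkdown(acceptHeader: string | null): boolean {
  if (!acceptHeader) return false;
  const weights = parseAccept(acceptHeader);
  const markdown = weights.get("text/markdown") ?? 0;
  const html = weights.get("text/html") ?? 0;
  return markdown > 0 && markdown >= html;
}

// Hypothetical routing step: either pass the HTML through unchanged or
// forward the request to a converter.
function routeRequest(acceptHeader: string | null): "convert-to-markdown" | "serve-html" {
  return prefersMarkdown(acceptHeader) ? "convert-to-markdown" : "serve-html";
}
```

A typical browser header such as `text/html,application/xhtml+xml,*/*;q=0.8` routes to `serve-html`, so ordinary visitors are unaffected in this sketch.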

So it was one of those cases where we had everything that we needed. We just needed to put all the pieces together and make it work. Yes. So I won't ask you for feedback. Specifically, the blog post also mentions new charts on Radar that are related to Markdown as well. Yeah. So we do know that Markdown usage is increasing. We've been looking at what agent frameworks and AI coding tools are doing, and we've seen that increasingly they're trying to fetch Markdown from the web instead of HTML.

So we think the trend has started, but we wanted to track that over time, and Radar is the ideal place to track internet trends. So again, one of those situations where we went to the team: "Hey, can we start tracking content types across the internet over time?" And they said, "Sure, how much time do we have to build this?" And we told them one week, [laughter] and it's done. It was fast. Do you have a demo that you can show of how it works?

Yeah, I can maybe just, you know, from the dashboard, or even get my terminal. If I go here, what I'm doing is fetching the blog post of the announcement that just came out. I'm basically simulating a web request using curl, which is a popular command-line tool, and I'm including the special header Accept. This is what we call content negotiation. What I'm saying in Accept is that I prefer Markdown to HTML. So I'll actually do the two examples. If I put the normal HTML, I get HTML in the response, and you can see a lot of things that don't matter to AI, HTML tags all over. But if I do the same request and say that I prefer Markdown instead of HTML, what happens is I get a Markdown version of the same content. Okay. It's cleaner, and it was automatically converted by our network.
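
The demo uses curl, but the same content negotiation can be sketched from an agent's side with the standard `fetch` API. This is a minimal sketch under stated assumptions: `text/markdown` is assumed to be the media type that signals the preference, the helper names are hypothetical, and any URL passed in is the caller's choice since the demo's exact URL is not given in the conversation.

```typescript
// Assumption: "text/markdown" is the media type an agent sends to ask for
// Markdown, with HTML listed as a lower-priority fallback.
const MARKDOWN_FIRST_ACCEPT = "text/markdown, text/html;q=0.9";

// Build request headers that state a preference for Markdown over HTML.
function markdownFirstHeaders(): Record<string, string> {
  return { Accept: MARKDOWN_FIRST_ACCEPT };
}

// Fetch a page while asking for Markdown first, and report which content
// type the server actually returned alongside the body.
async function fetchPreferringMarkdown(
  url: string
): Promise<{ contentType: string; body: string }> {
  const response = await fetch(url, { headers: markdownFirstHeaders() });
  if (!response.ok) {
    throw new Error(`request failed with status ${response.status}`);
  }
  return {
    contentType: response.headers.get("content-type") ?? "unknown",
    body: await response.text(),
  };
}
```

Checking the returned `contentType` matters because a site that has not enabled the feature would simply answer with HTML, and the agent then has to convert it itself.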

Mhm. So that's it. Quite amazing. We didn't discuss this at the beginning, but before going into Moltworker, why don't you give us a glimpse, for those who don't know and didn't check the episode that we recorded before, of your many different projects at Cloudflare? Over a year ago those were different. Now you have even more in some situations. What are the projects you oversee right now? Again, the kind of question that we need to put a date on. I think that the teams in Lisbon are doing the usual structural projects like Workflows, email, Radar. We've talked about those.

And then a bunch of AI features. By the way, it's not just us building those features out of Lisbon. A lot of the teams at Cloudflare are doing AI features and products, but we're doing a lot of those as well. Two examples are AI Search, which is a very important feature for our customers, where they can easily build search engines for the AI age. There's an exciting roadmap of new features coming up. We have Pay Per Crawl, which I think we also talked about in the past.

It's still ongoing, with lots of new ideas for the upcoming months. And then other smaller improvements that we're doing, and in the middle of that, things like Markdown for Agents and Moltworker. Yeah. About Pay Per Crawl: actually, that's a popular one even for publishers, because it's all about blocking crawlers and almost creating an industry there in terms of crawlers paying content creators, right? It's not so much about blocking crawlers. It is important to say that we don't have anything against crawlers, nor do we want to incentivize anyone or our customers to block crawlers.

It's more about knowing what's going on, understanding how crawlers and AI systems are using our customers' content, and how they can monetize that fairly for both parties. So I think what we're trying to do is two things. First, provide observability so that everything is clear and the metrics are there. There's no discussion about what's going on. That's very important, because what we've seen in the past is that people don't know what's happening. So observability first, and then creating frameworks to try to come up with a new business model for the internet, because the advertising business model is not sustainable for a lot of content creators.

So we're trying to come up with new ways to fairly monetize content. Makes sense. About Moltworker specifically, before we go to that, why don't you explain a bit about OpenClaw, what it does, and why it has become such a viral thing, created by a guy in Austria? Actually, it started as Clawdbot, and then in the week we launched it changed to Molt... Moltclaw, right? Yeah. And now OpenClaw. No, Moltbot, and now OpenClaw. Exactly. So Moltworker is actually related to the second name.

Yeah, it was funny that day because I woke up in the morning and a friend of mine sent me a message saying, "Hey, have you heard about this..." What was the first name? Clawdbot. Clawdbot. And I told him, "Actually, no. Because it's trending on Hacker News. I don't know about it." And like 30 minutes later, I'm not kidding, our CEO Matthew Prince told us in the chat, "Hey, this is becoming popular. Everyone's talking about it.

We could build this on top of Cloudflare. Why don't we do it?" [laughter] And so I went from not knowing what Clawdbot was to becoming an expert and trying to port Clawdbot to work on top of Cloudflare in a few hours. So again, one of those magical moments where we came up with a couple of very talented engineers who were motivated to respond to our CEO, and we did a bunch of glue. So we didn't change Clawdbot.

It's the same software package as the official open-source project. For those who don't know, what does it do? It's an open-source personal AI assistant that you control, right? There was a lot of discussion that people need a Mac mini, an Apple Mac mini, or a VPS to make it work, like inside a container. So I think it was the first project to explore this idea of having private personal AI agents, especially background agents that you can interact with, using your own resources, your own AI models.

It is your own personal AI instance. And I think it also became very popular because they came up with hundreds of skills for the assistant, so it can do pretty much everything: talk to chat applications, WhatsApp, Discord, social networks, and it can control the browser and browse the internet. So it had an exponential community effect, and it became very popular very fast. So what we did was basically build a bunch of glue around the official open-source package, and we made it possible to run it on top of our network instead of you having to buy dedicated hardware or a Mac mini.

Everyone was talking about buying Mac minis to run Clawdbot, and so we made a version of that where you didn't need your own personal computer to run it. You could just run it on top of Cloudflare. And less expensive as well. Yeah, but [clears throat] it is important to say that it was more of a proof of concept that this was possible, and that we now have such a complete stack of APIs and products that make things like this possible. But at the same time, if you ask me if this is the ideal way of running a background personal assistant on top of a network like ours, I would say it's not.

I think the solution will be to use more of our native APIs or to rely on a framework like Agents SDK, which is something that we're actively working on, and I think in the future that will provide the necessary tools and APIs to build something like, what's the name now? OpenClaw. Yeah, but even more efficiently and cost-effectively. And there's a security side as well, because it's a very raw tool in terms of vulnerabilities. Some have been discovered, so having some security present... Yeah. I mean, I'm not going to discuss the team's security decisions.

It is important to say that this runs in a sandbox. So we're discussing security inside a... It's contained. It's contained. It's not, in my opinion, the ideal model, but again, it runs inside a sandbox, so it offers some protection. If the end user of the AI assistant really knows what they're doing and understands the technical tradeoffs, it should be fine. If that's not the case, it can be a problem. In terms of practical use, do you have any examples from the blog that you can show about Moltworker?

Yeah, I mean, I can show you the blog. It has some examples. We tell people how we built it, which was the main goal of the blog post. So the architecture is basically: we use a bunch of products, including Zero Trust for authentication. Then we have a Worker that simulates a bunch of things that the official OpenClaw package needs. For instance, OpenClaw needs a browser to browse the internet, and what we did was build a proxy between the CDP protocol that OpenClaw requires to operate the browser and our Browser Rendering product.

So when OpenClaw needs to use a browser, what it's actually using is our Browser Rendering APIs, and this is just one example of the things that we ported to use Cloudflare. Another thing is that OpenClaw expects storage, like a hard disk in your Mac mini, so we use R2 to emulate that storage device. That's another example, things like that. And for AI, for instance, they typically connect to an AI provider like Claude from Anthropic, or OpenAI. What we did was add support for AI Gateway, which is another Cloudflare product, and through AI Gateway you can say what AI providers you want to use, and then you can have more sophisticated logic on top of that: you can have fallback models, you can do caching, and you have better observability on how the model is being used. So there's a bunch of benefits from using AI Gateway that you can now take advantage of.
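
The idea of fallback models mentioned here can be illustrated with a small sketch. This is not AI Gateway's API or configuration format; it only shows the general pattern of trying providers in order until one succeeds. The `Provider` type, the `completeWithFallback` function, and the stub providers in the usage note are all hypothetical.

```typescript
// Hypothetical shape for a model provider: a name plus a function that
// takes a prompt and resolves to the model's text output.
interface Provider {
  name: string;
  call: (prompt: string) => Promise<string>;
}

// Try each provider in order and return the first successful answer,
// remembering why earlier providers failed.
async function completeWithFallback(
  providers: Provider[],
  prompt: string
): Promise<{ provider: string; text: string }> {
  const failures: string[] = [];
  for (const provider of providers) {
    try {
      const text = await provider.call(prompt);
      return { provider: provider.name, text };
    } catch (err) {
      const reason = err instanceof Error ? err.message : String(err);
      failures.push(`${provider.name}: ${reason}`);
    }
  }
  throw new Error(`all providers failed (${failures.join("; ")})`);
}
```

In a usage example with stubs, a first provider that always throws followed by a second that echoes the prompt would return the second provider's result. A gateway placed in front of the providers can apply the same ordering centrally, which is what lets the agent's own code stay unchanged when models are swapped.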

It was not done for this, but it's actually perfect for this. It showcases how powerful and flexible our platform has become, and that was the main goal. This is not a Cloudflare product. This is not a Cloudflare feature. It was also important for us to make that clear. But it was an excellent opportunity for us to show that you can now run very complex and demanding applications on top of our stack. The blog also talked about the sandbox container there, and also some of the applications, Discord, Telegram, in terms of writing things for you.

You can give it a prompt. Yeah, we put a few demos here. We connected it to a Slack instance, so you can go into a chat room and start talking to it. One of the more complex things we did was, where is it, it's here. So we told Moltbot, at the time that was the name, to go to our developer documentation website and just browse around, but to record a video of that. Mhm. And so what Moltbot did was start browsing using the Browser Rendering APIs, one of our products, but then at the same time it downloaded FFmpeg, which is very popular open-source software, to the sandbox.

It took frames of the developer docs pages, like screenshots, using our APIs. It put the screenshots in files on the disk, in the R2 APIs, and then it used FFmpeg to convert the screenshots to a video, and it gave us the video and posted the video on Slack. Okay. And it took it like 30 seconds, 1 minute to do this, and that's quite impressive. And you just asked for a video, and it understood what it needed to do to achieve that. So: I want a short video where you browse through Cloudflare developer documentation.

Send it to me as an attachment. Feel free to download Chrome and FFmpeg. By the way, when we said download Chrome, that's when we tricked Moltworker into thinking that Chrome is Browser Rendering. So behind the scenes, that's the glue we did. It's quite amazing how it actually sorted things out. It's like having an intern that is actually very capable, and it does things for you, especially if it's on the web. That's kind of an insult to interns, but yes, I understand the metaphor. [laughter] A good intern. Anything more?

No, that's it. I mean, Moltworker also became hugely popular. We now have like 10,000 stars on our GitHub repo. We made it open source and we're still supporting the project, just out of respect for everyone who decided to try it and experiment with it. So we're still fixing bugs and improving things, but again, I want to say this twice: this is not a Cloudflare product. It's a proof of concept, and people should keep an eye on Agents SDK, because it's going to be amazing to build things like this in the near future.

Makes sense. Regarding the examples that you've seen that were more mind-boggling, be that Moltworker or others related to AI agents, what are the use cases you have seen? I mean, ever since the year started, I'm constantly being surprised on a day-to-day basis by how people are using AI and how sophisticated AI coding and personal assistant tools have become. It's just unbelievable. And I think I'm not alone. You probably feel the same. I feel the same. Yeah. Everyone's using AI, and I don't think AI is taking anyone's jobs.

It's intensifying everyone's jobs in a good way. And I think that if we stop being skeptical about AI and we just embrace it and know how to use it, it can really become a very powerful tool for our professional lives. Yes, makes sense. So you don't think it's overhyped? I think we've passed that phase. I think now we're realizing how useful it can be. It's a long discussion, but I'm more on the side of embracing now than being skeptical.

And that's it. Thank you for inviting me. Thank you for being here. And that's a wrap for this week. Visit ThisWeekinNET.com to subscribe to the podcast wherever you listen to podcasts and explore nearly four years of conversations about how the internet works and evolves. For deeper technical dives, check out the Cloudflare blog. See you next week.
