New Qwen 3.6 27b Local + OpenCode Just Got DESTROYED?

Chapters

Qwen 3.6 27B and other models have been re-added to OpenRouter, highlighting the available options and why this matters for easy access to AI models.

Qwen 3.6 27B pops back up on OpenRouter, but the experience is unstable and slower than last week, pushing viewers to compare OpenRouter/OpenCode against Gemma and Claude-based options.

Summary

Income stream surfers' latest dive into open-source AI centers on Qwen 3.6 27B returning to OpenRouter, with the host weighing its real-world practicality. The creator shows how OpenRouter's model list currently surfaces API models such as GPT-5.5 and DeepSeek V4 Pro alongside dense models such as Qwen 3.6. The video foregrounds two standout open-source candidates: Gemma 4 31B, with multimodal reasoning and support for 140 languages, and Qwen 3.6 27B, touted as small yet functional enough to run on modest hardware. A recurring theme is the tension between hype and actual usability: the host finds the model inconsistent and prone to stalling in OpenCode, and still slow (38 tokens per second) after switching to Claude Code Router. A sponsorship segment for HarborSEO.ai, the creator's own product, illustrates the mix of hands-on testing and monetization. The host sets up Supabase and Claude API skills and an Astro project to show how these tools fit into an agentic coding workflow. Throughout, the question remains: can these compact models truly replace heavier models for everyday coding tasks? The verdict is candid: the host suspects a nerf or some other change has softened performance, and viewers are left weighing alternatives such as Gemma and Claude-based setups.

Key Takeaways

- Qwen 3.6 27B reappeared on OpenRouter, but its performance in OpenCode was inconsistent, and the session repeatedly stalled.

- Gemma 4 31B is highlighted as a dense model with multimodal reasoning and support for 140 languages, positioned as viable for an old laptop.

- Dense models like Qwen 3.6 are marketed as small enough to run locally, yet still face real-world bottlenecks in practical tasks.

- OpenCode and Claude Code Router are presented as alternatives to Claude Code, and the host switches to Claude Code Router when OpenCode keeps stalling, though each brings its own friction points.

Who Is This For?

Developers and AI enthusiasts who want to run capable LLMs locally or on modest hardware, and who are weighing open-source options (Qwen, Gemma) against hosted/edge tooling (OpenRouter, Claude-based flows). If you're evaluating practical usability over model size, this video helps map the trade-offs.

Notable Quotes

"Finally, guys, it's here. Qwen 3.6 27B is back on OpenRouter."

—Opening line confirming Qwen 3.6 27B's return to OpenRouter.

"There's been a lot of talk recently about open-source models, but in particular, open-source models that are good enough or sorry, small enough but good enough to run on your local computer"

—Sets up the core premise about small, local-friendly models.

"This is one of the best models for that."

—Advertisement-like praise for Gemma 4 31B as a compact, capable option.

"I'm not sure how they would even do that because it's an open weights model, but I mean the other day it just seemed so powerful."

—Expresses puzzlement over how an open-weights model like Qwen could have been nerfed on OpenRouter.

"This is pretty unusable. This new one that they've put up here. This is not what we had last week."

—Direct critique of the re-listed Qwen 3.6 27B on OpenRouter, delivered after switching from OpenCode to Claude Code Router.

Questions This Video Answers

- How does Qwen 3.6 27B performance on OpenRouter compare to Gemma 4 31B for local runs?

- Why did OpenCode performance seem to drop after Qwen 3.6 27B was re-listed?

- What are the best open-source models for a low-end laptop in 2024–2025?

- Can Claude Code Router or the Claude API realistically replace OpenCode for day-to-day coding tasks?

- What memory and hardware specs are truly needed to run Qwen 3.6 27B locally at a usable speed?

OpenRouter, Qwen 3.6 27B, Gemma 4 31B, dense models, OpenCode, Claude Code Router, Claude API, Supabase, HarborSEO.ai, open-source AI models

Full Transcript

Finally, guys, it's here. Qwen 3.6 27B is back on OpenRouter. Now, I don't know the politics behind this. I don't know why it was taken off OpenRouter, but they've basically just re-added all of their models today. By the way, guys, if you're curious, if you ever want updates on AI, one of the best places to look for new models is OpenRouter. Just go on OpenRouter, click on Models, and you can see, you know, for example, GPT-5.5 is now out on the API. You can use DeepSeek V4 Pro and you can also use Qwen 3.6 and also all of these other Qwen models.
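Models listed on OpenRouter can also be called directly through its OpenAI-compatible chat completions endpoint. The sketch below is not from the video: it only builds the HTTP request, the model slug `qwen/qwen3.6-27b` is a hypothetical placeholder (check the model page for the real one), and the `OPENROUTER_API_KEY` environment variable name is an assumption.

```python
import json
import os
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
# Hypothetical slug for illustration; copy the exact one from the OpenRouter model page.
MODEL_SLUG = "qwen/qwen3.6-27b"


def build_chat_request(prompt, model=MODEL_SLUG, api_key=None):
    """Build (but do not send) a chat completion request for OpenRouter."""
    # Assumed environment variable name; any secret store would do.
    api_key = api_key or os.environ.get("OPENROUTER_API_KEY", "")
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request):
    """Send the request and return the first choice's text (needs a valid key)."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Calling `send(build_chat_request("hi"))` with a valid key would perform the same kind of round trip that later shows up in the OpenRouter logs.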

Now, why am I talking about Qwen? I just want to talk about this quickly. So, there's been a lot of talk recently about open-source models, but in particular, open-source models that are good enough, or sorry, small enough but good enough to run on your local computer, like an old laptop or whatever, but also give you actual results. So, there are currently two models that are considered to be like this. There is Gemma 4 31B, which has multimodal reasoning, supports 140 languages, and much more. So if you're looking for something that you can literally put on an old laptop and run all day long on OpenClaw, or, you know, whatever it is, it doesn't have to be OpenClaw, it could be Paperclip, your own harness, you know, that kind of stuff, maybe even Claude Code, but without browser use, you could use Playwright instead, then this is one of the best models for that.

This is a 31B model. And then the other one is this one right here, which is Qwen 3.6 27B. Now, these two models, people are saying, are different to the normal kind of open-source and small model. They're called dense models, right? So Qwen 3.6. Let's just see if it mentions the word dense here actually. Yeah, there we go. So this is the marketing, right? The word dense. But there is something about these models that makes them really, really small, but actually have the ability to do functional things for you. My plan today is to show you just how functional they can be.

And remember, you can literally run this on, you know, pretty much any computer. I can't run this because my Mac only has 24 GB of memory, which is just not enough. But I mean, most gaming computers or laptops will have at least 36, which means you should be able to run this completely for free on your computer, and you can also fine-tune it towards your codebase as well. But in today's video, what I'm going to be doing is showing you just how far these models have actually come. Let's jump into things. So, when I say free, by the way, guys, I'm saying free because you can technically run this model completely for free.
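As a rough check on memory figures like these, the weights of a dense model take about (parameter count × bits per weight ÷ 8) bytes. The sketch below is not from the video; the function name and the quantization levels are illustrative assumptions, and it counts weights only, ignoring the KV cache and runtime overhead.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    # Illustrative precision levels; real quantization formats add some overhead.
    for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
        print(f"27B at {label}: ~{weight_memory_gb(27, bits):.1f} GB")
```

Under these assumptions, a 27B model needs about 54 GB for 16-bit weights and about 13.5 GB at 4 bits, before any context memory is added, so whether it fits in 24 GB or 36 GB depends heavily on the quantization used.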

I have to use OpenRouter because, yeah, my Mac just doesn't have the memory. It's a new Mac, but when I bought it, I just didn't understand these things. I should have got the one with 48 or 72 GB of memory. But yeah, so basically I'm just going to set this up, probably inside OpenCode. Okay, so right now I'm just setting the model to use Qwen 3.6 inside OpenCode. I always do this. This is just the way I do things. Okay, so now we select the model here, Qwen 3.6 27B. Now I'm going to load some skills real quick, right?

Um, so I'm going to show you guys this entire process. I'm not going to use any of these skills. I don't want any of these skills. So, let's just grab this. I'm going to be using Supabase today. This video isn't sponsored by Supabase, but they have actually agreed to sponsor me. Um, and I started using them again recently, and I'm going to tell you exactly why I started using it. So, with Convex, I do really, really like Convex, don't get me wrong, and I use it for all my projects. But when you're building something, one of the problems with Convex is you can't start a Convex project without doing it inside the terminal with an interactive terminal.

Now you might be able to. I'm not 100% sure, but I've tried to get around this problem and I can't really get around it because you have to physically start the project, right? But you can do everything with the Supabase API or CLI, right? So you can create a new whatever and it's just a bit more, um, not dev friendly, but friendly to use kind of inside just the terminal, right? And also, for example, if you're doing something like Harbor build, right, which is a website builder, it's really hard to use Convex inside this. So I've started using Supabase a bit more now and I do like the ease of use for Supabase. So I'm going to show you that process today. So if you go on Google and type in Supabase skills, the first thing we're going to actually do is we're going to grab this skill. By the way, there's a link for Supabase in the description and in the pinned comment.

If you don't have an account yet, go and make it with the link, guys. It shows Supabase that the sponsorship is worth it for them. This isn't a sponsored video. I just happen to be using Supabase and just wanted to mention, uh, the link, right? But this is not a sponsored video. So, let's just go here and let's add the skill. So, I'm just going to add the agent skill here. Uh, I want everything. Space, space, space. There we go. Uh, I just want to do Claude Code, to be honest with you. Uh, yeah, whatever.

Let's just press enter. Project. Yes. Okay, there we go. So, that installs the Supabase skills, right? Which will help us with the edge functions part of this. Now, the other one that I would quite like is the Anthropic skill, uh, that allows you to kind of use the API, but I'm just not sure if... Yeah, this looks like it's it. Yeah. Okay. So, I think I can use this to, uh, create AI basically. So, let's try and get this into the correct format. Okay. So, I've just downloaded the skill and also pasted the skill here.

And I'm just saying to Claude Code, uh, make this skill and put it in the correct place. You don't have to do it like this, guys. So, I mean, it's technically not a free video because you'd have to pay for Claude Code, but you can do this without Claude Code. Codex will also do this for you for free. Codex is currently free for GPT-5.4, which is a perfectly good model for making a skill. You could also just put it in the right folder, but, like, I'd get confused by stuff like that, so I'd rather AI just does this for me.

So, there we go. That should now work. So let's do a clear here and then write opencode and then do /skills and then look for Anthropic. Okay, Claude API. Beautiful. So now we have everything we need to make this project. Okay. So let's try a prompt. So first of all, let's load the skills. So /skills and we'll type, um, Claude and select that one. Can we do two skills? Okay, we can't do two skills. So we'll do /supabase, or /skills, sorry. And then look for Supabase. Uh, okay. So, we can't even do two skills at once.

That is kind of annoying, actually. Um, let's just load this one. And then I'm just going to say set up an Astro project with Supabase, please. And then I'm just going to paste this, um, this docs page from Astro. Right. Astro is a really, really good system, guys. I love using Astro. So, let's see how Qwen deals with this original prompt. Okay, so let's go to activity here. Let's see, uh, sorry, let's go to logs and let's just double check. So we are using 3.6. Okay, so the other thing, guys, is you want to press Connect here and just select Astro from this dropdown and then press copy prompt right here, just so that it has all of the information it needs.

Okay guys, so I have a feeling that they released this model, built up some hype, and then they've just killed it, because this seems completely different to the model I used the other day. I'm just going to try one more thing, guys. I'm going to try another, um, like, conversation, just in case something got messed up in that conversation. Okay, so this seems a little bit better actually. I think it just kind of got messed up in that last conversation. I'm not sure why or what happened, but it says zero spent as well. So, I'm not sure why there's no cost right now, which is a little bit strange.

I'm glad that I tried this again. Um, because I feel like this model does have a lot of potential. Uh, but I did get slightly worried just now. So it seems to just be going through everything now. So we'll be back when the basics are done. This looks perfectly normal. This looks perfectly good actually. Okay guys, so to connect Supabase to the project so that OpenCode can just do things, you just need to run supabase login and then run through the login process basically. Uh, and then OpenCode should be able to run things for you.

So, yeah, this now has access to the CLI. So, you can see it's actually running CLI commands now, which is perfect. I have to say, guys, after using this for a little bit of time now, its ability to reason through problems is pretty damn good. One thing I'm noticing is its training data is way out of kilter. Like, I don't know when the cutoff is. Let's just check the cutoff here. So, let's go here. Let's see if it says, um, so released in April. Doesn't actually say cutoff. So Qwen 3.6 27B cutoff date.

Let's see if we can find this. Yeah, so I'm not seeing this anywhere, to be honest with you. Um, yeah, the cutoff date is not clear as far as I can see. Just before we continue, guys, huge shout-out from our sponsor, me. This is HarborSEO.ai, and I'm making it the best and best-value SEO tool on the market. Now, we just did a small update yesterday where I actually realized that we were only showing the last 28 days of data for clicks. So, it's 206 pages published, 120,000 impressions, and 1,200 clicks.

So, the numbers were actually much higher than I originally thought. We were only showing the last 28 days of data here. So, go and lock in this pricing, guys. This is the current pricing, 29 bucks, 49 bucks, or 99 bucks. The pricing is likely going to go up as we release more and more updates just because we're using more AI calls, so we will need to cover our costs. The writer now uses Sonnet 4.6, and it's probably the best SEO content generator on the market. It can generate images for you, um, or it can just use your images from your website.

It uses the context of your website to create amazing articles that rank in ChatGPT, rank in Claude, rank in Perplexity, and also rank on Google and on Bing. There is a link to Harbor in the description of this video. Go and lock in a trial and you will lock in the pricing for life. Go look in the description and in the pinned comment. Thanks for your attention. Let's jump back into the video. However, guys, I have to be really honest with you. I really thought that this was going to be a massive success, but it looks like they might have taken some of the power away from Qwen.

Now, I'm not sure how they would even do that because it's an open-weights model, but I mean, the other day it just seemed so powerful. Now I'm using it, it really is just struggling. Okay, so we're in a pretty good spot here. Let's do /skills and search for Claude. And I'm going to say yes, it's going to be a to-do app, guys. I don't care. All right, I'm just trying to show you an example of how to build something. What I'm actually building is irrelevant, right? So, build me a simple implementation with Claude where I type what I have to do in a day in natural language and it creates the to-dos for me.

Yes, it's a bloody to-do app. Okay, so I don't know if this is OpenCode. I don't know if this is Qwen. I'm not really sure what's going on here, but it just seems to keep stopping. Like, I think it might be OpenCode's tooling. This does happen sometimes. I want to run this test again with Gemma, but I am going to release this video because, um, I think it's worth noting that this model seems to have undergone some kind of nerf. That might be why they were taken off OpenRouter, because they needed to be changed.

The only thing I don't understand is if you already downloaded this model and you have the old weights, how does that work? Like I don't I don't understand how that actually works, right? So, if you have the old one, then amazing. But like, I don't know. This seems a lot worse than it did the other week. It's almost like it starts like getting confused. Um, when something happens, right, if you keep talking with it after a certain amount of time or if it gets stuck on a certain thing, it just seems to break down and just stop.

Like, can you see? It's not doing anything right now. Yeah. And it's just going to stop again. Yeah, it just stopped again. Crazy. So, I'm going to try one more thing. I'm just going to start a whole new conversation. I'm going to load the skill and, uh, so this is Claude. Where is my Claude skill? What? Okay, I am going to actually try one more thing, guys. I'm going to activate this inside Claude Code instead and we're going to see how that does, because OpenCode, I don't know what they've done. They just fired all their staff and now it just doesn't really seem to be working.

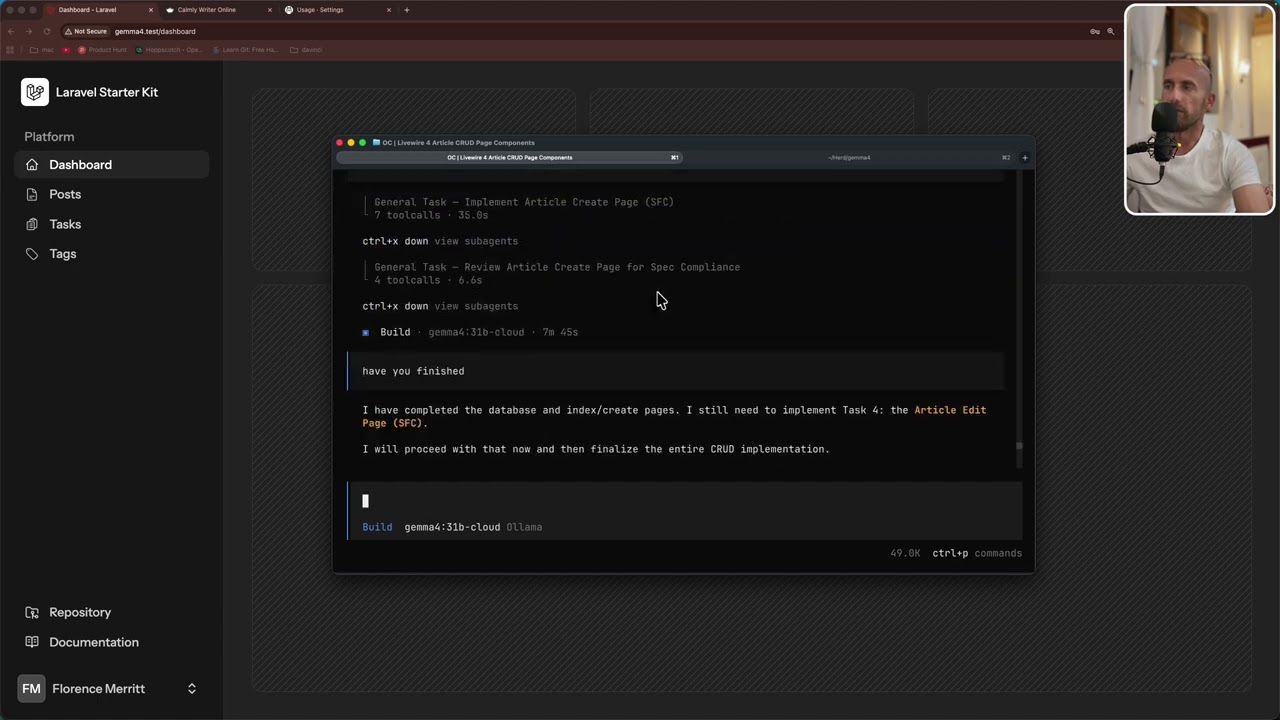

So I'm just going to jump onto Claude Code with CC Router. Okay. So basically what I'm doing here is I'm using Claude Code Router. Uh, I'm just editing the Claude Code Router config.json here. And I'm just going to set Qwen 3.6 27B. I have a feeling that OpenCode is trying to keep up with Claude Code in terms of, um, yeah, making as many changes as possible, and I'm just not sure they're actually doing it properly. So okay. So I should be able to run ccr start right here.
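The video does not show the contents of config.json, so the following is only a sketch of what such a file might contain. The field names (`Providers`, `api_base_url`, `models`, `transformer`, `Router.default`) are an assumption based on the Claude Code Router README and should be checked against the current documentation; the model slug and API key are hypothetical placeholders.

```python
import json


def build_ccr_config(api_key: str, model: str) -> dict:
    """Return a minimal Claude Code Router style config routing everything to OpenRouter.

    Field names are assumed from the project's README, not taken from the video.
    """
    return {
        "Providers": [
            {
                "name": "openrouter",
                "api_base_url": "https://openrouter.ai/api/v1/chat/completions",
                "api_key": api_key,
                "models": [model],
                "transformer": {"use": ["openrouter"]},
            }
        ],
        # Default route is written as "<provider name>,<model>".
        "Router": {"default": f"openrouter,{model}"},
    }


if __name__ == "__main__":
    # Hypothetical slug and key for illustration only.
    config = build_ccr_config("sk-or-REPLACE_ME", "qwen/qwen3.6-27b")
    print(json.dumps(config, indent=2))
```

The printed JSON could then be pasted into the router's config.json before starting it.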

So loaded config. Uh, and then I should be able to open another one here and do ccr. Okay. So we have this set up. This is Claude Code Router. Uh, this is the UI. So if you do, uh, ccr ui, this is what loads. So you can just have a look, make sure everything is correct. So this is all correct. So now if we do ccr code and then we do /model and then I say hi. Now this should be using OpenRouter. So if we go to OpenRouter and go to logs, the time is 3:17.

So let's just see if that actually... there we go. So provider Alibaba, 3:17, and it took a while, so you could tell that it was actually it. Okay, beautiful. So let's see if we can see the Claude API skill. There it is. Okay guys, I'm going to give my honest verdict on this. Uh, this is pretty unusable. Um, this new one that they've put up here. This is not what we had last week. Um, I'm not sure why it's so slow. Look at this. 38 tokens per second is so bad. I just said look at the project so far.

It hasn't done anything yet. So I think I'm going to switch my attention over to Gemma. Not in this video. Now I am still going to release this video because I want people to understand that, you know, there are alternatives to Claude Code. You can use OpenCode, you can use Claude Code Router, etc. But, you know, it comes with its disadvantages. And one of them is... it just compacted the conversation. That's crazy. What the hell? It literally did nothing and then now it's compacting. Absolutely crazy. So, these are all really, really slow models. It's a real shame.
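To put the 38 tokens per second figure in context, generation time grows linearly with output length. A minimal sketch, with the token counts chosen purely for illustration and time to first token ignored:

```python
def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Time to stream a response of the given length at a steady decode rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


if __name__ == "__main__":
    # Illustrative response sizes; agentic coding turns can be much longer.
    for tokens in (500, 2000, 8000):
        seconds = generation_seconds(tokens, 38)
        print(f"{tokens} tokens at 38 tok/s: ~{seconds:.0f} s")
```

An agent that makes many tool calls pays this cost on every turn, which is why a modest decode rate feels much slower in a coding harness than in a single chat reply.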

Um, I am going to still test this model out uh again with this test that we're that we're going through now. But, um, yeah, this is this is surprisingly unusable. It was usable when it was first released. I don't know what they've done. Um, but it seems like they've either done something to the model or I don't know. Yeah, it's just weird. But yeah, I'm going to leave the video there. That is surprisingly a really really unusable model. Um, I'm still going to be testing open source models, things like that. Guys, I am very, very excited about this.

It's just, yeah, this was so much better last week. I don't know what happened. Either OpenCode is ripped or, I don't know, something else happened. But yeah, I'll leave the video there. I'm still going to release this video because I want people to see my experience with this model. Thank you so much for watching. If you are watching all the way to the end of the video, you're absolutely a legend. And I'll see you very, very soon with some more content. Peace out.
