I HAVE A PODCAST NOW


Hosts discuss how to start a podcast, casual format choices, and setting the vibe for TBPN (The Theo Ben Podcast Network) before diving into topics.

Theo and Ben riff through the chaos around Anthropic, Claude Code, and open-source coding agents, with sharp takes on missteps, policy chaos, and better harnesses like Pi and Codex.

Summary

Theo kicks off a candid, yarn-filled episode about launching a podcast and the post-launch chaos around Anthropic’s Claude Code and the broader AI tooling landscape. Theo and Ben dissect rate-limit madness, the source-code leak saga, and the questionable publishing processes that let leaks slip out. They connect how poor CI/CD practices and clueless comms fed a vicious cycle of bugs, DMCA drama, and public backlash. The duo contrasts Anthropic’s turbulence with OpenAI’s steadier, more transparent approach, while praising OpenAI’s responsiveness and the open-source ecosystem that has grown around the Claude Code leak. The conversation pivots to tooling philosophies: Pi’s minimal, extensible approach versus Claude Code’s heavy, token-hungry design, and why lean tooling often beats feature-rich hubs for real-world coding loops. They also explore practical workarounds, praising Code Rabbit for code reviews, and highlighting the value of open-source runners like Pi and Clerk’s signer experience. Interlaced are personal anecdotes and a peek at how subscriptions, subsidies, and token economics shape developer choices. The episode blends hot takes with nerdy tutorial vibes, inviting listeners to rethink which harnesses actually improve coder velocity. Finally, Theo teases future topics, local-use policies, and the messy, fascinating path forward for coding agents in a competitive landscape.

Key Takeaways

- Claude Code subsidies can distort usage: the $200/month plan effectively subsidized up to $5,000/month in inference for some users, creating a skewed incentive structure.

- Anthropic’s publishing pipeline lacked CI/CD safeguards, allowing a local dev build with source maps to creep into npm and leak, which exposed fragile build hygiene.

- Claude Code’s rate limits and cache behavior are central to user costs; Anthropic bills cache writes as well as cache reads (both priced per million tokens), a major pain point compared with platforms that only charge for cache reads.

- OpenAI’s ecosystem, including easier token economics, broader tooling, and more transparent comms, currently edges out Anthropic in developer experience and market sentiment.

- Pi is the hosts’ example that minimal, extensible tooling can outperform heavyweight harnesses for coding agents, thanks to its clean extension system and strong grounding capabilities.

- Code Rabbit, Expo, and Clerk offer practical, proven tooling and onboarding advantages that improve developer experience and code quality in real-world workflows.

- The Open Source vs Closed Source tension in code-execution agents remains unresolved; the best path forward lies in more openness, better tooling integration, and fairer subsidy models.

Who Is This For?

Essential viewing for developers and AI researchers who work with Claude Code or any other coding agent harness. If you’re evaluating OpenAI versus Anthropic ecosystems, or exploring Pi, Codex, and other open-source options, this episode helps you separate hype from practical tooling decisions.

Notable Quotes

"There is no world where they want you to build an agent on top of it because it is not theirs. and it is not controlled by them."

—Theo explains the tension around Anthropic’s agent SDK and the control they exert over tooling ecosystems.

"The only world that benefits at all from this is our friends over at OpenAI. It is. They have just handed them layup after layup."

—A pointed comparison of how Anthropic’s moves have benefited OpenAI strategically.

"Less tools is better. This has been figured out for quite a while. Pi is minimal but incredibly powerful because you can hijack extensions and tailor it to your needs."

—Discussion of tool design philosophy, praising Pi for minimalism and extensibility.

"Code Rabbit is an incredible code review platform that deeply understands your codebase."

—Advocacy for Code Rabbit as a practical, well-integrated tooling option.

"More tokens is better. More tokens, more better. That’s how this works in Anthropics world."

—Humorous take on token economics and model context philosophies.

Questions This Video Answers

- What caused the Claude Code source leak and what went wrong in Anthropic's publishing process?

- How do rate limits and caching work for Claude Code compared to OpenAI's models?

- Why is Pi considered a superior coding agent harness for some developers?

- What are the pros and cons of Claude Code versus leaner harnesses like Pi for local development?

- How has OpenAI benefited from Anthropic's missteps in the AI tooling race?

Anthropic Claude Code, Codex, GitHub DMCA, Rate limits, CI/CD for AI tooling, OpenAI, OpenClaw, Pi coding agent, Code Rabbit, Clerk O providers

Full Transcript

How are you supposed to start a podcast? I mean, all the like super official ones, they always open on like a question and then the guest dives right in. I'm pretty sure they open with like cheesy music and [ __ ] but none of the good ones do. There are good podcasts. I mean, the ones that at least look good and get a lot of listeners. Do they have a folding table and handhold their mics? No. Then why are we replicating them? Cuz we're better than them. We need to take what they do right and do it better.

Alla, the folding table. That's the whole point. I think this is an intro. Is it? Sure. All right. Okay, so on this week's episode of the Theo Ben Podcast Network, we are going to be talking about um we're going to be talking about Anthropic Week. I am sorry y'all. Believe me, I don't want to still be talking about Anthropic, but uh I I was going to say Anthropic seems to disagree, but they seem to not want me to talk about them either. They should probably stop being [ __ ] then. The source code leak was fun. I hope everybody listening has heard about that already.

Yeah, that was great. I'm very very pleased with the shitty Codex fork I did of it because as soon as that leaked it was like what 4 in the morning or some [ __ ] like that. I just immediately downloaded it, set up a GPT 5.4 extra high run, let it go for 4ish hours and it got me a fully working version of Claude Code and I haven't touched it once. I've not used it for any real work cuz it sucks. But I got the screenshot. It was funny. It was very funny. God, you were not kidding. How do you [ __ ] It's amazing that this mic has such good characteristics when handheld despite being entirely impossible to hold in your hand.

Yeah. I think the best strat is to like between the fingers like claw. Oh. Oh yeah. I guess I could flip. Yeah, that's pretty. Yeah, but then how the [ __ ] am I going to touch my computer? Just talk to it. Wispr Flow's not a sponsor yet. Don't talk to it. Well, they can't all tab, so they can't be a sponsor. Like I can't do Well, I guess I can do that and then they'll figure it out everything. I hope they do. I really need them to computer use me an app. How long was it?

How far into this recording are we? How long did it take for the first dad joke to come out? That was a Rich Harris joke. It's still a dad joke. I miss having a chat to appeal to. It's so nice to be able to just be like, "Yo, chat, am I right or am I right?" I think chat would be on my side more often. I I I would I think I could win them over. You're not wrong. Anyways, welcome to whatever the [ __ ] we are doing. I wanted to do a podcast, I guess, for a bit.

Never been a podcast person, but uh you know, the Theo Ben Podcast Network needed to start and uh I've heard that TBPN isn't taken, so hopefully we can go with that. Oh, just got news that it is taken actually. And uh well, it's taken by OpenAI. Actually, that's unfortunate. It's a good thing we're not paid by OpenAI. Okay, so we're officially calling this the Codex podcast. The TVPNX podcast. Well, okay. Serious for a moment. I wanted a place to talk about things like general things rather than just the things that I could clickbait into a title and a thumbnail.

things that are interesting and fun and also just kind of replicate the conversations that I have with Ben here offline because I've had many a conversation where I've broken this poor kid's brain and I figured it'd be better if we recorded them instead of just doing them privately. So, uh, I spent way too much money and kicked out my roommate to get us a spare studio. And here we are staring at three cameras at 11:00 p.m. in my apartment pretending that we're real podcasters. And I'm trying to figure out how to hold an SM7B and I still can't figure it out.

Just watch some episodes of Safety Third. They figured it out pretty okay. Yeah, I watched I didn't like any of the ways they were doing it. It's I don't think they do either to be fair, which maybe is the point, probably. I'll hit up Will in the future. Sounds good. Two main things we plan to do in this show are talk about things that are just generally like the news of the week type stuff. the things we cover in other videos, the things we wanted to talk about more and couldn't, and the things that we want to get each other's opinions on and maybe give each other a little bit of [ __ ] about.

And the other thing we want to do is nerd snipe each other a bit because I already do this a lot off stream, and I know Ben's been holding on to some fun nerd snipes for me for a bit now. I have been. I see some notes on his computer that have me kind of scared. It's going to be very, very entertaining. Hopefully, someone's entertained. Welcome to whatever the [ __ ] we decide to name this. Hopefully this isn't a mistake. So, speaking of mistakes, shall we talk about Anthropic? How many mistakes did they make this week?

Uh, well, we had four videos recorded for it. There probably should be a fifth, so at least four or five. There was the Claude Code leak. There was the subscription debacle. There was the horrific comms around all of it. A bunch of people making dumb posts about why it's really good that they made the changes they did. Um, is there any I'm missing? Clarification on the terms of service around using the models and using the subscription outside of their harnesses. That was then immediately retconned because I decided to post about it. That was great. That was very fun.

I legitimately, like, I know this is somewhat conceited to say, but I'm legitimately starting to feel as though some of the bad things Anthropic is doing are purely because the alternative is accepting I was right. And Anthropic would rather burn themselves to the ground than admit a single [ __ ] time that Theo was correct. They hate admitting they're wrong. And they also I don't know. I half agree. I also just feel like there is a massive amount of incompetency happening here. Like I feel like they just do not have any idea what they're actually doing with any of this and things are just falling apart in very very stupid ways.

Like the fact that DMCAs went out to every single solitary person who forked the normal Claude Code repo, not the leaked one. We should probably go from the top because people might be confused about what was being forked and why DMCAs were being sent out. But on the comms thing before we do that, I think their comms strategy was built before they had lost the positive sentiment. Like they built their way of interacting around the assumption that everybody just liked them. And even from the employees I've talked to, that's just kind of how they worked.

And many of them are still in this delusion that everybody likes Anthropic and that anything that is anti-Anthropic is some paid propaganda or some temporary thing that will vanish in time. And that is just not the case. And I think that comms strategy is going to burn them to the [ __ ] ground. Yeah. Cuz I mean if you look back at the last what year and a half, which feels like an eternity at this point. But if you go back 9 months ago, Anthropic was so far in the lead in the developer world, everyone loved Claude Code, everyone loved the Max plan, everyone loved the Claude models.

OpenAI was not a serious contender for any dev mind share at that point cuz Anthropic got there first. And it took OpenAI a long time to catch up. It took until I mean I would argue they probably caught up with 5.2 Codex but no one really caught on until Januaryish. That's when the sentiment really really swung. I don't think that Anthropic has ever had to play against real competition before. And then it was horrible timing where OpenAI is being super chill and easy to work with and really pleasant. We get Codex rate limits reset every what week at least.

Yep. OpenAI's strategy was clearly built around being perceived as the like harmful player, I guess. Like they went into all of their comms and their way of doing things, and you can tell that they are scared of being seen negatively. So, they're trying to overcorrect, which is almost never a bad strategy. I'm going to give an example that I probably shouldn't, but [ __ ] it. That's what the podcast is for. Yeah, I obviously have friends at both labs. One of those friends is somebody at OpenAI that is concerned about the optics of OpenAI. I sent them a tweet from somebody replying to me something along the lines of, "Oh, clearly Theo is paid by OpenAI.

That's why he's so anti-Anthropic." And somebody else replied, a really good reply along the lines of, "Every lab kind of sucks and does terrible things. OpenAI does fewer of them and owns them and tries their best to fix them when they're given feedback. It's not Theo's fault the other labs don't do that. That's why he has to crash out all the time." I sent this post to my friend at OpenAI, to which they responded, "Do you want me to go in and clarify that we're not paying you?" That's not why I sent it to this friend.

I sent it to this friend with the message, like, "I feel the sentiment shift happening. I just wanted you to see this. I think it's cool that finally people are noticing that OpenAI is for better or worse the only lab that's listening to the feedback." And the response from this person wasn't, "Oh, that's awesome." It was, "How can I help you prevent disastrous comms?" because they're just all in that mindset still. And as grating as that surely is for them, it has prevented all of the disasters that Anthropic is currently experiencing. Yep. Yeah. We have come a very, very long way since the GPT video last August where the entire Yeah, that was a rough couple days.

Um, we were both on vacation when that happened. That sucked. But um yeah, we've come a long way and genuinely it feels like OpenAI is trying their damnedest to be the good guy here. I do think that there are a lot of other labs who are trying really hard to be nice and easy to work with. Like all the Chinese labs, yeah, they're great. They are super pleasant to work with. They're trying really, really hard. We're just in such a brutal state right now where unfortunately at the frontier, which is kind of the only thing that matters.

That's a spicy take, I know, but I don't care about open-weight models all that much cuz they're not that useful compared to the frontier of 5.4 and Opus. So, we don't see that much about them, but they are trying. I just wish they were like 20% better, because every interaction I've had with Moonshot, every interaction I've had with Z.ai, and every interaction that I've had with the MiniMax team, yeah, they're awesome. I've had nothing but great experiences chatting with all of them. I've done calls with a handful of them. They care a lot and like Yeah, sure.

Some of this is definitely propaganda that is being fed into our DMs, but being pleasant to work with is a very underrated benefit. I said this in the stupid show we did with Jaden a few days ago, but like yeah, everybody thinks that I'm nice to OpenAI cuz I'm a paid shill or whatever. Reality is much more embarrassing. I'm nice to them cuz they're nice to me and they're nice to work with. Like it's just crazy how simple it is to be decent and how few companies and labs are willing to do that. On that note, we should probably go through the actual series of events that occurred over the last week that led to us being here.

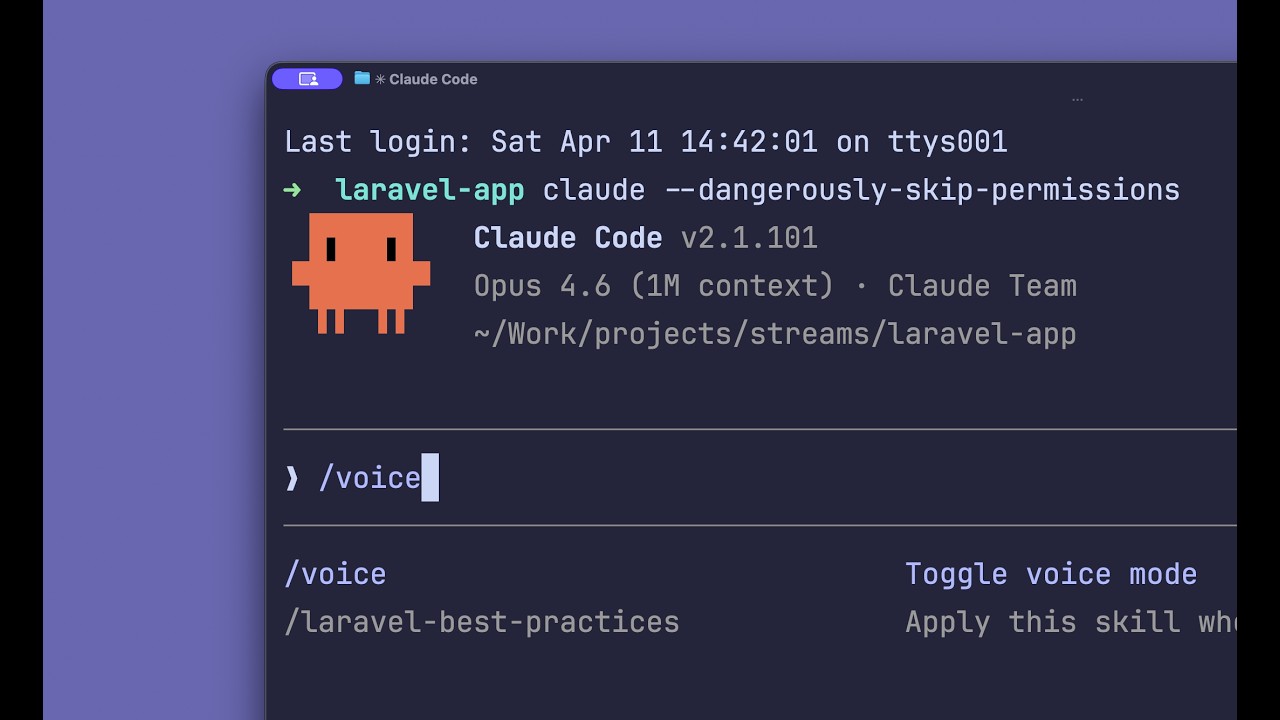

Yeah. This week started with a rate limit crunch. A lot of people started noticing that their rate limits were not going quite as far as they were used to when they took advantage of the subscriptions they had with the Claude models. That blew up fast. What appears to have happened is that Anthropic chose to reduce how far your rate limits go on the subscription plans during peak hours, between 7 and 11:00 a.m. Pacific time, when there's a lot of people working across the United States. And I can totally understand why they would do that.

What I don't understand is how they would announce this the same day they made the change, at 12:46, 2 hours after the rate limit change stopped being applicable. So, a bunch of users just couldn't really use their subscriptions that day. And if they weren't following Thariq on Twitter, they wouldn't even know why. And they probably have to rely on Reddit and whatever other sources because Anthropic does not post about things they're doing wrong on any official account ever. If you want to see what's going on and why you're getting less, you have to go to people like Thariq.

And if you want to see why you're getting more or what new features are cool and coming, they'll surely post that on the Claude account. But you as a user cannot stay informed unless you're following Thariq and you're following people like Ben and me. It's absurd that that is the case, that if you want to know what's going on with your Claude Code plan, it's much better to follow me than the official Claude account, but that's their fault. And this is one of the many things feeding into their insane, consistent, toxic cycle of not really communicating with their users.

Well, and what's even worse is watching that whole thing unfold, it at least publicly looks like they didn't even really know what happened. Like some like they did not understand why the rate limits were getting hit so bad cuz it was really really bad. There were a lot of people who were doing two prompts and then their whole usage was taking off. That's issue number three. We're only on issue number one. Fair. Issue number one was the rate limit change. Issue number two was the or actually was that two or three? I think that was two.

The order of events I think was the rate limit changes, the rate limit bug, then the source code leak, then the what happened after that? Uh so the source code leak, then they posted their updates to the rate limit stuff. It was poor Lydia that had to be the one to break that news because Thariq was too depressed to keep sharing negative things. Yeah, it was a brutal one. Yep. Yep. Poor thing. And then people realizing that those rate limit collapses were going to stay. And after all of that, there was the fun announcement, less than 24 hours before they would make the change: every person who used Claude Code in a harness that wasn't Claude Code got an email saying that within, I think it was, 18 hours from that email, they would be cut off from that.

And if they're using something like OpenClaw with their subscription, it would just stop working, which is very funny for me because I'm not necessarily a big OpenClaw user, but I do have it set up. It was actually still called Clawdbot on my machine because I set it up like launch week because I really like Steipete and I think he's awesome. I only use it for backing up random media. Like when I have a YouTube video I want to save so I can access it later or put it on my NAS, I'll just send it over Telegram and get it downloaded.

That was pretty much the only thing I use my Claude Code sub for other than front-end work. And now it just kind of feels useless. And I was way under the usage. Like I was using 10% of what I was allocated in any given four or five hour window. But now I'm probably going to cancel that $200 a month sub both out of principle cuz they're [ __ ] [ __ ] but also because I'm just not really using it. It would be cheaper for me to pay API prices for the three things that I post unless one of those front-end loops goes slightly too long and then suddenly I'm paying $600 for some shitty CSS.

Yeah. Or if you accidentally do the /fast command thing and then it takes six grand to do anything. Well, since there's like 15 confirmations and a bunch of weird UI noise when you do that, I hope, fingers crossed, it's a little hard to do that. But if you happen to mention ultrathink in your prompt, then you'll certainly be paying the price. Oh, I forgot about that one. Yep. Yeah. Yeah. So, thing one was the rate limit change that they intentionally did. Thing two was some skepticism on Reddit and other places that there might be a bug in Claude Code causing the cache to not be hit. Here's a fun fact, if you don't know this, about how inference works.

When you send tokens to a model to respond to and it has to generate the next set of things that it is responding with, it needs to process all of the text before it to get to the right spot. So like effectively all of the parameters and things in the model are adjusted based on the previous history to make the next token more likely to be correct. Doing all of the computations to get to that point is not cheap. It is certainly not free. So a thing that a lot of the labs do is effectively snapshot when you're at a certain point so that you don't have to recalculate for the 15 messages prior.

You can just recalculate from the message you're on down and then you don't have to run everything in the history and it's a lot cheaper to serve. But caching it does cost something and Anthropic is one of the labs that is the most proud to charge you for the caching. And also it is very easy to break this because if anything changes in that message history, the math has to change entirely. So say you have like a system prompt that has a timestamp in it and the timestamp changes when you do a new generation.

Now everything in the history is different because of the top being different and now you lost the whole cache and you have to eat the cost accordingly. The rumor on Reddit was that Anthropic had accidentally made a change to Claude Code causing the cache to get nuked, thereby causing you to use way more usage. But it's seeming more and more like what people were upset with was the actual genuine rate limit changes that they didn't think would be as bad as they were, because Anthropic did not buy enough GPUs and now we all have to pay for it.
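
The timestamp example can be made concrete with a toy model. The sketch below is not how any lab actually implements prompt caching; it assumes a hypothetical `PrefixCache` that keys each message prefix by a running hash, which is enough to show why a change at the top of the history invalidates everything after it.

```python
import hashlib


def prefix_keys(messages):
    """Return one key per message prefix: a hash of everything up to and including that message."""
    keys = []
    running = hashlib.sha256()
    for message in messages:
        running.update(message.encode("utf-8"))
        running.update(b"\x00")  # separator so message boundaries affect the hash
        keys.append(running.copy().hexdigest())
    return keys


class PrefixCache:
    """Toy stand-in for a provider-side prompt cache (hypothetical, not a real API)."""

    def __init__(self):
        self.seen = set()

    def process(self, messages):
        """Return (messages served from cache, messages that must be recomputed)."""
        keys = prefix_keys(messages)
        cached = 0
        for key in keys:
            if key not in self.seen:
                break  # the first miss ends the reusable prefix
            cached += 1
        self.seen.update(keys)
        return cached, len(messages) - cached


cache = PrefixCache()
history = ["system: you are a coding agent", "user: fix the bug", "assistant: done"]

first = cache.process(history)                          # nothing cached yet
second = cache.process(history + ["user: add tests"])   # only the new message is recomputed
# Same conversation, but the system prompt now carries a fresh timestamp.
stamped = ["system: you are a coding agent (12:01)"] + history[1:] + ["user: add tests"]
third = cache.process(stamped)                          # the whole history is recomputed

print(first, second, third)
```

Because each key depends on every message before it, editing the first message changes every later key, which is the "you lost the whole cache" failure described above.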

So for Opus, you are at $5 in and $25 out per million input and output tokens. Then for cache reads, it's 50 cents per million read, and then $6.25 for cache writes. That's the killer. $6.25 for cache writes. And it's also worth noting that I'm pretty sure, I could be wrong here, I'm pretty sure the only lab that charges for cache writes is Anthropic. It is. I'm looking at the GPT 5.4 one and it is 25 cents per million input tokens read from cache and that's it. There's no cost for writing to the cache. It just kind of happens.
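
To see why the cache-write price is "the killer", here is a rough cost sketch using only the Opus figures quoted above. The billing model is a simplifying assumption, not Anthropic's documented behavior: on a cache hit the history is billed at the read rate and only the new tokens at the write rate, while on a miss the entire prompt is billed at the write rate. The token counts and the `turn_cost` helper are made up for illustration.

```python
# Per-million-token prices quoted in the episode for Opus (USD).
# "input" is listed for reference; this simplified model never bills at that rate.
OPUS_PRICES = {
    "input": 5.00,
    "output": 25.00,
    "cache_read": 0.50,
    "cache_write": 6.25,
}


def turn_cost(history_tokens, new_tokens, output_tokens, cache_hit, prices=OPUS_PRICES):
    """Estimate the cost of one turn under the simplified billing model described above."""
    per_token = {name: usd / 1_000_000 for name, usd in prices.items()}
    if cache_hit:
        # History is read from cache; only the new tokens are written to it.
        prompt = (history_tokens * per_token["cache_read"]
                  + new_tokens * per_token["cache_write"])
    else:
        # Cache was invalidated: the whole prompt is processed and written again.
        prompt = (history_tokens + new_tokens) * per_token["cache_write"]
    return prompt + output_tokens * per_token["output"]


# Hypothetical turn: 100k tokens of history, 1k new input tokens, 2k output tokens.
hit = turn_cost(100_000, 1_000, 2_000, cache_hit=True)
miss = turn_cost(100_000, 1_000, 2_000, cache_hit=False)

print(f"cache hit:  ${hit:.5f}")
print(f"cache miss: ${miss:.5f}")
print(f"a miss costs {miss / hit:.1f}x a hit")
```

Under these assumptions a single cache bust makes the turn several times more expensive, which is why a subscription quota that is metered the same way would drain much faster when the cache keeps missing.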

Yep. And I believe OpenAI does automatic caching now when you send messages. And I know Gemini does cuz Gemini's caching used to be utter [ __ ] garbage and then our friend uh Logan flipped a [ __ ] about it and they fixed it. Not even 20 minutes in and I'm already realizing the difference between this podcast and all of the others that exist. We actually [ __ ] use these things. We do a lot. Way too much to you could argue, but yes, we do. Yeah. I This is going to be fun. This is going to be very, very fun.

There's a lot that we're going to get to. Speaking of things that we're using, you want to talk about the source code leak? Oh boy, that was a fun one. Hey, I'm not using the source code leak. I am fully not to be held liable. There is no code. You can see most of my code cuz my shit's open source. There are no lines of code shared, despite the fact that in said source code leak, if you happen to search for something like OpenCode in it, you'll find multiple comments referencing the things that they are getting ideas from. Not just ideas: copy-pasted snippets from OpenCode. Just realized both of my background lights are dead. Uh, that's fine. I'll solve that problem later. It is what it is. We don't need them. But can you tell that I threw this together? Totally didn't take 10 hours of my day. I spent four of them in OBS and then I gave up, and I've been working on this since like 11 a.m.

and it's 11 p.m. I took a brief break to bring in groceries, but that's about it. Yeah. Anyways, we should probably go over the source code leak, like how it happened and why these things happen and why they're a big deal. Yep. So, one fun historical fact is that when Claude Code first came out, it was within their first four or five releases on npm, if I recall, that they first accidentally leaked the source code. To go all the way back to launch, when Boris first started talking about Claude Code, he did an interview with their DevRel on their weird YouTube channel at the time.

He said one of my favorite quotes that I will use to haunt him for the rest of his and Anthropic's existence. We thought it was our secret sauce and we weren't sure if we wanted to put it out there like everyone at Anthropic is using this. What if this gives our competitors an advantage? What's really funny here is that they gave their competitors an advantage by doing the shitty things that they continue to do by treating it like this magic secret sauce. I know and honestly they gave their competitors an advantage because no one copied it.

I've read a lot of that source code. It is an advantage that no one has been forking it and copying it and building on top of it. It is rough to say the least. They should have went the Google Kubernetes strategy where they open sourced it and made it look like a good standard just to force all of the competition to make really shitty stuff and never be able to compete meaningfully. It worked great for Kubernetes. I'm sure it would have worked well for Claude Code too. It would have. It had such an insane first move.

Is MCP one of those? MC Okay. at the time. At the time I it's gotten better, especially since the Linux Foundation stuff happened. They're taking it in a decent direction. It has a lot of problems, but I will we I'm sure we'll have a section where we I defend MCP and you get mad at some point. Absolutely, we will. It'll be great. So, back to history lesson. Anthropic kind of sees themselves as I anything I say about how they see themselves will be flipped on me as though I'm the bad guy. So I won't go any further.

They see themselves in a way that is special and as such they seem to have believed that the Claude Code source, and Claude Code as a whole, was this magical thing that they needed to reserve and hold for themselves in some way, which is really silly, like absurdly silly. And as such they refused to release the source code. There were already three separate open-source terminal-based AI coding tools at the time. Aider was the one I was most familiar with. There were a few others as well. And Claude Code was the first that was made by one of the labs.

But it's also worth noting that when Claude Code dropped, you had to use API access for it. You could not do a subscription for the first four or so months. You could not subscribe to use Claude Code. You had to have an API account as an enterprise and use that because Claude Code was doing better than expected and Anthropic didn't really know how to build product. If I recall, they were still contracting out the claude.ai site at the time. Are they still doing that? Has that been fixed? I don't know. I don't think the site's been fixed.

I think the desktop app is now managed internally, which I think is worse, not better for them. I've touched it twice and neither time was good. Yeah. So, because of that, my assumption was that both Boris and Cat weren't being invested in properly internally. They knew they could do something bigger with this. They wanted to do something bigger with this. They got acquired by Cursor. And when that happened, and this is where I really start getting into speculative territory. I have no inside information here. This is just my interpretation of the absurd events that I watched unfold at the time.

They were only at Cursor for 2 or 3 weeks and then went back to Anthropic. And in that window, a very interesting thing happened. The subscriptions were introduced where you could pay a hundred or $200 a month to get a very generous amount of Claude Code usage in the terminal app. And it would just not have to bill your API. It was a thing that you could do on a personal account. You would pay one or 200 bucks for the Max 5x or 20x, whatever they call it, and then you could use Claude Code without having to pay the absurd API prices.

It was found that they were actually subsidizing inference through this by quite a bit, as much as like 5 to 10x. That has since gone up to 20x plus, but at the time it was still absurd, and this caused Claude Code usage to spike massively. So now imagine that you're Boris and Cat, who made this thing, cared a lot about this thing, I would argue cared way too much about this thing. You moved to a different company because you didn't feel like Anthropic cared enough to invest in you and let you do this thing. The optics for Anthropic were terrible.

They wanted to make Claude Code happen. So, they decided that they would spend what was effectively marketing money to subsidize tokens, get a bunch of people on Claude Code, and brute force Boris and Cat to come back because nobody wants to see their baby suddenly sprout wings and fly weeks after they left because the company finally decided to invest in it. So, Boris and Cat went back and the rest is utter [ __ ] chaos. My hot my hottest take here and we'll get to this in a bit with the rate limit stuff is that they never should have done the subscriptions in the first place or at the very least not subsidized them the way that they did.

The only reason they did that was in order to get Boris and Cat back, and every optics disaster that's happened since stems directly from that mistake. Yep. Everything from the shutting off OpenCode, what, two months ago, to what's happening now and all of the rate limit dramas of it going up and down and people being worried that, oh, are they shadow reducing my rate limits now, and this weird opaque box that people can make conspiracy theories about. They [ __ ] themselves. And honestly, I think that they kind of [ __ ] the industry in a weird way because now all of the different agent platforms, all the labs, even like the Cursors and OpenCodes of the world feel compelled to make their own subs to try and compete with this, because it's really really hard to compete with someone who is offering you $5,000 for $200 versus a guy who's offering you $200 for $200.

That math just doesn't work out. Yeah, it's pretty brutal. You know, there's a lot of things that Theo and I disagree about, but the one thing that we do agree on is that code review just kind of sucks. Especially these days when agents are putting up dozens of PRs every single day. It makes it really hard to keep on top of it, read every single line of code. It's just not feasible anymore. Unless you have something like today's sponsor, Code Rabbit. They are an incredible code review platform that deeply understands your codebase. And don't just take my word for it.

Take a look at this PR on the Expo repo where Expo was pretty hesitant to bring in an AI code reviewer. They didn't think it'd be that helpful or it would even really understand their codebase all that well. So, they tagged it in because anytime you're in a PR, you can just tag @coderabbitai and ask it literally anything and it will answer. And they asked it to analyze the codebase to figure out how tree shaking in Metro actually works. And the answer it gave them was so good that Expo is now a very happy Code Rabbit customer alongside 15,000 others including Clerk, Bun, Indeed, and so many others.

It's an incredible code review platform that's not just for your PRs on GitHub because they just introduced an incredible new CLI tool that makes it so that you can get Code Rabbit's really good reviews on your machine without having to push it up to GitHub. All you have to do to get your changes reviewed is type CR into the terminal. It'll do the review, which is incredibly helpful on its own, but this is even more helpful when you have agents working in your codebase. They have a Claude Code integration that makes it so that as your agent is making changes, once it's done, it can automatically trigger the review itself to find any issues that were made during those changes and get those addressed before it even goes up to GitHub.

You no longer have to push everything up, wait for the review to happen, grab the changes from GitHub, grab the review comments from GitHub, pull them back down. That whole process was kind of tedious. They're doing incredible work here to close that loop and make it so that code review is something that just naturally happens in the background so that by the time you do actually look at the PR on GitHub, it's already gone through multiple layers of review and is going to be of much much higher quality. And it's not just for Claude Code.

They have integrations with Cursor, Codex, Gemini, and the fact that it's just a CLI means that any coding agent can comfortably call it whenever they need to. You can make these incredible autonomous loops that are going to result in much better code coming out of these agents. And considering it's two clicks to install with a 14-day free trial, there is no reason not to try it at nerdnipe.link/code rabbit. Okay, back to source before I get distracted by the rate limit thing. So, that was all a while ago. Since then, the Claude Code team has expanded massively. Last I heard, it was over 80 people.

Might be even more at this point. It's a big team, lots of people on it doing lots of different things. Claude Code has blown up, as we've all seen. It's even giving people psychosis, even billionaires, which is hilarious. No comment. Claude Code is still closed source. It is on npm. You can install it other ways. It is bundled with Bun. It is a package of JavaScript. And this is an important detail because JavaScript is not a language that is compiled. It can be built, though, because we don't write JavaScript the way you run it. We usually write TypeScript and include all these other tools and things.

And when we do that, we have to have a bundler step that takes all of the things we imported, all the stuff we built, trims out all the things that aren't actually JavaScript, like our type definitions, our rules, and all those things we use to make sure our code is accurate, and then it minifies it and makes it this bundled up nonsense that is really hard to parse or change, but still works and runs in JavaScript runtimes. And then that is what sent to the user. Technically speaking, there is code that can kind of be read whenever you run any application written in JavaScript.

How hard that code is to read and reuse and do things with varies based on what tools they use to build it, how much they're trying to hide and obfuscate and all these other things. And Anthropic has tried very hard to hide those things. There is a problem with these strategies though. How do you deal with errors? When I work on code on my machine and I have an error, it will tell me in my terminal what line of code caused that error and I can go into my editor and see that code and make the change and fix it.

But once the code ships, since it doesn't have the same lines of code because everything's been flattened and messed up into this nonsense uglified thing, you can no longer trace back to where the error happened and get the original thing in your source code. So, how do we map from the thing that we bundled to the original source? We use a thing called a source map. Source maps are effectively the binding between your source code and the thing that gets sent to the user. so that when an error happens, you're able to use your source map, ideally on your own servers, to link between the error the user saw and the actual code.

So you can go make a fix and actually ship the thing. But there are some problems here. Specifically, if you accidentally include the source map in your package, you're effectively just handing out the source. This is something that is not too hard to do because when you publish a package to npm, it is effectively just taking the folder where your code is and where you have this build that you're planning on shipping and it grabs all of that and then ships it up. If you have any semblance of a decent process here, it is pretty easy to prevent any issues because you have just the source code on the server.
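To make the risk concrete, here is a minimal sketch of why a published source map can amount to handing out the source. It assumes the map uses the standard version 3 layout, where the optional `sourcesContent` array embeds each original file verbatim. The `recoverSources` helper and the sample map are hypothetical and only for illustration; they are not taken from any real package.

```typescript
// Shape of a version 3 source map, reduced to the fields used here.
interface SourceMapV3 {
  version: number;
  file?: string;
  sources: string[];
  sourcesContent?: (string | null)[];
  names: string[];
  mappings: string;
}

// Hypothetical helper: return every original file the map embeds verbatim.
function recoverSources(map: SourceMapV3): Map<string, string> {
  const recovered = new Map<string, string>();
  map.sources.forEach((path, i) => {
    const content = map.sourcesContent?.[i];
    if (typeof content === "string") recovered.set(path, content);
  });
  return recovered;
}

// Made-up map for illustration only.
const exampleMap: SourceMapV3 = {
  version: 3,
  file: "index.js",
  sources: ["src/greet.ts"],
  sourcesContent: ["export const greet = (name: string) => `hi ${name}`;\n"],
  names: [],
  mappings: "AAAA",
};

const recovered = recoverSources(exampleMap);
```

If a bundler is configured to embed `sourcesContent`, anyone who downloads the package can read the original files straight out of the `.map` file, no deobfuscation required.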

You have a build command that runs, puts all of the things in, and then ships it. Do you know actually, Ben, why this leak happened? Like what the actual issue was that caused this to go out like that. No idea. You didn't? I'm so excited we get to get your reaction to this on film. Um, they didn't have a CI step for publishing. Every single publication of Claude Code has been run on one of the team's machines since day one. What? Yeah. And different people every time. Okay, this makes a lot more sense. Continue.

How can we take a moment to appreciate that just just for context for people who aren't as in the dev world because I know for some reason podcasters don't code as much. It is what it is. When you want to put out code, usually the first step is you put it on GitHub and then your team reviews the code, accepts it, and then merges the code. And when you want to release it, you can trigger a release. Usually what that means is on GitHub or a platform like it, you have the code get downloaded on a server.

It runs all of your checks. It runs the build, makes sure everything is good, and then it sends it up to npm or wherever else so that users can use it. If you are less uh competent, you might just run it on your own machine. I'm not saying this because I'm trying to belittle them. I'm saying this because I'm trying to belittle myself. When I make a new package, the first time, on like the 0.0.1 release, I will usually publish it from my machine and then I try to put it in a GitHub Action so that it will run when I click a button on GitHub instead.
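One cheap way to enforce "publish only from CI" is a guard that refuses to run anywhere else. The sketch below is an assumption about how a team might do it, not anything described in the episode. It relies on the `CI` and `GITHUB_ACTIONS` environment variables that GitHub Actions sets to `true` on its runners; the `canPublish` name is hypothetical.

```typescript
// Hypothetical guard: allow a publish only when the environment looks like CI.
function canPublish(env: Record<string, string | undefined>): boolean {
  return env.CI === "true" || env.GITHUB_ACTIONS === "true";
}

// A developer laptop normally has neither variable set.
const onLaptop = canPublish({});

// A GitHub Actions runner sets both.
const onRunner = canPublish({ CI: "true", GITHUB_ACTIONS: "true" });
```

Wired into an npm `prepublishOnly` script, a check like this would exit non-zero when `canPublish(process.env)` is false, so a local `npm publish` fails before anything is uploaded.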

And when I do that, it usually breaks in all sorts of ways because actually getting things to publish on GitHub is obnoxious. And npm has changed how they do this, I think, three times in the last four years or so. Is somewhere around that. The current system is really not that bad. It's a pain in the ass to set up, but once it's set up, it works. Yeah. I actually have a really good agent that helps with this. His name's Julius. He's from Sweden. Oh, I love the Julius agent. A bit expensive monthly costwise, but very very efficient.

Also like weirdly like self-controlled, like I don't have to prompt it even, like Julius is able to just go and fix things. Mhm. It's almost like he's an employee. I know. So usually when I have problems with my npm publishing strategy, he'll come in and fix it. I've actually gotten a little better at fixing it myself. I even make changes to our current one. Very small but meaningful. Usually I just let him take it over. But I want to make sure it is clear that every single thing me and my team publish does go through an actual CI publication step because it's annoying.

But it's annoying in the sense that it takes half a day to a day of fighting it. It's not annoying in the sense that you have to fight it and maintain it and keep doing this forever. So why the [ __ ] does my threeperson team have this right across 20 plus surfaces that we have to cover and their 80 person team couldn't find one person 3 hours to go [ __ ] figure it out. Especially considering half of them used to work on things like React at Meta and know how to do this. They're just [ __ ] lazy because their agents aren't smart enough to do it and they're too lazy to open the terminal up themselves and set it up.

Yeah. And it's like, ah, now that you said that, I'm 99% sure what actually happened is, like, in the package.json you just define what folder gets published to npm, and they probably did like a dev build to test it on their machine and that dev build outputted source maps and those just ended up in that build directory on that local machine, which is gitignored, so it wouldn't be a problem if it was in CI, but since they did that test build or some random [ __ ] it just snuck in there. No, what happened is even dumber here. Really?

Yeah, absolutely. So I spent a lot of time in source maps through my career. I've had to do like weird crazy debugging at like big companies, small companies, everything between. You don't usually have a different distribution folder in your project for when you are testing your source maps versus when you're actually publishing. So what you're supposed to do every time you do a new build is nuke the dist folder. If there are conflicting files like the index.js, which is the thing you're actually shipping, then it will override those. But if you don't nuke the directory first and there's source maps in said directory, they don't get deleted.

So you have this folder, you did a build that included source maps at some point because you wanted to put them in Sentry or whatever. You didn't delete the folder, you do another build for the release process, it overrides the files that you actually release. It doesn't touch the files that are the source maps, but they're still there cuz there's no reason for them to have been deleted. And then it's published. This is very trivially solved by having an ephemeral system where it gets reset because it's running in a fresh server when you do it, which is how all of CI for all of history has worked.
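The stale-file failure described here is easy to reproduce. The sketch below simulates it in a throwaway temp directory using only Node's standard `fs`, `os`, and `path` modules. The `build`, `cleanBuild`, and `findSourceMaps` functions are hypothetical stand-ins (a real bundler would replace `build`), and the check only looks at the top level of the output folder.

```typescript
import { mkdtempSync, mkdirSync, writeFileSync, readdirSync, rmSync } from "node:fs";
import { tmpdir } from "node:os";
import { join } from "node:path";

// Stand-in for the real bundler: writes only the file that ships.
function build(distDir: string): void {
  mkdirSync(distDir, { recursive: true });
  writeFileSync(join(distDir, "index.js"), "console.log('bundled');\n");
}

// Nuke the output directory, then build into a fresh one.
function cleanBuild(distDir: string): void {
  rmSync(distDir, { recursive: true, force: true });
  build(distDir);
}

// List source maps at the top level of the folder that would be published.
function findSourceMaps(distDir: string): string[] {
  return readdirSync(distDir).filter((name) => name.endsWith(".map"));
}

// Demo: an earlier debug build left a map behind in dist.
const projectDir = mkdtempSync(join(tmpdir(), "stale-dist-"));
const distDir = join(projectDir, "dist");
build(distDir);
writeFileSync(join(distDir, "index.js.map"), "{}");

build(distDir); // release build without cleaning: the old map survives
const leakedWithoutClean = findSourceMaps(distDir);

cleanBuild(distDir); // release build after nuking dist: the map is gone
const leakedAfterClean = findSourceMaps(distDir);

rmSync(projectDir, { recursive: true, force: true });
```

A fresh CI checkout gets the effect of `cleanBuild` for free because the runner starts with no `dist` folder at all. Running `findSourceMaps` as a pre-publish gate and failing on a non-empty result would catch the same mistake on a local machine.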

But that requires using your brain, not an agent. Yeah. Yeah. Yeah. That is what I was trying to say, for the record. Cool. You get the idea. So Anthropic being bad at engineering resulted in the world getting to see their bad code. Yeah. And to be honest, like I don't even know if this is like bad engineering or just being lazy, because I guarantee you they are having the agent run the build step and it is so easy when you are deep in agent land and you are barely looking at the code, which is what naturally happens.

It is so damn easy to just have a dirty directory somewhere that you're just not paying attention to and it doesn't show up in any tools cuz again it's gitignored. Very easy to not overwrite that. Yep. Yep. And here we are. Subtle mistake. You'd think if they cared so much about their secret sauce, they would care enough to make a 20-line build script that does this properly. But, uh, they care about the optics, they don't care about the actual thing, which kind of seems to be Anthropic's whole thing. It feels like they're very quick to jump on things and make changes when they need to GPU crunch, when they want to free up GPUs for whatever the hell they're cooking.

When it comes to problems that we are experiencing as users, oh, we're working on it, but it's really hard. And then it keeps cycling. And this is where we transition into mistake number four, Anthropic's utter [ __ ] chaos, where they decided to announce that anybody using their subscriptions for anything other than Claude Code can go [ __ ] themselves just under 24 hours after this leak and under 24 hours before this would go in place. Yep. And yeah, I honestly I don't know. I don't know how they planned this and how they couldn't, like, how is there no one in the room who's like, hey guys, if we're going to like nuke all these things that a lot of people have entire systems built around.

What if we gave them like two weeks heads up, like even just like a grace week would have been really nice, but they didn't even do that and they announced it on Friday night after working hours. So they tried to do it at the most dead time possible. I have an idea. Let's do an exercise here. Let's embody our inner Anthropic. Oh, yes. All right. I'm going to be the researcher that is pissed off that we don't have GPUs. You're going to be the Claude Code comms person that on one hand doesn't want to get flamed on Twitter, but on the other hand is delusional enough that you think every single thing Anthropic does is out of the kindness and generosity of the heavens.

Yes, cool. It's the benevolent machine god that they're building to save us all. Hey Boris, we're out of GPUs again. How are we supposed to make the god model if we don't have GPUs? Sir, sir, you know what it is? It's those OpenClaw people. [ __ ] How could they do this? How could they steal our GPUs? So, here's what we're going to do. You're going to kick them all out and give me the GPUs. Done. Okay, we are assuming you care about the Twitter optics, remember? So, you're a little upset about this.

Remember, at Anthropic, the product people and the researchers kind of hate each other. So, let's reset. Okay. All right. All right, my bad. My bad. Two steps back. But sir, what about the Twitter followers? What about the optics? How is this going to look to all of our paying customers? They're paying us billions a year. Models are great. So, what if we just give them $100 of API credit if like we don't want them on the subscription plan? We'll just give them credit and then go do that and we'll get back to just taking their GPUs.

I think we can make that work, right? Like, who wouldn't want $100 of Anthropic credit? That's like $300 of gold. Like, that's a gift, right? We should be charging a thous going down is concerning. What we should do is relabel the models so that Opus is now Sonnet and then we can make something even bigger and more expensive. Oh, sorry. We weren't supposed to leak that part. No, no, no. Yeah, no watching, right? No, no, no. Okay. So, what actually happened here is they probably genuinely believed internally that the $20 to $200 of credit you get based on your subscription was enough to cover their [ __ ] and let them get away with this because they just so fundamentally don't understand the optics of somebody who doesn't work at Anthropic and isn't drunk on the Kool-Aid.

They just cannot see it. And I know this because I talk to these people. I have more friends at Anthropic than I do at OpenAI. But when I talk to an Anthropic friend about a thing that Anthropic is doing wrong, the response is them trying to convince me that Anthropic is holier than thou and I just don't understand their perspective. I'm sorry that I don't sympathize properly with a 300 to 800 billion dollar company, but maybe if they bought the right number of [ __ ] GPUs, none of this would be my problem. Yeah, it's unfortunate that I have to be the one out here talking [ __ ] but somebody has to hold them accountable.

And I'm thankful that their reputation has finally caught up to what I have been saying and I no longer get [ __ ] for explaining how shitty they are. Yeah, it sucks. Yeah, I really wish they weren't like this. Genuinely, there's some really special stuff in the Claude models. They are actually very nice to talk to and there's some really cool [ __ ] they're doing. We need more competition. I just wish that their heads were not so far up their ass. Speaking of which, let's talk about the most head up the most ass in my opinion about all of this.

Oh, yes. Yes. Yes. Boris was the poor guy who was stuck with the task of telling people publicly on Twitter that this change was happening because again, remember, Anthropic will never announce anything negative on their official socials with the orange logo. They will only ever do that over a very limited set of emails that sound kinder than they are and through the accounts of the people who work on the thing because the employees are stuck explaining this. Not even the employees who do comms, just random people on the team, and the official accounts are reserved for things that people like.

Yep. So, poor Boris was the one stuck with explaining this publicly to people. And there's another thing that a lot of us have an issue with with Claude. I personally am invested in this one. So, I'll go on this rant really quick. They subsidize the [ __ ] out of tokens when you use Claude Code subscriptions. With Claude Code, you spend up to 200 bucks a month and get up to $5,000 a month in inference. The problem with this is that if you're not using a subscription, you don't get subsidized. So if I'm just paying API prices, I would have to spend the $5,000 to get $5,000 of inference.
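The size of that subsidy is simple arithmetic. The sketch below just works through the two upper-bound figures quoted in the conversation ($200 per month for up to $5,000 per month of inference); the variable names are made up for illustration, and real usage will vary by user.

```typescript
// Upper-bound figures quoted in the episode, in USD per month.
const subscriptionPrice = 200;
const inferenceValue = 5000;

// Dollars of inference each subscription dollar buys at the upper bound.
const subsidyMultiple = inferenceValue / subscriptionPrice; // 25

// Extra monthly cost of the same usage at API prices.
const monthlyGap = inferenceValue - subscriptionPrice; // 4800
```

At the quoted ceiling, a subscriber gets 25 times what an API customer gets per dollar, which is the gap any third-party tool billed at API prices has to compete against.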

So a lot of people want to use those subscriptions in other tools, things like OpenCode or Pi or OpenClaw or any of these other things, but you can't because Anthropic only wants to subsidize their own tools. I will steelman this for a second and say that Claude Code being controlled fully by them means they can do a lot of optimizations, like they can be careful about how it does things in parallel. They can go out of their way to make the caching work better. They do all these things to reduce the cost to them when you use it.

But if you choose to not use their tool, you have to go spend significantly more money as your punishment for not using their lackluster harness. I believe in the official rankings using Opus with Claude Code, not only is it not in the top three harnesses for performance with Opus, it's not even in the top 10. It's currently in 12th place. If you use Opus with literally any other harness, it will write better code than Claude Code. And to be fair, probably some of the optimizations they've made here make the model perform worse and make it dumber.

That said, they have built their economics around the assumption they have a little more control in Claude Code. So steelmanning to the best of my ability, the amount of inference they give you is not sustainable in other places because they also suck at tracking these things. So they're not holding people to their limits properly. And instead of fixing this, they've decided to just kick anybody out that isn't using the official tool. To Boris's credit, he's also filed pull requests on things like OpenClaw in order to reduce the cache misses and make it less token hungry when using Claude models.

But that's far from enough, especially when they at the same time come out and ban Claude models through the Claude Code subscriptions from being used in tools like OpenClaw. But what if you decide to actually use Claude Code underneath? What if you have, let's say, an open-source user interface that is significantly faster, more performant, more featureful, way easier to use, open source, and just a much better experience. But since the creators of this thing, let's just call it a T3 Code. I don't know. It's actually T1 Code. Oh, that's the official version. How far do we get in before the first Maria mention?

46 minutes. Cool. Yeah, not bad. Not bad at all. It would be really nice if we could use a good harness in that situation. But if we want people to be able to use their expensive subs that get you even more expensive inference, we are kind of backed into a corner and have to just wrap this closed-source, poorly documented, often failing in obscure ways, super RAM-hungry harness that they gave us with Claude Code. And as such, we are now suffering. We have done our best and we have implemented Claude Code in T3 Code.

So if you have a subscription, we are as far as we know fully above board and good. But "as far as we know" is doing some heavy lifting. Well, it was. But one of the nice things, one of the very few nice things that came out of all of this and came from Boris being on firefighting duty is a reply he had to an Eric Baress. Eric asked, "Subs can still be used for personal local tools that use or wrap the Claude harness like Claude Code and Claude Code headless as well as Agent SDK, right?"

To which Boris replied, "Yep, working on improving clarity here to make it more explicit." This seems like a very very clear yes. You are now allowed officially, like relatively clearly, to use custom wrappers for Claude Code and Agent SDK with your subscriptions for local use at the very least. This seems pretty cut and dry. Totally fine. So I very excitedly took the screenshot and posted: one piece of good news, T3 Code is confirmed safe for Claude subs. We finally have explicit confirmation that tools wrapping Claude Code for local use are allowed. I made this post because I had been DM'd by multiple different people and also saw the post and was excited because there is effectively no reading you can possibly do of what Boris said here that can be interpreted in any way other than yes, you can use these things locally with wrappers like T3 Code.

And yet that's probably not going to be the case. Would you like to read Thariq's reply? Oh god. Let me pull it up. "To be clear, this is not guidance or an update on the Agent SDK. We're still working on clarity there." Which probably means that, well, actually, I want to go over to a Matt Pocock tweet because I think this one very nicely sums up the weird issue we're having on the "where is the line" with this thing, because using your Claude Code sub in Claude Code is fine. On the online platform, it's fine.

Using it in personal software is okay probably, but using it in commercial software is not okay. But what if that commercial software is open-source software that's free that you can just download on your machine and run? My personal guess here is if you're using it for like some super basic task that barely uses any tokens, they just won't notice and you'll be okay. But for anything that is an actual agent loop, like if you're using it to generate code, they're probably going to nuke it. That is my guess. I think we both agree that the best auth experience either of us have ever had is Clerk.

It is so damn easy to get it set up in literally any project. Next.js, React, even my Svelte nonsense works beautifully with Clerk. Getting a sign-in with GitHub button on there is just trivial to do. But they do so much more than that. Their dashboard is incredible. The components make life so much easier. The fact that you can just bring in their sign-in component, mount it on the page, and it just kind of works is frankly incredible. And that's not even talking about the fact that they actually have the best organization system of any auth provider I've tried.

There are a lot of other auth providers out there and none of them handle orgs the way Clerk does. It is so seamless. All you have to do is mount the org component and set in the dashboard that orgs are turned on. It is trivial to get a really great B2B experience set up from scratch. Stack on top of that the fact that Clerk Billing now exists. So all you have to do to get payment set up is turn it on, integrate the pricing table component into your app, and you have a full checkout experience hooked up with the user subscription now attached to their auth object,

which makes it trivial to actually gate different features and handle all of the annoying awful edge cases of billing. I honestly don't know how to put it other than Clerk just kind of gets it. Whenever I look at their SDKs, when you look at their raw JS SDK, it is trivial to implement in a normal HTML page with a script tag or you can put that in a Svelte component and easily mount that directly to the DOM. Or if you want to work in React, you can use the React components which are built on top of their raw JS components because the whole thing is beautifully, intelligently designed.

It is the auth provider that just works. I have fought every single auth provider except for Clerk. It is an incredible experience every single time and you will not regret using it at nerdnipe.link/clark. I want to qualify Matt here, who hopefully is in between us on the screen right now. Matt Pocock is one of the kindest people I've ever met in my life. I would put him next to like Kent C. Dodds in the dev world for just absurd levels of kindness. It was so much so that when we first started interacting, cuz we were both the TypeScript guys on YouTube.

There was a while where he didn't know how much he should interact with me cuz I am not the nicest guy on Twitter. He eventually came around when he realized that my intent was good and that I was just fighting for the things that we mutually cared about and mattered that trying to push the technologies we care about forward. But it took him a bit because again he cares so much about kindness and being a moral good acting human that the way I interact was scary to him. Like that's the type of nice guy he is.

He's the type of nice guy that is scared to talk to Theo publicly. Now we're good. I also had to be a bit less of a dick in general and I'm proud of the progress we've made here. So, with that qualification, I would like to emphasize how rare it is for Matt to do anything other than be really nice in his glazing of the things that he enjoys. I have almost never once in my life seen him say anything even vaguely negative, which is why when he first had questions about how we can wrap and use your Cloud Code subs, he was super kind and generous with it.

I don't care enough to find the tweet to put it there. What Matt said at the time was something along the lines of, "I am still confused as to whether or not I can do this. I'm working on a course that uses Claude Code. I want to wrap it with some things. Is this allowed or not?" To which Thariq replied, "Sorry, we haven't clarified this. We're working on it. We'll have an update soon." To which Matt replied, 3 weeks later, "Any ETA on this?" No response. It has now been another 3 weeks. We've now had even more drama.

We have now had this change that got sent to us over email and Boris's post. And then a reply that seemed like we were allowed to do this. And now we're getting more pushback that we might not be able to do this. And this has broken even poor Matt, the kindest, most thorough British TypeScript developer I've ever met in my life. Who, reminder, just put out a course two to three days ago that you should check out, by the way. He's a good friend. He deserves money. Not a paid endorsement in any way, shape, or form.

Just Matt deserves the money for the things he does because he makes really, really good educational materials. He built the whole [ __ ] thing around Claude Code. I don't know if you know that, but literally the entire course top to bottom does not work if you're not using Claude Code. Yep. It is probably going to sell millions of dollars of subs. Probably. And he still doesn't [ __ ] know if he's allowed to do it or not. Yep. There is a line in his post, "I have never before experienced from any developer tool such a frustrating lack of clarity over the basic terms of usage," cuz there is no clarity here.

Frankly, my personal guess is that they regret the Claude Agent SDK thing. I think that that is just going to bite them in the ass in a lot of ways. There is no world where they want you to build an agent on top of it because it is not theirs and it is not controlled by them. I assume what their intent with that was was to allow you to do like automations within a PR or something like that, like some one-off generations on your computer, like little cool ways you could hook into it without building a full competitor on top of it.

But now that this thing exists, where the [ __ ] is the line? What are you allowed to do with this? We'll see. I'm going to make yet another mistake. I have a feeling that this podcast is going to be full of these things I'm going to regret. I'm going to tell you about a text that I haven't even shown you yet that I got from an Anthropic employee that I will not say by name. Oh, we're going to get in so much trouble for this. I don't [ __ ] care. I'm pissed. I [ __ ] you not, a text I got earlier today from an Anthropic employee was, "I feel like you guys don't appreciate the complexity of the problem."

Effectively, that individual's response to Matt's post saying that he's never before experienced from any dev tool such a frustrating lack of clarity. We are the ones who don't understand the complexity of the problem here. Ben, did you know that? That we don't appreciate the complexity of Anthropic's hundreds of billions of dollars poorly spent on [ __ ] PR and worse harnesses. Well, to be honest, I feel like I kind of do understand what the complexity is. They just do not have enough compute to satisfy the demand. So, they're trying to figure out a way to not have the max plan used all that much.

The only place they want it to be used is in Claude Code, and they now have this gun pointed at them that they pointed at themselves called the Agent SDK that they now have to reckon with and they're going to have a lot of fun doing it. They seem totally okay with subsidizing themselves to hell and back as long as you were using their tool. Yes. But we need to be clear, the 5x and 20x Max plans for Claude Code from Anthropic are marketing spend. So if you are not using their tool, they are not interested in giving you that discount.

I've seen all sorts of funny conspiracies like the reason that they do this is because they want to collect all the data from the people on the sub and the data is not as good if you use other tools. Oh, that's a fun one. Yeah. And why would they ever allow you to use those other tools, that's just a free advantage to their biggest competitor, OpenAI. And if some startups have to die in the process, that's a sad side effect of what they're doing. Which is so funny because the literal only company that benefits at all from this, the literal only one is our friends over at OpenAI.

It is. They have just handed them layup after layup. Every time Anthropic does something shitty, OpenAI comes in to take the free sentiment up from the ground. They are buying sentiment at 50% off at the discount store that Anthropic keeps bringing in and selling for nothing apparently. No. OpenAI figured out a god tier strategy to win the AI race. Don't be [ __ ] Or even just sit there and do nothing. Just sit there and watch as your competitors shoot themselves. [ __ ] what? We've got Grok burning itself to the ground. Llama is on fire. Google is Google.

They have a bunch of geniuses siloed away who can't talk to each other so that we can get a really smart model that can't call tools. The Chinese labs are just naturally 6 to 12 months behind eternally. And then OpenAI is just sitting there watching them all fall apart and laughing as they rake in the free wins. What's funny is they're not actually laughing. Everyone you talk to at OpenAI, they're still stressed about this. They're still going hard, but yeah, it's just working. It is. It's a beautiful strategy. I can't believe we ended up here.

If you had asked me a year ago where we would be, this is not what I would have predicted. I really, like, I loved Anthropic stuff 6 months ago. Like I remember when you all were first getting suspicious of Anthropic, I kind of disagreed. Like I was a big Claude guy last fall. I have videos on my channel of me talking about how much I like, I think it was like Sonnet 4 at the time, something like that. I loved that model. It was so much fun. It would have been Sonnet 4 6 months ago probably.

But uh yeah, Sonnet 4, whatever it was, I had a really good time with it. It was a great model, but they just keep getting worse. I don't know. It sucks. We're going to lose the only real competitor OpenAI has, which sucks. I have enough faith in them and from everyone I know, I think that we should be okay. But I am genuinely scared of the day when OpenAI does not have any real competition and they can just do whatever they want. I don't want to live in that world. I want Anthropic to do well.

I am rooting for them. I don't like that they're doing this [ __ ]. Fun realization I just had. In the week where Anthropic made more mistakes than ever, [ __ ] more bugs than ever, and had more issues with the rate limiting than ever, Codex reset the rate limits twice. They did. And you can guess how many times Anthropic did. Yeah. Never. They never would. And like, I think this is also a weird thing too, which is how the models turned out from both of these companies. The GPT models are so much cheaper to run than the Anthropic models that they can get away with doing stuff like that.

They're cheaper, they're smarter, they can be reset, they have enough compute to do all of these things and they're actually pleasant to interact with. And the core of the things they built with the Codex app server, it's open source. The CLI with Codex is open source. The app isn't, but there's a better app called T3 Code. Check it out. It is open source and it is built on top of the app server, because again, all of the pieces we need to rebuild the Codex app are open source. Yeah, it's crazy. And I've personally had many conversations with the people working on the Codex app about open sourcing it, both publicly and privately.

They're cool to talk about it. They are legitimately thinking about it. They are taking feedback. They are listening. They are learning. Versus another company where when I explain things to them, they don't learn. They try to convince me I am wrong as the person explaining the optics. I can't be wrong about the optics. I'm describing what I'm [ __ ] seeing. Yes. And it's a weird game that, I don't know. It truly is the cult thing. Like, I genuinely just believe that it is the "they believe that they will save the world" type thing. And that rots your brain to such a horrific degree that anything becomes excusable.

Like once you get to the point where like the whole world dies if we lose, you will do anything you can to win. And they believe that this is what they have to do to win. And probably behind the scenes it might be. Like, I don't know what their financials are. I don't know what info they have, what they're looking at. Like, I remember that, um, it was the Dario interview on Dwarkesh, listening to him talk about the economics of scaling up Claude over the last couple years and into the future. Every single year they have to spend 10x more to get the next big model.

The last model recoups its money, but still, if you make a billion dollars off of your billion dollar model, but then you have to train a $10 billion model, you're kind of [ __ ], and he's very scared of that scaling up and making sure that they can keep up with the economics of all this stuff, and this is what's happening. So he declined to buy the compute. Meanwhile, when OpenAI is asked, "How much compute do you want?" they say all of it. Yes, literally all of it. They don't give a [ __ ] and they were right for it.

Have we exhausted this topic? I think we beat the dead horse. I'm sure there will be more in the future, but I don't know. In the panicked moment that Anthropic had during the Claude Code source leak, which, reminder, was caused entirely by them. This was not some malicious actor going in and stealing the code and publishing it. They distributed it themselves on npm. npm has some very strict policies around package publication and, most importantly, unpublication. Because if you publish a package and I publish a package that is building on top of yours, so you have some thing that's on version one and I use it in my thing that's version one, and then your thing goes away, people can't install my thing anymore cuz it relies on your thing.
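
[Editor's sketch, not from the episode: a minimal Python illustration of the dependency problem described above. The in-memory `registry`, the package names `your-thing` and `my-thing`, and the `resolve` helper are all hypothetical; this is not how npm itself is implemented.]

```python
class MissingPackageError(Exception):
    """Raised when a package, or one of its dependencies, is not in the registry."""


# Hypothetical registry: package name -> names of the packages it depends on.
registry = {
    "your-thing": [],
    "my-thing": ["your-thing"],
}


def resolve(name, registry, order=None):
    """Return the install order for `name`, dependencies first."""
    if order is None:
        order = []
    if name not in registry:
        raise MissingPackageError(name)
    for dep in registry[name]:
        if dep not in order:
            resolve(dep, registry, order)
    if name not in order:
        order.append(name)
    return order


# While both packages are published, installing "my-thing" works.
install_order = resolve("my-thing", registry)

# Unpublishing "your-thing" breaks "my-thing", even though "my-thing" never changed.
del registry["your-thing"]
try:
    resolve("my-thing", registry)
    broke = False
except MissingPackageError:
    broke = True
```

The point of the sketch is only that removing one package can break installs for everything that depends on it, which is the reason given above for the strict unpublish policy.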

As such, npm has a very strict policy around unpublishing where effectively you just can't do it unless you go the copyright route. So, not only did Anthropic copyright strike npm to get their own package taken down, which is hilarious in and of itself, they also went after every single individual developer who then went and shared the thing that, again, Anthropic published publicly. They were taking a public thing that Anthropic put out, one that was out for over 18 hours on npm where anybody could go to a publicly accessible URL and look at it.

They took this and they put it on GitHub because they wanted it to be easier to look at than having to download the zip and open it themselves. I can hear the argument for that being a copyright infringement and needing to be taken down. I think that, while dumb and gross, is at the very least legal. Yes. What isn't legal is false Digital Millennium Copyright Act strikes, which is when you knowingly send a copyright strike to an individual who has not violated any copyright or the strike that you are issuing is not for a copyright violation.

If I violate copyright in place A and you send me a strike for place B where I didn't, that is still illegal. Very important to understand here. Yep. I happen to have done a lot of work on copyright law when I was in college. I have written and contributed to research on this. It's a fun topic for me. I've been deep in it. I've fought Microsoft way back about all this. I care about these things a lot and I really care about the Digital Millennium Copyright Act being used as written and intended legally. Yes. Which is why when I woke up on April 1st, April Fools' Day, to a message from my executive assistant telling me that there was an email in my inbox that I had gotten a copyright strike from Anthropic on GitHub, and she was wondering if this was me trying to bait them or something, cuz that's a thing I do.

Yeah. When I hadn't. The copyright strike is because I had filed a one-line code change to the public Claude Code repo. Again, not the one with the source code. The public Claude Code repo is where they put the markdown for, like, the README on how to use it. It's where they put the skills, the plugins, and it's where they have issues for people who have problems so they can have a conversation there. The Claude Code source code is not in the Claude Code GitHub. The Claude Code GitHub had a lot of PRs opened where people were trying to add the source code from the source, which is very funny.

Like, very good joke. I am proud of every person who did that. That is actually hilarious. My fork of the Claude Code repo was to make a one-line change to the frontend skill because they have this awful callout for a retro-futuristic design that just is a slop factory. And I was actually genuinely trying to help them by removing this to make it so that the model would make better front ends. The PR was never touched. It is what it is. I don't expect them to accept anything that Theo contributes. I get where they're coming from.

Hell, that one-line change forked from a legitimate repository was DMCA'd. So my fork of the official repo where I made a one-line change caused me to get my first ever DMCA strike on GitHub, which when I think about it makes sense, because there is no company in history that has sent more DMCA requests to different repos on GitHub than Anthropic. Yep. They are the single most prolific copyright striker in the history of GitHub, which is why GitHub didn't even bat an eye when the report they received told them to ban 8,100 repositories. Mhm. And I think the thing about all of this too, it's kind of a side effect of it, I think this entire saga is just the sloppiness at play right now where, you know, I love these coding agents to death.

I do a lot of stuff with them. I write the vast majority of my code with them. And I get it. It's easy to just fall into the trap of letting the agent do everything. And I would bet money that the agent handled doing all these DMCAs and finding all the repos and sending them out. I am 50/50 as to whether this is GitHub's fault or Anthropic's fault that I specifically got DMCA'd. First and foremost, I know for a fact Anthropic would never choose to DMCA me because whatever they get out of the DMCA is way less than the hit they have to take for me doing content about it.

That video went viral as [ __ ] across all platforms. It was great. It was one of the best birthday presents I got this year. So, thank you for the DMCA notification, Anthropic. The thumbnail for the video was just a screenshot of the email. You handed me a free layup. It was great. And I spent half the video kind of defending them because I don't know if it's their fault or not. My theory for what happened, and I would put this at like 70% confidence: they sent a long report to GitHub about the repos they knew were violations and there was one in particular that was forked a shitload.

So, they said ban this and all of the forks. Also look through the pull requests here, where there's dozens of people trying to file pull requests to the official Claude Code repo adding the source. All of these should be DMCA'd as well, which means they probably left the link to the pull requests for the official Claude Code repo in the report. And when GitHub went to honor it, they saw that link, they saw ban forks, and they put two and two together and banned all of the forks of the wrong repo. It's something along those lines, most likely.
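
[Editor's sketch, not from the episode: a toy Python model of the theory above, in which a "ban this and all forks" instruction gets applied to a link for the wrong root repository. Every repo name, the `Repo` type, and the `expand_to_forks` helper are hypothetical. Nothing here reflects how GitHub or Anthropic actually processed the report, which the hosts say they do not know.]

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Repo:
    name: str
    fork_of: Optional[str]  # name of the parent repo, or None for a root repo
    contains_leak: bool     # whether this repo actually holds the leaked source


# Hypothetical repositories for illustration only.
repos = [
    Repo("official/tool", None, False),            # public docs/skills repo
    Repo("alice/tool", "official/tool", False),    # innocent one-line-change fork
    Repo("bob/tool", "official/tool", True),       # fork used for a PR adding the source
    Repo("mirror/leak", None, True),               # heavily forked leak mirror
    Repo("carol/leak", "mirror/leak", True),       # fork of the mirror
]
by_name = {r.name: r for r in repos}


def expand_to_forks(roots, repos):
    """Every repo that is one of `roots` or a direct fork of one of them."""
    return {r.name for r in repos if r.name in roots or r.fork_of in roots}


# Naive reading of the report: both links are treated as "ban this and all forks".
naive = expand_to_forks({"mirror/leak", "official/tool"}, repos)

# Same expansion, but each candidate is checked for the leaked content first.
checked = {name for name in naive if by_name[name].contains_leak}
```

In this toy model the naive expansion sweeps up `alice/tool`, the fork with an unrelated one-line change, while the per-repo content check leaves it alone.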

I still, like, I don't know how DMCAing works. I've never done it. You send an email with links and you trust them to take down the right links. Can you just tell them, okay, go kill everything that looks like this? Like, don't they have to at least give them specific links? Yeah, but also, like, some of the most schizophrenic things I have seen in my inbox are people trying to send DMCA requests to my videos randomly on YouTube. You can just go back and forth with the holder, like the publisher, for months.

And what's really funny is if the DMCA strike is honored, all of those comms have to be forwarded to the affected users, which didn't necessarily happen here, which I actually think, now that I think about it, is another violation of the law that I should hit up my GitHub contacts about. It is also worth noting, this is a very important detail: GitHub has not had their DMCA process stress tested. I am almost positive the DMCA GitHub email is some guy who once or twice a week has to go through it, figure out what is and isn't valid, and then go remove them.

I don't think it's an agent. And I think it's some poor underpaid individual that has to do just this, or it's just one of their five jobs at GitHub. This whole process is not a system. And a lot of people are looking for, like, oh, here are the steps. There's no [ __ ] steps. There's no [ __ ] system. You send an email and you hope they do the right thing. Someone did the wrong thing. It could be that the email linked the wrong stuff. It could be that GitHub hit the wrong repos. It could be that they spawned and hit all the forks from the Anthropic Claude Code repo and not the malicious Claude Code repo that they were upset about.

Mm, it doesn't really [ __ ] matter though because I was illegally struck. Yep. And I got free content out of it. They did reverse it, which also means Anthropic is now the single company to have reversed the most DMCA strikes in the history of GitHub as well. So congratulations, Anthropic, breaking records left and right. Notably not records for coding models, not records for usability of models, not benchmarks, as they haven't broken those for a while. But uh yeah, they broke the record for GitHub DMCAs. The Metallica of the AI world. You're not wrong. Oh, Anthropic. Yeah, my guess is it was an email with a fuckload of links, and that fuckload of links from Anthropic came from them just doing some scraping process or some whatever, and there were so many thousands of links that it wasn't checked.

I would be very, very surprised if my GitHub URL was in the email. Yes, that's what I'm saying. I don't think that they had t3dotgg/claude-code. I think they had anthropics/claude-code pulls. It said go through here and ban all the ones adding the source code, and they missed the "adding the source code" part and they just banned every fork that was in there. I think every open PR on the Anthropic Claude Code GitHub was hit, is my guess. I would love to know that. If only this company was competent enough to share details instead of just vaguely telling me it's not their fault.

And this is the problem. I will continue to give them [ __ ] as though it is their fault because they have not given me the resources I need to know if it is or not. And to be clear, I think it is their fault. I think that Anthropic put your link in there, personally. I think that's what happened. And then that just got on the list and they just went down the list and did it. And Anthropic didn't review the list of links that they sent because they just grabbed all of them, because there were so [ __ ] many of them.

And that's how it slipped through. Like if they were manually auditing them, they wouldn't have done that one. Anthropic, I'm trying to defend you guys. I'm under an NDA. I'll gladly sign more. If you want to show me the specific email that was sent to GitHub over this DMCA that struck me personally so I can know whether or not you included me and who the party at fault is here. I would love to see that and finally be able to issue a corrected statement about what the [ __ ] went wrong here. But I can't. You don't give me what I need to defend you, but you give me lots of things to tear you to shreds.

I just want to report what's going on. And I can't do that when you hide the things that I could use to defend you and you rub in my face the things that you do [ __ ] wrong. Yeah. And the thing about this too is, I don't know. I know how it works on YouTube, where DMCA strikes can be a very, very big problem if they actually go through. If you got falsely hit, how many others got falsely hit? Like, that's the even scarier thing here, where like 8,100 is the number. Yeah. And some of those I'm sure were valid.