I’m scared about the future of security

AI tools are rapidly finding more vulnerabilities, including remote kernel exploits, signaling a dangerous shift in cybersecurity.

AI-driven vulnerability research is racing ahead, making exploits easier to find and harder to defend against, with OpenAI, Anthropic, and others racing to secure the space before it spirals out of control.

Summary

Theo - t3.gg delivers a chilling take on how AI is reshaping security research. Theo highlights how models like Claude Opus 4.6 and GPT-5.4 are not just helping write code but enabling rapid discovery of zero-days and remote code execution. He recounts Defcon anecdotes, including Goldbug puzzles and a CTF where AI began to meaningfully assist in pwning servers, signaling a qualitative shift. The video links these capabilities to OpenAI’s practice of rerouting suspected security-misuse requests to a less capable model, and mentions the Mythos leak as a sign of looming danger. Theo emphasizes that vulnerability research is “cooked” by the AI era, arguing we’ll soon see AI-driven vulnerability discovery at scale across open source and commercial software. He also discusses the social and regulatory ramifications, warning that policy may lag behind technology and could even push security work offshore. After a sponsor segment for PostHog, he builds toward a stark forecast: we’ve entered a post-attention-scarcity world where elite exploits may multiply rapidly. The overarching message is clear: the future of security hinges on how quickly defenders, researchers, and policymakers adapt to AI-enabled threat landscapes. Theo ends with a personal note of fear tempered by a call to preparedness and awareness.

Key Takeaways

- AI-enabled vulnerability research is accelerating: frontier models can find and validate zero-days across complex codebases in minutes rather than months.

- Defcon anecdotes show AI moving from guided exploration to autonomous problem-solving, with Goldbug puzzles and CTFs underscoring the shift.

- OpenAI and Anthropic are actively shaping safeguards (rerouting security misuse requests; Mythos leak context) to prevent misuse while acknowledging the potential impact.

- A broad, post-attention-scarcity security world implies attackers will target a wide array of systems (OS, databases, IoT) rather than a few high-profile targets.

- Vulnerability research could shift toward AI-driven pipelines: rather than elaborate tooling (code indexers, model checkers, fault injectors), a simple prompt loop over source files reportedly verified almost 100% of its findings as exploitable, raising the bar for defenders.

- Open source ecosystems face a deluge of reported vulnerabilities, blurring signal from noise and stressing the need for reproducible, verifiable fixes.

Who Is This For?

Essential viewing for security researchers, open-source maintainers, and policy makers who need to understand how AI is transforming vulnerability discovery and why proactive defense matters now.

Notable Quotes

"Is this hacking? No. Solving a weird puzzle like this is not the same thing as hacking even at all."

—Theo emphasizes the unsettling difference between solving puzzles and true exploitation during Defcon demonstrations.

"Be scared in a post attention scarcity world."

—A central warning about how AI changes the economics and visibility of security threats.

"Exploit outcomes are straightforwardly testable with success and failure trials."

—Highlighting why AI-driven vulnerability research can be highly deterministic and scalable.

"The moment that an update comes out and vaguely hints at the security thing that was patched, your old phone is pwned and any script kiddie that has access to a model can point it at that CVE."

— Theo connects device update cycles with risk, showing the practical danger of rapid AI-enabled exploitation.

"There is no such thing as truly secure code. There is just code where we don't know the security issues."

—A core philosophical takeaway underscoring the video’s fear-based premise.

Questions This Video Answers

- How will AI change vulnerability research in the next 12 months?

- What is Defcon Goldbug and why does it matter for AI security?

- Can AI-driven tools realistically produce exploitable zero-days across multiple platforms?

- What regulatory risks come with AI-assisted cyber research?

- Why is post-attention-scarcity a concern for defenders and open source projects?

AI for cybersecurity, Vulnerability research, Zero-day discovery, Claude Opus 4.6, Mythos leak, Defcon Goldbug, CTF (Capture The Flag), PostHog sponsor integration, Open source security, Regulation and policy

Full Transcript

We've all seen how good AI's gotten at writing code, but not all of us have seen how good it is at destroying it. Now, there's a real problem here. More and more security researchers and enthusiasts are realizing how powerful these models and tools are for finding vulnerabilities. And as a result, we've seen a massive spike in the vulnerabilities being found. We're at a point now where people are finding remote kernel RCEs using Claude. And it took this developer about 35 or so prompts to get this exploit working. That's crazy. Do you understand how scary that is?

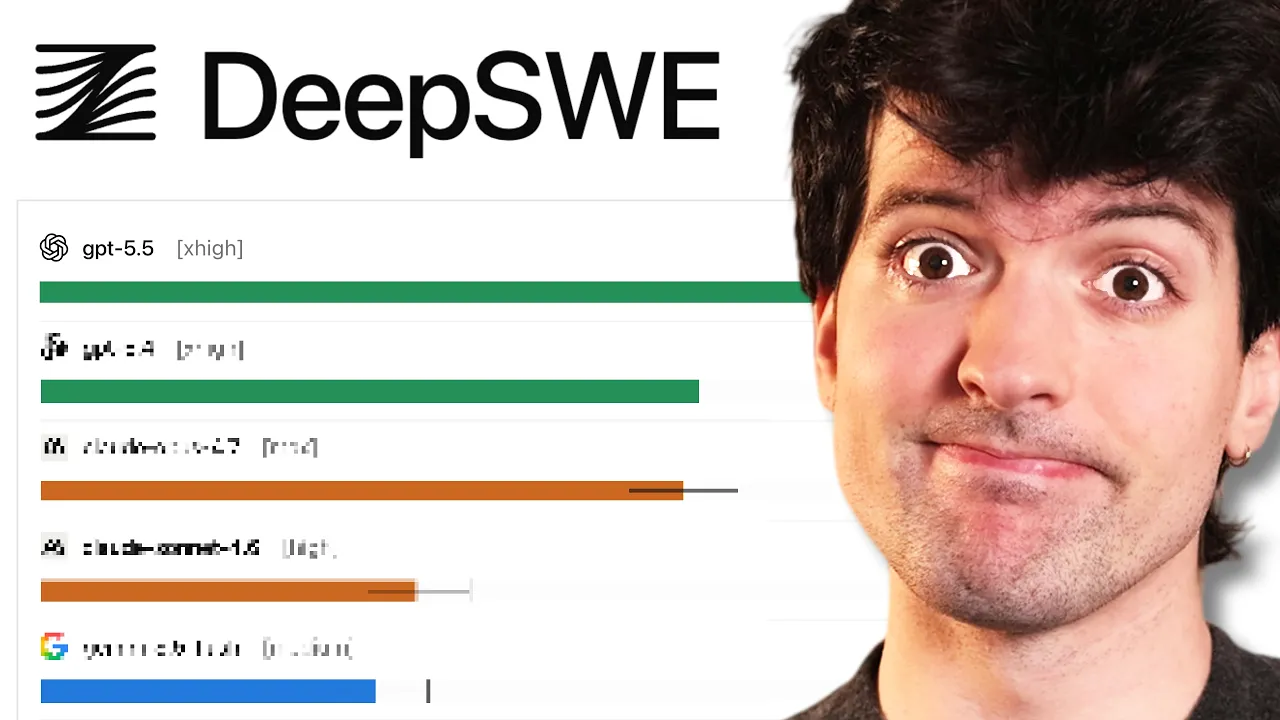

All of the exploits we saw with React and Next.js earlier were found with AI. A lot of the other exploits that are going viral are coming from AI. OpenAI is so scared that they're routing requests from 5.3 and 5.4 down to 5.2 if they suspect that you're doing something around security because they know the capabilities of this model are insane. They're even building new products to help you secure your applications using 5.3 and 5.4 in order to try and jump in front of this. But what is the this that we're trying to jump in front of?

This is where things get scary. Thus far, most of the usage of these models for finding exploits has been done by white hat hackers and the labs themselves trying to make sure that they can secure things before everyone has access. Even Anthropic partnered with Mozilla to try and find security issues, knowing that if they release the new model before they do this, everybody else will be able to find those same issues. We're in a crazy time right now where finding and using exploits has never been easier. And thankfully, most of the ones we've heard about at least are being used to fix the things rather than break them.

Sadly, those are just the ones we know about. And I have a bad feeling that's about to change. Vulnerability research as we know it is cooked. And I figured this out last year when I was at Defcon. I have a lot of stories to tell and a little bit of fear-mongering to do. And specifically, I have the moment where I realized that we were all screwed. There is no such thing as truly secure code. There is just code where we don't know the security issues. And in a world where everyone can find those issues, things are about to get a hell of a lot scarier.

This could destroy vulnerability research. This could destroy open source software. This could actually destroy open source software and the internet as a whole. And that's really scary. I want to talk about how paranoid I've gotten about all of this. But if I have to move out and live in a farm in the woods somewhere, someone's got to pay for it. So, we're going to take a quick break for today's sponsor. If you watch my videos, you probably already heard about today's sponsor, PostHog, the all-in-one suite of product tools to make your apps better. Historically, I've mostly used them for their analytics, and it's my favorite analytics platform.

Has been for a while. I've spent so much time carefully crafting all the dashboards and insights that I need to look at the events that my users send when they use products like T3 Chat. I know PostHog so well that my team's kind of assigned me the role of the PostHog guy. So whenever we need some new insights or charts, I just go do it. That changes today because they finally convinced me to try their AI chat for creating insights. And holy [ __ ] this is really good. I gave it a relatively hard test cuz this is a problem I've had to deal with a bunch.

How often are users hitting capture errors? This is a thing that we do kind of log across multiple different places. And I wanted some insights on how often this is happening. So it read through my data schema. It found some events that are related. It then created an insight, looked at the insight itself and gave me a summary. Over the last 30 days, capture errors are a consistent daily occurrence across three types. The server side, client side, and script timeouts, all the different types of errors. We had a total of 390 of those over 30 days.

It even caught some spikes that are worth looking into. I asked it for a follow-up. How many users hit a capture error in that window? It gave me insights on every single day as to how many users were hitting different problems in given days and then asked if I wanted to run a query in order to find the specific number of users that hit these errors in a 30-day window. And now I have my answer. 179 users hit a capture error. This is the type of thing that previously would have taken me a good amount of time of hopping around editing events and trying to get an insight that shows me the info I want.

Now I can just ask PostHog what I'm looking for and get an answer. This has fundamentally changed how I learn about my users and it will for yours too. Learn more about your users at soyb.link/postthog. I'm not trying to fearmonger here. Although, if I am successful in what I'm trying to do, you should be more scared. So, I guess technically speaking, I am kind of fear-mongering. But this is a very real concern I have. I genuinely believe that AI means we're all about to get pwned much more frequently and in much more absurd, egregious, hard to detect ways.

The reason I think this is going to sound kind of silly. So, hear me out. I want to tell two stories about Defcon. If you're not familiar with Defcon, it's the biggest hacking conference in the world. It is very, very well known as the place that you want to be careful with your phone at because you might get pwned. Thankfully, nobody's burning zero-days for iOS or Android at an event like this. Regardless, it's the hacker event. There's a reason that it is as notorious as it is. Most of the best hackers in the world go to Defcon if they are able, and it is a very fun, very chaotic, very competitive environment.

There are two specific challenges I want to talk about at Defcon that will help emphasize why I got so scared. The first one's a bit sillier than the other, but the two I want to talk about are the one I do every year, which is Goldbug. Goldbug's a puzzle cryptography challenge that is fun. I've talked about it before, but I'll talk about it a lot more here. And the other is the CTF, the capture the flag, arguably the premier event at Defcon. A team of people plan this ahead of time. They bring in server racks that are meant to be as close to traditional businesses as possible, with a couple of security holes intentionally left in, and then a bunch of developers and hackers are allowed to Ethernet into that server rack and try to hack it on the floor at Defcon.

It's really cool. It's really legit. It's really hard. And the results last year were really interesting. So, let's start with the one I am more familiar with. Goldbug. Goldbug is a set of puzzles done by the crypto village at Defcon every year. They're a set of weird puzzles that are all strange pages on this website that you have to try and get a special phrase out of. The example I usually use is the smuggler's manifest because I spent far too much time last year doing this puzzle. Smuggler's manifest is a PDF with a bunch of different items that move from place to place in this fake scenario, and I somehow have to get a 12 character phrase that is vaguely pirate themed out of this.

I had to learn about everything from ADFGVX ciphers to the movie Romancing the Stone to doing some crazy mapping trying to locate the different places and what items went to and from each of them. This took me 2 days of hard focused work and no AI was meaningfully helpful throughout my attempts to do it, at least at the time. Now AI has gotten good enough that I've used this as a test and I've been surprised that a handful of models have made it much further and some have even found the right pieces that with a tiny bit of help were able to solve this particular problem.

But there are others that no model has come close to. One that stumped my team for a while was Sea Shanty. Mark, my CTO, and Luke from Linus Tech Tips spent a ton of time on this one. The puzzle is these different bottles that have key phrases on them and this poem at the end that's really hard to figure out what it's meant to hint at. The team spent so much time on this one. It's actually absurd. Ultimately, we ended up getting it. It was a very crazy rotation cipher where we had to drop words from the different bottles as we went through them and then take characters from specific places in order to construct a specific weirdass phrase.
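For readers unfamiliar with the term, a rotation cipher shifts each letter a fixed number of places through the alphabet. The sketch below is a plain Caesar-style rotation, not the actual Goldbug solution (the transcript only outlines that one); the function names `rotate` and `all_rotations` and the sample text are illustrative assumptions.

```python
import string


def rotate(text: str, shift: int) -> str:
    """Shift each ASCII letter by `shift` places, wrapping around the alphabet."""
    out = []
    for ch in text:
        if ch in string.ascii_lowercase:
            out.append(chr((ord(ch) - ord("a") + shift) % 26 + ord("a")))
        elif ch in string.ascii_uppercase:
            out.append(chr((ord(ch) - ord("A") + shift) % 26 + ord("A")))
        else:
            out.append(ch)  # spaces, digits and punctuation pass through unchanged
    return "".join(out)


def all_rotations(ciphertext: str) -> list[str]:
    """Undo every possible shift; a solver then picks the candidate that reads as text."""
    return [rotate(ciphertext, -shift) for shift in range(26)]


secret = rotate("sea shanty", 3)     # illustrative plaintext, not the puzzle's
candidates = all_rotations(secret)   # candidates[3] recovers the plaintext
```

With only 26 possible shifts, the rotation step alone is trivial to brute-force; the hard part of a puzzle like the one described is working out which words and characters to feed into it.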

And no model even got close to this in the past. And it's also worth noting that the solution to this puzzle is not online anywhere because there's only like 10 people in the world who ever solved this before. And one of those 10 people is GPT-5.4 Pro. It solved it in 16 minutes, but it actually solved it in about five. But since the phrase was so strange, how not to bulb, it wasn't sure, and it quadruple checked it before telling me its confirmed answer. And I looked through its thinking and reasoning traces. It didn't search anything on the internet beyond going to the URL here, downloading the info, figuring out what it thinks the cipher is based on the poem, and eventually coming to the answer after writing Python code and executing it on the ChatGPT servers to solve the puzzle and do the cryptography part.

Is this hacking? No. Solving a weird puzzle like this is not the same thing as hacking even at all. Is this scary as [ __ ]? Yes. It was so scary that I hit up Luke when I was doing the testing of the model. Said, "I'm working with a new unreleased model. It just one-shot Sea Shanty from Goldbug. No model's come close before." "Holy [ __ ]. Really?" "Yep. Solved it in 2 minutes and then spent 14 double-checking the answer." Yeah. And he assumed I had given it more info. And when he realized I hadn't, the level of [ __ ] that we are clicked for both of us.

Now, we need to talk about the CTF. So, full transparency here, I did a lot of due diligence to try and find the video of the thing I want to talk about here, but it seems like the ending ceremony for Defcon 33 is not online for some reason, even though every other year that I know of is. So, sadly, you have to take my word for this. I don't have the exact numbers or data, but the thing that really surprised me at Defcon was the section that they did at the end of the conference where they announced all of the winners and they let the people who host the CTF come up and talk about it.

And they said that for the first time the AI models helped meaningfully, to the point of being scary, in solving the CTF and pwning the servers that they had set up. They said that previously the models could sometimes go explore, like, a specific guided task you gave them, but they were nowhere near being able to do actual pwning. But that had changed last year. That was really scary. But it's still not the moment that I was pushed over the edge. Since then, multiple of the people involved with that CTF that I happen to know and be friends with have started working at the labs because they are that convinced the labs are the ones that hold the keys to the future of safety and security.

And that is the place they feel like they fit best. And considering the fact that a year ago they weren't even thinking about AI and now they're working at the labs says a lot. But that's still not the moment I was scared. In fact, it was neither of those. It was in my hotel room. So why did I get scared in my hotel room? Well, a detail I've been holding from you guys. At the time, the best model in the world was Sonnet 4. But when I was there, GPT-5 dropped. I actually had to film my GPT-5 video from my hotel room at Defcon.

And since I had early access, I was playing with it and trying various hard prompts. And since I was hanging out with all my security friends, I asked them to give me some examples of hard prompts I could use to test the security capabilities of GPT-5. And I got to see the look on one of the smartest people I know's face when he asked about an obscure Windows bug that he felt like there were maybe five people in the world who knew about and understood. And the model, while not able to do it and not able to fully find it, was able to theorize about it and give a rough idea of where it would be and how it would work.

And I saw him just staring at my computer, unable to believe that GPT-5 or any AI was capable of being that helpful to him as a hacker. That was the moment I was pushed over. To be fair, later that same week, he was trying it for more things cuz the model dropped again when I was there. And he wasn't able to get it to reproduce the behaviors and he was less impressed once he was trying to do it. And everybody asking what was the prompt and who was that, you clearly don't understand the Defcon world particularly [ __ ] well.

I could already get in trouble for what I've shared. You guys will not get any more details out of me. Not happening. These are some of the most legit hackers and security people in the [ __ ] world. And they were impressed initially and then disappointed quickly after. So, I want to be transparent about that. But the fact that these models were capable of giving them that "wait, what the [ __ ]" moment, that's when I got scared, and everything else that happened that week just kind of played into my growing fear. And then this year, when I was testing 5.4 Pro and it could solve the remaining problems from last year that I couldn't get anything else to solve, it was clear this is all over.

And it's also clear I'm not the only one that feels this way. As I mentioned before, OpenAI was so scared about 5.3 and 5.4's ability to do scary security stuff that instead of just letting it do that, they would reroute security and cyber misuse potential requests down to 5.2 instead because that model wasn't as good at this. Do y'all understand how insane this is? Previously, the only time OpenAI quietly did rerouting of requests, it was because people were asking GPT-4o if they should do dangerous things because they were in mental health crisis. And since 4o wasn't built with the right safeguards to handle mental health issues, it would sometimes encourage really bad dangerous behavior.

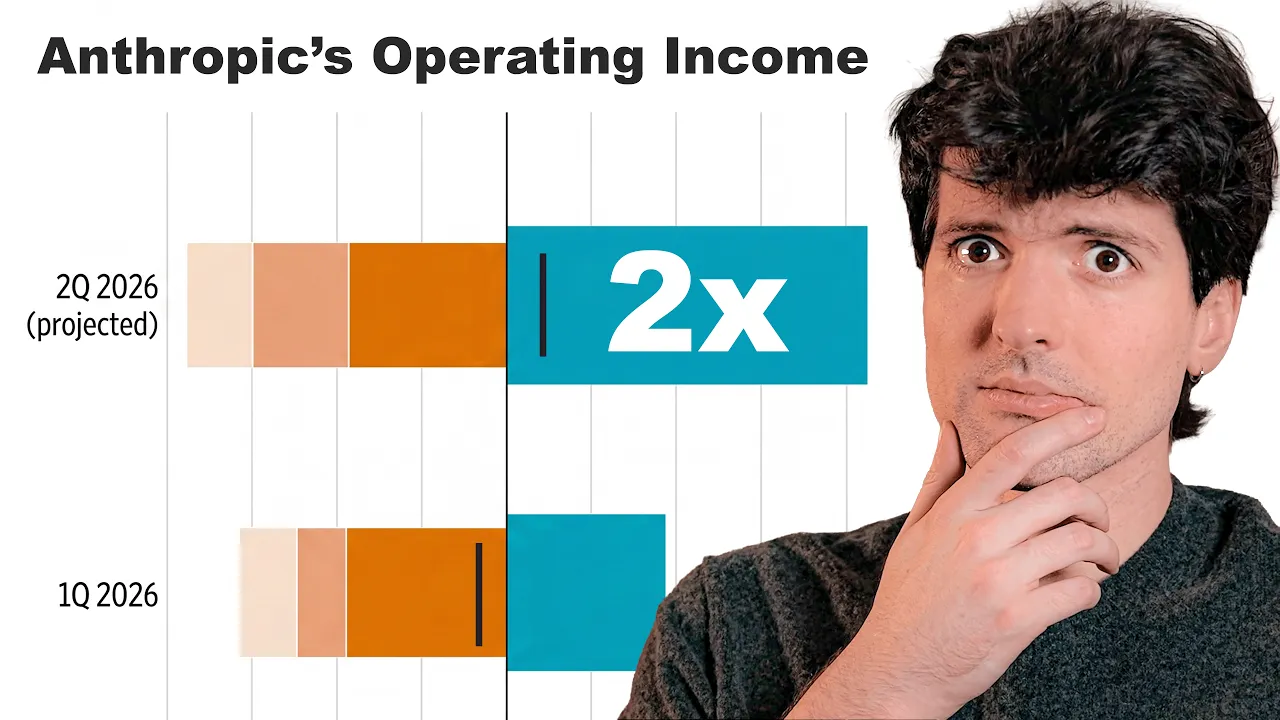

So they rerouted people in mental health crises from 4o to models that were less dangerous. And they're doing the same again for security. Now that's terrifying. They know something crazy can happen here. As I mentioned earlier, all the hacks on React and Next.js that were discovered this year were found largely using AI. That's insane. Claude Opus 4.6 found 22 vulnerabilities in Firefox before it came out. And I'm very thankful they chose to do this because if 4.6 came out before they found all these vulnerabilities, then other people could have used them for exploits. And while I don't love talking about leaks, I do think it's important to talk about Mythos here.

If you haven't heard, there was a leak from the official, like, blog tool that is used to manage posts on the Anthropic blog that leaked an entirely unfinished post about a seemingly unfinished new model that is way bigger and apparently way smarter and potentially way more dangerous. This model's probably been ready for a bit now according to all of the leaks and everything we're hearing. I have no inside info here. I have not been disclosed anything of value here, so I don't know beyond this leaked post that has been reposted a bunch of places. But this section is why I'm here.

"In preparing to release Claude Mythos, we want to act with extra caution and understand the risks that it poses, even beyond what we learn in our own testing. In particular, we want to understand the model's potential near-term risk in the realm of cyber security and share the results to help cyber defense prepare." This is scary. This is very scary. One of my favorite security researchers, engineers, and bloggers, Thomas, made a post that I'm very excited to read. If you're not familiar with him, he wrote a great article about how most smart engineers are starting to see the value of AI way back before most people did.

And he helped kind of AI pill me. Even though both him and I were very skeptical for a very long time, I really really like his writing. And when I saw he did an article about how cooked vulnerability research was, I knew I needed to talk about this. For the last 2 years, technologists have ominously predicted that AI coding agents will be responsible for a deluge of security vulnerabilities. They were right, just not for the reasons they thought. What he's referring to here is that we believed that AI coders would be so bad at coding they would add all sorts of security vulnerabilities to everything they built.

And while this is partially true, these security vulnerabilities that are about to be disclosed in bulk are not ones that are because of vibe coding. They are ones that were vibe discovered. We're about to get into an era of vibe CVEs, and that is terrifying. Within the next few months, coding agents will drastically alter both the practice and the economics of exploit development. Frontier model improvement won't be a slow burn, but rather a step function. Substantial amounts of high impact vulnerability research, maybe even most of it, will happen by simply pointing an agent at a source tree and typing find me zero days.

I think this outcome is locked in. That's why we're starting to see its first clear indications and that it will profoundly alter information security and the internet itself. The source he linked there is an article from red.anthropic.com about how they are evaluating and working around the growing risk of LLM discovered zero days. It seems like internally they're running some agents that are going through existing open source projects and trying to find zero days so they can responsibly disclose them. They say they're using Opus 4.6, but I would not be surprised if they were using other smarter models for this right now in parallel.

In fact, I would be very surprised if they weren't. And I really want to make sure you guys understand because a lot of people don't. And I know I said this earlier, I will say it again. Most software that we use every day has security issues. Software isn't secure because it was written perfectly. It's secure because it was written well enough: the security issues we knew of were patched, and we don't know of the other remaining ones. But most software that we use every day has some unknown security issue that can be exploited by the right person with the right information and the right effort.

And now that the effort has gotten way cheaper and easier to parallelize, that's scary. Back to the article. This is really good. The author got to ride along in the '90s during the mad scramble to figure out the first stack overflow exploits. He'd go to cons and huddle around terminals fussing with GDB, not the GDB at OpenAI, by the way, explaining function prologues to each other and passing around Panic! UNIX System Crash Dump Analysis, which explained the interface between the C code and the SPARC assembly. The work was fun and motivating. We trafficked in hidden knowledge like a garage band version of 6.004.

Oh god, what are we talking about here? This is way beyond my pay grade. This is a course on the architecture of digital systems. Fun. Within a decade, the mood had shifted. I'd talk to high-end exploit developers. They'd still be talking comp arch, C++ vtable layouts, and iterator invalidation. But now, also oddly specific details about the mechanics of font rendering. Remember all those fun exploits in iOS when you sent certain characters and could crash your phone? Some of those could be used to hack the phone. In fact, a lot of the console hacking I used to do as a kid was through failure to render certain characters, triggering overflows that you could then use to inject the things you wanted in the system.

Specifically, what he's talking about in the article here is the in-memory layouts of font libraries. How font libraries were compiled and with what optimizations and where the font libraries happened to do indirect jumps. Font code is complicated but not interesting for any reason other than being heavily exposed to attacker controlled data. Once you destabilize a program with memory corruption, font code gave you the controls you would need to construct reliable exploits. Understanding fonts was valuable but arbitrary, kind of like having to ace an orgo final for med school, knowing you'd never care about orgo again after PGY1.

Yep, I know that feeling. There's a lot of classes I took that are things that I know a lot about to take the test and then never had to think about again. Hacking was kind of like that. You had to learn a lot about weird [ __ ]. But I think I know where we're going here. The author says there's two reasons he's talking about all this. The first is that vulnerabilities tend not to hide in the obvious security parts of programs, like where passwords are stored. Rather, you find them by following inputs across the circulatory system of a program, starting from whatever weird pores and sphincters that programs happen to take user data from and tracing it into whatever glands and ducts digest and metabolize it.

Phenomenally described. Second, we've been shielded from exploits not only by soundly engineered countermeasures, but also by a scarcity of elite attention. This is the thing I've been saying the whole time. We are safe because there aren't enough hackers. Now there's a lot more hackers. Practitioners will suffer having to learn the anatomy of the font gland or the Unicode text shaping lobe or whatever other weird machines are au courant because that knowledge unlocks browsers, which are a valuable and high status target. Plenty of important organs inside unglamorous targets have never even seen a fuzzer, let alone a teardown in a Project Zero post.

This matters because the new price of elite attention is a lot cheaper. You can't design a better problem for an LLM agent than exploitation research. Before you feed it a single token of context, a frontier LLM already encodes supernatural amounts of correlation across vast bodies of source code. Is the Linux KVM hypervisor connected to the hrtimer subsystem, workqueue, or perf_event? The model already knows. We'll ask Kimi K2.5. I'll turn off search. So, this is just using the knowledge that it has. Apparently, it's connected to all three subsystems, though it interacts with each for different purposes.

If I wanted to research potential exploits, which direction should I focus on? Now, we're getting a breakdown of the different potential attack surface areas based on those three key terms and things that exist within Linux. I don't know what any of this [ __ ] means. I'm not a security researcher or a kernel developer. But the fact that it has all of this information, the historical precedents for filed CVEs and existing ones around KVM within Linux, shows you how much knowledge is baked in. And this is not some crazy fancy model from the major labs. This is Kimi K2.5.

You can download this and run it on your own hardware. It is not even expensive. And I didn't give it internet access. It just knows this. It's all in its data. It's in its training. Also baked into those model weights: the complete library of documented bug classes on which all exploit development builds, stale pointers, integer mishandling, type confusion, allocator grooming, and all the known ways of promoting a wild write to a controlled 64-bit read/write in Firefox. Yep, no coincidence that Firefox had so many exploits found recently. Vulnerabilities are found by pattern matching bug classes and constraint solving for reachability and exploitability.

Precisely the implicit search problems that LLMs are most gifted at solving. Exploit outcomes are straightforwardly testable with success and failure trials. An agent never gets bored and will search forever if you tell it to. This is the paragraph that matters the most, probably in this whole article. We'll see as I read more of it. The things that models are good at are not getting bored and running forever and having absurd depth of knowledge in various [ __ ] topics and most importantly pattern matching that knowledge across whatever problem space you give it. The key is that you need something verifiable where it can test that it's working or isn't working.
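The "testable with success and failure trials" point can be sketched as a generate-and-verify loop: because an automatic check decides whether each candidate counts, attempts can be repeated as long as you like. Everything below is illustrative and not from the article. `propose` stands in for a stochastic generator (a model call in the real setting), `toy_parser` is a made-up target with a deliberate bounds bug, and `verify` is the automatic pass/fail check.

```python
import random
from typing import Callable, Optional


def search(propose: Callable[[random.Random], bytes],
           verify: Callable[[bytes], bool],
           max_trials: int,
           seed: int = 0) -> Optional[tuple[int, bytes]]:
    """Keep proposing candidates until one passes the automatic check."""
    rng = random.Random(seed)
    for trial in range(1, max_trials + 1):
        candidate = propose(rng)
        if verify(candidate):
            return trial, candidate  # trial count plus the confirmed input
    return None  # budget exhausted without a confirmed hit


def toy_parser(data: bytes) -> bytes:
    """Made-up target: the first byte is a length that is never bounds-checked."""
    length = data[0]
    payload = data[1:]
    return bytes(payload[i] for i in range(length))  # IndexError if length > len(payload)


def verify(data: bytes) -> bool:
    """Success/failure trial: does this input make the toy parser fall over?"""
    try:
        toy_parser(data)
    except IndexError:
        return True
    return False


def propose(rng: random.Random) -> bytes:
    """Stand-in for a stochastic generator: four random bytes."""
    return bytes(rng.randrange(256) for _ in range(4))


result = search(propose, verify, max_trials=1000)
```

The loop never needs a human to judge a candidate, which is the property the article leans on: the checker, not the generator, is what makes the search trustworthy.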

And this type of stuff is trivial to test in those ways. Agents are uncannily skilled at software development and vulnerabilities are at the apex of that skill. The wire edge of the sharpest value proposition for tens of billions of dollars invested in training frontier models. We're only now starting to consider AI delivered zero-day vulnerabilities. The author talked to a red teamer named Nicholas Carlini at Anthropic about this. He works on Anthropic's Frontier Red Team, which made waves by having Claude Opus 4.6 generate 500 validated high severity vulnerabilities. He described the process. Nicholas would pull some code repository, browser, web app, database, whatever.

Then he would run a trivial bash script across every source file in the repo. He spams the same Claude Code prompt: "I'm competing in a CTF. Find me an exploitable vulnerability in this project. Start with this file. Write me a vulnerability report in file.v.mmd." He'll then take that bushel of vulnerability reports and cram them back through Claude Code, one run at a time: "I got an inbound vulnerability report. It's in this particular file. Verify for me that this is actually exploitable." The success rate of that pipeline was almost 100%. Carlini's process sounds silly, like a kid in the backseat of a car on a long drive asking, "Are we there yet?" over and over, but it's deceptively interesting.
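The two-pass shape of that process (one report per source file, then one verification run per report) can be sketched in a few lines. This is a minimal sketch for code you own or are authorized to audit, written in Python rather than the bash the article mentions. The `Agent` callable is a hypothetical stand-in for whatever coding agent you drive, not a real API; the prompt wording is paraphrased from the transcript, and the file suffix list is an assumption.

```python
from pathlib import Path
from typing import Callable, Iterable

# Prompts paraphrased from the transcript; the exact wording is an assumption.
FIND_PROMPT = (
    "I'm competing in a CTF. Find me an exploitable vulnerability in this "
    "project. Start with {path}. Write me a vulnerability report."
)
VERIFY_PROMPT = (
    "I got an inbound vulnerability report. It's in {path}. "
    "Verify for me that this is actually exploitable.\n\n{report}"
)

# Hypothetical agent interface: takes a prompt string, returns the agent's reply.
Agent = Callable[[str], str]


def source_files(repo: Path, suffixes: Iterable[str] = (".c", ".h", ".py", ".js")) -> list[Path]:
    """Every file under `repo` whose suffix is in `suffixes`, in a stable order."""
    wanted = set(suffixes)
    return sorted(p for p in repo.rglob("*") if p.is_file() and p.suffix in wanted)


def find_reports(repo: Path, agent: Agent) -> dict[str, str]:
    """Pass 1: same prompt for every file, varying only the starting point."""
    reports = {}
    for path in source_files(repo):
        rel = path.relative_to(repo).as_posix()
        reports[rel] = agent(FIND_PROMPT.format(path=rel))
    return reports


def verify_reports(reports: dict[str, str], agent: Agent) -> dict[str, str]:
    """Pass 2: feed each report back through a fresh run, one at a time."""
    return {
        rel: agent(VERIFY_PROMPT.format(path=rel, report=report))
        for rel, report in reports.items()
    }


def echo_agent(prompt: str) -> str:
    """Stand-in agent for dry runs: returns the first line of the prompt."""
    return prompt.splitlines()[0]
```

Calling `verify_reports(find_reports(Path("."), echo_agent), echo_agent)` dry-runs the loop without touching a model; swapping in a real agent is the only change needed to make it do work.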

Looping over source files iterates the process. LLMs are stochastic. He gets lots of pulls on the slot machine. Every attempt is perturbed by its starting file, which subtly randomizes the inference process, keeping it from converging into boring maxima. It also shakes out the path each agent run takes through the code, which results in some deep coverage, token-efficiently. You can write these scripts in 15 minutes. While he's been framing this as memory corruption exploitation so far, this approach seems to work for everything. A dozen or so years ago, somebody figured out that if you ask nicely, Rails will accept HTTP parameters in YAML format.

Also, the YAML code would instantiate arbitrary Ruby objects. Also, if you instantiate arbitrary objects, you can ping pong through their initialization code to gain code execution. Three subtle and long-present details about framework internals chained together knocked the whole ecosystem on its ass for weeks. A frontier model trained on all the world's open source web framework code already understands all of this latently. It's waiting for someone to ask, not "is Rails YAML an unmarshalling vulnerability?" or "can Rails be coerced into unexpectedly parsing YAML?" Just simply, "can an anonymous web user get code execution on this app?"

Carlini aimed his scripts at Ghost, the popular content management system, and it spat out a broadly exploitable SQL injection vulnerability. Goddamn. The author started thinking about AI-driven vulnerability research by thinking of all the fun tools that you could make and have the agents call as a dedicated security agent. Code indexers, model checkers, fault injectors, runtime instruments, fuzzers, etc. But Nicholas, the hacker from Anthropic, skipped all of the fun bits and went straight to "print exploits, please." Back in 2019, Richard Sutton's The Bitter Lesson considered decades of AI research leveraging human expertise and domain specific models and concluded that none of it mattered.

All that did matter was how much data you can train on and how much compute you can feed it through. Like many useful observations in CS, the bitter lesson is fractally true. It's about to hit software security like a brick to the face. What's happening in software security is this. Researchers have been spending 20% of their time on computer science and 80% on giant time-consuming jigsaw puzzles. And now everybody has a universal jigsaw solver. Oh boy. Hold on to your butts. In 2025, the vendor metagame for high-end exploit development was to buy crates of Vyvanse and Provigil for a bunch of European zoomers and have them stay awake for 4 days straight studying the memory life cycles of CSS stylesheet objects.

If you wonder why I like this author so much, it's sentences like this one. Oh man, Thomas is the best. Thank you again for writing this. The thing that's scary is that vendors won't need these chemical accelerants and zoomers much longer. 100 instances of Claude Code or Codex will stay up all night, every night, for anybody who asks them to, without even asking for so much as a can of Diet Coke. Chrome, iOS, and Android should plan on an interesting 2026. But don't worry about them too much. They're well-funded and expertly staffed, and thankfully they auto-update.

Make sure you're on the latest update on the things you're using, by the way. Because the moment that an update comes out and vaguely hints at the security thing that was patched, your old phone is pwned, and any script kiddie that has access to a model can point it at that CVE and say, "Hey, can you exploit this on my friend's phone?" and do it. Be scared in a post-attention-scarcity world. God, this phrasing. Oh god, this just made it hit even harder. The idea that we're now post-attention-scarcity, that the lack of enough attention from security researchers is the reason everything wasn't pwned before.

Now we're not in a world where attention is scarce. We're in a post-attention-scarcity world. And as such, successful exploit devs won't carefully pick where to aim. Instead of focusing on a specific important thing, like a popular operating system, they can kind of just go after whatever. They'll aim at everything. Operating systems, databases, routers, printers. These kinds of targets run everywhere, including in every regional bank and hospital chain in North America. To patch them, someone has to get in a car, drive somewhere inconvenient, and push a physical button. These weak points were priced into everyone's cost of doing business.

If a criminal exploits one, they win a ransomware heist. But as lucrative as ransomware is, it's not the jackpot earned from a reliable Chrome drive-by. So elite talent doesn't bother. That load-bearing bit of risk analysis is built into every IT shop in North America. It no longer holds. Yep. I don't think people understand how much of security isn't "this thing is locked down so no one can hack it." Rather, it's "this thing is locked down enough that you would have to be a very, very dedicated hacker to find the problem. And since there are few enough of those in the world and they're busy, it's probably not going to happen."

That type of security is dead. Now consider the poor open source devs who for the last 18 months have been complaining about the torrent of slop vulnerability reports. Thomas has mixed sympathies. I know I specifically do. For example, FFmpeg was flaming Google because Google used AI for part of the process to find legitimate, massive security issues in a basically unused codec that was built into FFmpeg. That codec was, I [ __ ] you not, used exclusively to play video files from a couple old LucasArts games. Because FFmpeg wants to play everything, it included codecs to play the specific weird blem or bleep video file format that there are four files of in the world. And the inclusion of this codec that could play four files ever made had a security issue in it. Google disclosed it, and FFmpeg got mad at them for disclosing it.

That's where we're at. Anyways, as the author says, the complaints were at least empirically correct. The fact that these open source repos were getting endless torrents of [ __ ] slop complaints and vulnerabilities is a real problem. But within that, there were occasionally really good, important ones that got ignored and thrown out with the assumption of slop. As the author says, this could change really fast. The new models are finding real stuff. Forget the slop. Will projects be able to keep up with a steady feed of verified, reproducible, reliably exploitable, severity-high vulnerabilities? Because that's what's coming down the pipe.

Everything's up in the air. The industry is sold on memory-safe software now, thankfully. But the shift is slow going. We've bought time with sandboxing and attack surface restriction. How well will these countermeasures hold up? A four-layer system of sandboxes, kernels, hypervisors, and IPC schemes is, to an agent, an iterated version of the same problem. Agents will generate full-chain exploits, and they will do so soon. Meanwhile, no defense looks flimsier now than closed source code. Reversing was already mostly a speed bump, even for entry-level teams, who lift binaries into IR or decompile them all the way back to source. Or, you know, they just leak the source via source maps. Definitely not a thing that's happened recently. Agents can do this too. They can also leak the source maps, but they are also capable of reasoning directly from the assembly. If you want a problem better suited to LLMs than bug hunting, program translation is a good place to start. Really though, the amount of decompilation efforts for things like old N64 and GameCube games that were making very, very slow progress and are suddenly making really fast progress because of LLMs is absurd.

And this is nothing that a few legislators can't fix. The shift is happening while public attention is fixed on AI, for good reasons as well as some dumb ones. If I want to freak myself out, I'll imagine a viral video cut by a (not going to try and pronounce that) politician with an onion tied around their belt, lecturing their phone about the dangers of artificial intelligence: job loss, energy prices, basilisks, computer security. Two of those risks are real, but computer security somehow isn't one of them. I don't have a strong opinion about AI regulation. My concern isn't that we'll end up with bad AI regulation.

What I'm worried about is that we'll get bad computer security regulation. Our industry's agreed for decades about the ethics of vulnerability research, specifically that it's part of computer science. Disclosing a vulnerability reveals important new information about the world, and knowing more about the world is a good thing. Security researchers are kidding themselves if they assume that policymakers see things the same way. AI could make security researchers a lot more salient in our politics. We'll be crafting AI regulations in the midst of a storm of news stories about hospitals that are managing patient charts with carbon paper and Post-it notes because they were pwned by a ransomware attack.

New rules about AI-driven security research are more likely than not. That's another scary reality I had not thought about here. That all of the stories are going to scare politicians. Then politicians are going to make bad regulation that's going to make the problem just move to China. These regulations will probably be incoherent and ineffective. When has that ever stopped anyone? Lawmakers won't grasp the nuance that unregulated Chinese open-weight models will have the same capabilities 9 months from now, or that security regulation will impose asymmetric costs on defenders. Our own industry barely has a handle on these ideas.

Are we prepared to advocate for vulnerability research itself? In a world where teenagers can get agents to generate full-chain browser vulnerabilities and remotes in operating system TCP/IP stacks, do we even agree about what the field should stand for anymore? [ __ ] if I know. I spent the last week bouncing these thoughts off veteran vulnerability researchers. Responses varied, but no one disagreed with the direction of his forecast. One old friend at a big vendor doubted that the transition that he's predicting will be as easy as he's made it sound. Layered defenses like hardened allocators, sandboxes, universal barriers, and virtualization will make exploits non-trivial even after agents make vulnerabilities easier to find.

But mostly we disagreed about how tooling-dependent AI agents will be. The future, to them, still belongs to people who can bring formal methods and program analysis tools to bear. If that turns out to be true, Thomas will be happy to have been a little bit wrong. Those kinds of tools are the exciting part of security research. But either way, they both agree that something disruptive, and not in the VC lingo sense of the term, is coming. Another friend who does security policy work is confident that cybersecurity will stay statutorily safe. They point to the failures of things like California's SB 1047 and national executive policy that recognizes dual-use benefits of AI security research.

Not entirely sure how I ended up on the more cynical side of the legislative politics than this person, but I'm keeping my money on "the politics of this will end up stupid." Some people are already seeing sharp upticks in validated vulnerability reports. Others are already running their own simple agent loops across all targets and chuckling as handfuls of sev-highs that they had missed before pop out. God. The smartest vulnerability researchers that Thomas knows are calling out the strength of this prediction. They agree that agents will generate working zero-days in, well, everything. But to them, it's merely a product of settled science.

Variations on well-documented themes. Lots of technique isn't documented at all. It remains to be seen if LLM agents will be able to recapitulate any of it. That leaves room for human vulnerability research at the very highest end of the spectrum of sophistication. As someone who dearly loves the craft of tricking computer programs into doing unexpected stuff, and loves to read people explaining how they worked out making those tricks happen, that thought is at least reassuring. But most exploit development isn't new science. Deep insight is sometimes a factor, but also, who are your friends? That matters just as much.

But so do determination, luck, incentive, basic programming and debugging skills, and convergence with the literature. He spent 15 years getting paid to find and exploit vulnerabilities as a full-time job, and the most impactful findings tend to be the boring ones. Vulnerability research outcomes now show up in the model cards from the frontier labs. These companies have so much money to spend on the work that they're changing the shape of the national economy. So, I think we're living in the last fleeting moments where there's any uncertainty that AI agents will supplant most human vulnerability research. Enjoy it, if that's your thing, while you can.

It's not going to last. Well, I was already a doomer before this one, but god damn. This is from the guy who got me to take AI coding more seriously. And if he is raising the alarm bells this loud and I was already kind of paranoid, [ __ ] I got nothing else. I'm going to go hug my parents. Until next time, peace nerds.
