LIVE: Watch me build a brand-new project from scratch

Chapters (7)

The host outlines the goals and constraints for a new project, emphasizing daily usefulness, a frontend and backend, meaningful complexity, and a focus on AI coding tasks.

A rare, candid live session where Matt Pocock brainstorms a brand-new coding-agent observability platform and maps it out, from idea to research docs and architecture.

Summary

Matt Pocock spends a long-form stream wrestling with a single question: what should his next project be? He tosses out crowd-sourced ideas, weighs constraints, and leans into a playground project that blends frontend and backend work with AI coding. The big pivot comes when he homes in on a coding-agent observability platform, aiming to measure tokens, session success, models used, and context windows across an organization. He riffs on tools and agents like Claude Code, Codex, Pi, OpenCode, and Copilot CLI, and experiments with Rust vs TypeScript for the backend. The stream is a living notebook: he drafts ADRs, sketches domain-driven design, creates research documents, and even experiments with naming (ultimately landing on “Slopwatch” as a working project label). He uses Whisper Flow for transcription, hosts polls, and navigates a sea of implementation choices, from per-session sidecars to a pluggable ingestion strategy and self-hosted on-prem architecture. The session also doubles as a meta-workout in making decisions quickly: defining ubiquitous language, DAG-based session turns, and the relationships between listeners, backends, and admin dashboards. By the end, Matt commits to a two-pronged approach: accumulate research into a repo (repos/slopwatch) and pursue a concrete, self-hosted stack with clear deployable units, all while preserving a DX-focused, transparent, “watch me work” style for viewers. It’s a blueprint for turning blue-sky ideas into content-worthy, buildable projects.

Key Takeaways

- Define a focused playground project to anchor long-form content and skill development (coding-agent observability is the chosen playground).

- Capture early research in Markdown ADRs and domain modeling to constrain design and reduce rework later.

- Expect heterogeneous agent surfaces (Claude Code, Codex, Pi, OpenCode, Copilot CLI) and plan adapters per agent instead of a single universal hook.

- Choose a self-hosted, on-prem architecture with a pluggable ingestion path, local listener, and a Postgres-backed backend for DX and data ownership.

- Adopt a domain-driven design approach with a shared ubiquitous language (sessions, turns, DAGs, parent IDs) to reduce ambiguity and misalignment.

- Prioritize a two-piece deployment: a local listener/collector for data capture and a backend server for storage and dashboards, avoiding cloud lock-in.

- Iterate on naming and identity management early (e.g., “Slopwatch”) while evaluating authentication flows (admin-created tokens vs full IDP) for speed and practicality.
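The "sessions, turns, DAGs, parent IDs" vocabulary in the takeaways above can be made concrete with a small sketch. Everything here is an assumption for illustration: the `Turn` shape, its field names, and the helper `pathToRoot` are hypothetical and not taken from the stream. It only shows how a parent ID per turn lets a session branch (for example after a rewind) while still recovering one linear path.

```typescript
// Hypothetical shape: field names are illustrative, not from the stream.
interface Turn {
  id: string;
  parentId: string | null; // null marks the root turn of a session
  role: "user" | "assistant" | "tool";
}

// Walk from a leaf turn back to the root to recover one linear conversation path.
function pathToRoot(turns: Turn[], leafId: string): string[] {
  const byId = new Map<string, Turn>();
  for (const turn of turns) byId.set(turn.id, turn);

  const path: string[] = [];
  const seen = new Set<string>(); // guards against accidental cycles in bad data
  let current = byId.get(leafId);
  while (current !== undefined && !seen.has(current.id)) {
    seen.add(current.id);
    path.unshift(current.id);
    current = current.parentId === null ? undefined : byId.get(current.parentId);
  }
  return path;
}

// Count children per parent so branch points are easy to spot.
function branchPoints(turns: Turn[]): string[] {
  const counts = new Map<string, number>();
  for (const turn of turns) {
    if (turn.parentId !== null) {
      counts.set(turn.parentId, (counts.get(turn.parentId) ?? 0) + 1);
    }
  }
  return [...counts.entries()].filter(([, n]) => n > 1).map(([id]) => id);
}

const exampleTurns: Turn[] = [
  { id: "t1", parentId: null, role: "user" },
  { id: "t2", parentId: "t1", role: "assistant" },
  { id: "t3", parentId: "t2", role: "user" },
  { id: "t4", parentId: "t2", role: "user" }, // a second branch from t2
];

console.log(pathToRoot(exampleTurns, "t4"));
console.log(branchPoints(exampleTurns));
```

Storing only a parent pointer keeps each turn append-only, which fits logs that are written incrementally.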

Who Is This For?

Software engineers and tech leaders who want to see a real, iterative process for turning a free-form livestream idea into a concrete, self-hosted product. Ideal for viewers curious about AI tooling, observability, and domain-driven design in practice.

Notable Quotes

"This is a very very rare live stream for me."

—Matt sets the scene for an unusually candid, long-form live session.

"Should I build a coding agent observability platform?"

—The pivot moment where the project focus crystallizes around observability for coding agents.

"Hooks alone are insufficient for most agents."

—Claude-code-level insight into why the design needs adapters per agent.

"We're building Slopwatch."

—A working project name emerges as a focal point for the stream.

"Architecture decisions... domain modeling."

—The stream doubles as a live domain-modeling session, locking in terminology and structure.

Questions This Video Answers

- How do you design a self-hosted coding-agent observability platform from scratch?

- What are the trade-offs between using hooks vs JSONL ingestion for coding agents?

- What is a practical domain-driven design approach for AI-assisted development projects?

- What stack choices work well for a local-first observability tool (TypeScript vs Rust)?

Coding Agent Observability Platform, Claude Code, Codex, Pi, OpenCode, Copilot CLI, Domain-Driven Design, ADR, Sand Castle, Slopwatch

Full Transcript

How we doing? This is a very very rare live stream for me. Um, but I have a kind of gap today before sort of figuring out my next course on Monday. And I've been reading a lot about DDD, domain-driven design. I've been thinking about kind of retooling my skills. And also I've been thinking about this. I want to do more kind of watch-me-work long-form content and I want a project idea that I can basically use as my playground for this kind of content. Going back to the voyeur of the Matt. Are you the voyeur of the Matt, Colin?

Hello. Hello, Mark. Um, so what I want from you guys is project ideas because the to-do app could do. I I love a to-do app. Who doesn't love a to-do app customized for your needs? You know, could make it work. There's some good ones here. These are my constraints. I want it to be useful in my everyday work. I want it to have some kind of front end and backend pieces. And I want it to have a decent amount of complexity. I also want it to be ideally something that's useful to the viewers as well.

So something AI coding related I think would be cool. Maybe like a coding agent observability platform. Interesting. The Horde is here. Yeah, we're we're vibe coding. Absolutely. That's what we're doing. We're vibe coding. Feel like we need like a definition of vibe coding. You know what I mean? See me craft my skills. Absolutely. Use Nx. Yeah, I mean I'm not averse to using Nx. Give my opinion about Claude. Um, I have quite a complicated opinion about Claude given I just taught a course on it. E-commerce? Yeah, maybe. Maybe. I don't know. It's not very useful for my people though, for like my squad, for you guys.

A complete AI coding company. A live stream chat manager. I mean, I stream so rarely. An app that defines vibe coding. My own simplified OpenClaw. See, I've never... This is gonna kind of, I don't know, denigrate me in your eyes maybe, but I've never used OpenClaw. I've never tried OpenClaw. Never really felt the need to actually. But some kind of my own platform, something that's useful for you guys would be super cool. Also, I don't want to get in trouble with Anthropic, right? You know, that's a cheap joke.

a codebase skills, rules, adherence, observability. Maybe I think we'd sort of reach that by actually building the thing itself. Everite v2 I wanted to be from scratch. I wanted to be from nothing. Greenfield coding agent skills prompt eval system. Leave it for various tasks. Canon AI coding canon board kind of thing. See what actually people said here. What do people actually say? What's this? Using relatively newer third party libraries agents not using library specific skills during implementation. Yeah, maybe editing system. H I don't know. I mean, this is terrible, but I just think my own my own idea is the best is the best idea.

I use VS Code as my code editor. GitHub alternative. Maybe, maybe. It's kind of like you get in the weeds of git stuff really quickly. Form wizard. I don't know. I mean, these are good like interview-level tasks, you know. You can imagine people doing these at interview and them being impressive demos. Bookmarks manager. Yeah. Obsidian vault integration. I want it to be pretty complex, pretty complicated. I don't know. This is the one though. I mean, this is the one I'm feeling, because I think this is something that I noticed that teams need all the time. I'm thinking about like if you're running coding agents in your organization, you want to see how many tokens people are spending.

First of all, you want to see if they're having successful sessions or not. You want to see if those sessions are um you know, productive or not. And how many tokens they're using, how much context window they're using up, what models people are using, all that stuff. Monorepo. Yeah, probably could be a monorepo if it gets big enough. An OSS SDK that can be used independently from the platform. Absolutely, that's what I'm thinking is that you would have a version of it that was deployed and then a version of it that was local.
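As a rough illustration of the metrics listed here (tokens, session success, models, context window usage per person), the following sketch rolls session summaries up per user. The `SessionSummary` record and all of its field names are assumptions made for this example, and the model names are placeholders; the stream does not define a schema at this point.

```typescript
// Hypothetical per-session record; every field name here is an assumption.
interface SessionSummary {
  userId: string;
  model: string;
  totalTokens: number;
  peakContextTokens: number;
  successful: boolean;
}

interface UserRollup {
  sessions: number;
  successfulSessions: number;
  totalTokens: number;
  maxContextTokens: number;
  models: Set<string>;
}

// Aggregate session summaries into one rollup per user.
function rollupByUser(sessions: SessionSummary[]): Map<string, UserRollup> {
  const rollups = new Map<string, UserRollup>();
  for (const session of sessions) {
    const rollup: UserRollup = rollups.get(session.userId) ?? {
      sessions: 0,
      successfulSessions: 0,
      totalTokens: 0,
      maxContextTokens: 0,
      models: new Set<string>(),
    };
    rollup.sessions += 1;
    if (session.successful) rollup.successfulSessions += 1;
    rollup.totalTokens += session.totalTokens;
    rollup.maxContextTokens = Math.max(rollup.maxContextTokens, session.peakContextTokens);
    rollup.models.add(session.model);
    rollups.set(session.userId, rollup);
  }
  return rollups;
}

// Placeholder data: "model-a" and "model-b" are not real model identifiers.
const sampleSessions: SessionSummary[] = [
  { userId: "alice", model: "model-a", totalTokens: 12000, peakContextTokens: 40000, successful: true },
  { userId: "alice", model: "model-b", totalTokens: 8000, peakContextTokens: 65000, successful: false },
  { userId: "bob", model: "model-a", totalTokens: 3000, peakContextTokens: 9000, successful: true },
];

const rollups = rollupByUser(sampleSessions);
console.log(rollups.get("alice"));
```

What counts as a "successful" session is left as a plain boolean here; in practice that would likely come from human rating or some heuristic, which the stream discusses later as feedback on sessions.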

How many tokens am I expecting to burn here? Uh, I don't know. I'm on um uh Anthropic 20X Max. Yeah, I mean that's what I'm thinking. Ralph needs a dashboard. How do I recommend using my skills along with relatively newer third-party libraries? Um, if you're having trouble with those third-party libraries, I would create a skill for that third-party library, um, basically create documentation for that skill that sort of tells the coding agent how to use it, and then you can pull it in during the review phase.

This is the kind of stuff that will just be really like this is I think why you guys want to watch me is so that you can pick up because I feel like actually I'm sort of I don't know I feel like I'm just based on the conversations and the questions people ask I'm sort of quite I don't know far ahead is not the right way of framing it I think but I have very clearly very clear opinions about all this stuff. I don't know if they're good opinions but I have very clear and definite opinions.

Vel workflows. Isn't it a coding agent observability platform? I don't think so. Yeah, that's what I'm thinking. Like you need somewhere to gather the data for your organization for a coding agent observability platform. I'm sort of talking myself into this now. You needed like a central place for all this data. And I feel like you might want that to be a kind of pluggable storage mechanism as well. You know, you might want to store it in your own servers. There's sort of there's a lot of complexity there which I like. I've gone deeper. Um I use Opus most of the time.

Yeah. Um yeah, most of the time. I'm really testing um 4.7 now. Yeah, I think maybe maybe I should just do it coding agent observability platform because yeah, I feel that's the missing link in the way I'm teaching as well because I'm I'm not observing my own sessions in the same way. But I should I think a bios I'm not building a bios. Hell no. For a new project, why not build like Cody Kent agent? I'm going to leave Kent's, you know, I I can't just copy Kent all the time. My whole career is basically copying Kent.

It's just doing the Kent playbook, but worse than Kent. Haven't moved past agent in the IDE. Can barely control that, let alone a swarm of agents yet. I mean, oh, I just want to dive in. You know what I mean? What do we think? I mean, is there a way I can add votes here? Can I add votes? I think I can. Feel like I can. I feel like there's a way of doing it. Oh, yes. Hang on. Start a poll. Uh, should I build a coding agent observability platform? Just did a bit of dictation.

I'm just going to say yes or no. Start poll. I've never done this before. I have no idea if this is going to be any good. Thanks forever. Oh, sweet. Thank you. I haven't been working on it as much as I should. Um, yeah, we need to do a Slido. I don't know. Can you see that poll? I'm just going to check if actually I can see that poll. Uh, go over here for a sec. Is there one there? I don't know. Can you guys see a poll? Oh, yes. Okay. Yes.

Okay. 78% for Yes. That's pretty good. Hide my Discord DMs. T3 Code. Do I have any alternatives? I suppose all I've given you really is um one idea. I haven't sort of developed it yet. You can see it. Good. Claude's moderator. Woof. I know. When we get big enough to need mods, then we'll get there. Um, am I into piano? Yes, I am into piano. I used to be a singing teacher. Weird question. You saw the poll before you saw the video? Really? Interesting. Yeah. I mean, because there's AI observability like for uh AI in applications, right?

But then I feel like there's a layer missing which is observability for um your own coding agents. I don't know, maybe we should probably validate this, right? Let's actually open up a terminal. Uh and let's just ask Claude about this. So I'm just going to run Claude in my home directory. Yes, I trust this folder. Claude, I've got an idea for an application I want to build. It's an observability platform and it's an observability platform that essentially is personal. So, it's something that you run yourself targeting um Claude Code or whatever coding agent you're using.

I imagine what we're really trying to do is just upload the session to somewhere shared and then we can do um let's have a think. I'm using Whisper Flow by the way as my transcription tool. metrics and analysis and maybe human feedback, rating different sessions to see how they're doing and have this done per user so we can see across our organization what's going on. And I'm going to use um let's use just a standard grill me for this. This is one of my skills. I want you to harden this into a decent idea that I can potentially build.

The stack will be TypeScript. Uh, you can't talk to it. I mean, this is just me using Whisper Flow basically. Yeah, this is not me replacing Claude. It's just adding a layer of observability on top of it. Grill Me is great. Grill Me is so good. So, it's loading the skill. It's okay. Here we go. Geez, boy, that's a lot of text. Okay, this is a good question though. Who's the primary user? What decision does this tool help them make? There's a big fork here. Individual developer, engineering manager or team lead, or platform/DevX at a company?

That's good. What's our agent ROI? What prompts, tools, MCPs are worth the spend? Where are we bleeding tokens, costs, and adoption analytics? Ha, these sound compatible, but they're not. They dictate completely different UX. So good. A is a personal timeline with a deep dive in one session. Correct. B is cohort dashboards and comparisons which creates surveillance anxiety. C is aggregate metrics and doesn't care about individuals. Yeah. So I suppose our what's our ideal user here? I have tried caveman. Yeah. Um I mean I haven't tried it properly. Maybe I should try caveman. I do have a caveman skill available that's sort of my version of caveman.

Whisper Flow is fine for me. You think one, individual developer? I mean, uh, let's see. A first, with C-style aggregates as a natural rollup. See, B though, I think you do want groupings of individual developers. Right. We think one, or A. Are we choosing A? Yes. I think you do want a manager to be able to open and review a specific engineer's session. I imagine that the way these are going to work in teams is that someone on the team is going to be responsible for making the AI better on that team and is going to be the DRI for that.

So you need the ability to dive into someone's session as well as to debug that session with them. There's also the potential for actually doing this live. So if someone's having a problem in their session right now, the engineering manager can view that and see what's going on with it. I really like this idea. I'm really talking myself into it. So I'm going to end the poll. You know, by the way, I'm not really answering the question. I'm sort of it's prompting me to think of things that I'm saying. That's something really important with Grill Me.

No, I can't do a poll for each one of these. It's going to take forever. Okay. Consent and visibility model: is session sharing opt-in per session, always on by org policy, or developer-controlled with redaction? Oh, how good are these questions, man? How good are these questions? Coding sessions often contain secrets, half-formed thoughts. Yeah. So, PII, personally identifiable information, is going to be really important here. Um, "manager can watch live" without a clear consent model will either get the tool banned by legal or security. Absolutely right. Or make developers self-censor and route real work elsewhere, killing the data quality you need. Brilliant. So, always on and mandated, like corporate endpoint monitoring: simple but hostile. Um, per-session opt-in. Yeah, so you share this session. I mean, this is bad. You don't want per-session opt-in because you want stats on what the devs are doing. When the DRI opens someone's session, does the developer get notified?

I don't think so. I think that's gross. I don't think so. I think what you've got to realize is that your sessions are public to the organization. I think the developer when they're plugged into the system, it should be kind of tacitly understood that their coding sessions are public within the organization. Having that data is incredibly valuable to companies and so it's important that it's uh visible to all stakeholders. We're grilling on the idea of an observability platform um for agents. That's what we're up to. Um I have sort of tried Pi. I mean I haven't really tried Pi.

I need to try more. Where am I going here? I think, oh, my brain's gone. Um, so I think we need to be less concerned about the privacy of the individual developer and more about the importance of the data that we're getting. I also think that there's we need to support on premises data so that the data never leaves the organization, never leaves their servers because I'm not interested in building this into a massive company. I want this to be an open source tool that is uh useful everywhere. Something I noticed people do with these grilling sessions is they just answer like A, B, or C, right?

And when we're in the kind of blue-sky phase here, when we're really not sure um how we're supposed to be working, or what we're even building, it's not good to be railroaded that much. Okay, here we go. Q3. How do sessions get into the system? What's the ingestion mechanism? This decides the entire shape of the client, and it's the technical spine. Ah, I love the way it chooses really nice words like this. Technical spine here. Um, okay. Yeah. So, this is important, but it's sort of like a um implementation detail. Tail the JSONL.

Yeah. So, my initial thought here was hooks. You've got Claude Code hooks: PostToolUse, Stop, SessionEnd hooks. Or you've got something like this where it creates a bunch of transcripts on disk. Um, you've got a little daemon that watches the directory, uploads deltas, um, sort of works with Cursor, Codex, Aider. That's a random one. No hook config required. Survives across agent restarts. Yes. Um, so now this is tricky because I don't want to commit to something super early before we understand the trade-offs. Um, so I think I need to get it to do some research here.
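The "watch the directory, upload deltas" option can be sketched as a pure function that, given a transcript's current contents and the offset already processed, returns only the newly completed JSONL lines. This is a minimal sketch under stated assumptions: the function name `readDelta` is hypothetical, offsets are counted in string characters for simplicity (a real daemon would more likely track byte offsets on the file), and file watching and uploading are left out entirely.

```typescript
interface Delta {
  events: unknown[];   // parsed JSON values from newly completed lines
  nextOffset: number;  // where to resume on the next read
}

// Parse only complete lines that appeared after `offset`.
// A trailing partial line (no newline yet) is left for the next call.
function readDelta(content: string, offset: number): Delta {
  const lastNewline = content.lastIndexOf("\n");
  if (lastNewline < offset) {
    return { events: [], nextOffset: offset }; // nothing new has been completed
  }
  const chunk = content.slice(offset, lastNewline);
  const events: unknown[] = [];
  for (const line of chunk.split("\n")) {
    if (line.trim() === "") continue;
    try {
      events.push(JSON.parse(line));
    } catch {
      // Skip malformed lines rather than stalling the whole stream.
    }
  }
  return { events, nextOffset: lastNewline + 1 };
}

// First read: two complete lines plus a half-written third.
const firstSnapshot = '{"a":1}\n{"b":2}\n{"c"';
const first = readDelta(firstSnapshot, 0);

// Later read: the third line has been completed by the agent.
const secondSnapshot = '{"a":1}\n{"b":2}\n{"c":3}\n';
const second = readDelta(secondSnapshot, first.nextOffset);

console.log(first.events.length, second.events.length);
```

Persisting `nextOffset` per file is what would let such a daemon survive agent restarts without re-uploading old lines.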

I'm getting high on Claude's poetry. Absolutely. I mean, wherever good ideas come from, you got to give them credit. I think I want you to do some research here. I want something that's coding agent agnostic that at least works with the top coding agents. I'm going to define the top coding agents as Claude Code, Codex, Pi, OpenCode, and GitHub Copilot CLI. So if we have hooks there, I want you to go and investigate each of those with different subagents to ping me back some information on what they support and whether they support OpenTelemetry or whether we would need some kind of proxy over them to grab all of the right information.

I think it's inevitable that they will emit uh different schemas and different shapes and we might just need to handle that inside the application. For instance, the shapes that Pi emitted changed very very recently in a patch version. So whatever we do, we're going to be kind of on the hook for these systems. There we go. That was a big one. Whisper Flow does a really good job of like, um, making the transcription nicer. And notice that I specifically call out subagents because I noticed a um... oh, "not sure which agent you mean."

Pi is the agent. I mean ulite. So it's kicking off four in parallel. It's kicking off a Claude Code guide agent, which is nice. That's a kind of built-in agent that understands how to teach Claude Code. Then it's doing one for OpenAI Codex CLI observability, OpenCode observability, GitHub Copilot CLI observability. So yeah, there's probably going to be a ton of permission requests here. We're on Claude Code. We're on 4.7. Yeah, exactly. Colin, you can just add your own JSONL files. Yeah, grilling. I mean, grilling sessions are amazing. So, if you got any questions, ask me them now because I'm probably just going to be um answering permissions requests for a few minutes.

Can't wait for the day when Claude starts to learn how to code from a Claude code course. Well, I mean, skills are basically that, you know. H I mean the lites are often right. That's what makes them uh historically notable. Will you ever return to talk about pure software principles that is not in the rounds of AI topics? I I'm really loving this new era because I get to talk about software principles uh while dressing them up as AI gossip. I'm not on bypass permissions mode because I'm not running inside a sandbox. I could run this inside a sandbox.

Um, but I'm just not at the moment. Have I tried auto mode? I don't think I have access to auto mode yet. Do I think it would be beneficial to have Codex go over my Claude plan? No. I think people um over-review their plans and I think this is a classic mistake in web development. We got Bristol. What's up? This is like people go over and over the specs that they're going to create when what they need to be doing is getting to code. Um, Cloudflare is releasing some amazing features. Am I planning or interested to cover them?

Don't know, maybe. Can I touch on my AFK implementation method? Yeah, I've really made some big updates to that recently, which is I have, uh (wind is blowing outside), a repo here called Sand Castle. And Sand Castle is a coding agent orchestrator. And this is incredible because it allows you to run some agent inside some sandbox. So, it allows you to uh really do amazing things with your setup. It is really really cool. Yeah. And um when we actually get to build this then I will be using Sand Castle for it because it's incredible.

Uh yeah, don't worry about raw GitHub user content. We got a lot of folks in this stream. Hey, 250 of you. I'm your inspiration. Oh, thank you. So, it's done all of its fetching it seems about copilot CLI observability just added an issue requesting Docker SPX as a provider. Haha, interesting. Let's just quickly check that out. MicroVMs. What were some challenges when creating Sand Castle? Don't know. usual software development challenges really. sort of figure out the language, figuring out the API, figuring out what we're supposed to do. Yeah, this is it. This is um this is what we're building.

Claude Code observability. Um, or coding agent observability. Let's see what it says. It's been going for about four minutes now. Use Sand Castle to automate. The app kind of works, but it's not structured at all, becoming tough to change things. Um, if you end up with an application that's really difficult to change, um, there's a really nice definition of complexity in an application, which is: complex apps are hard to change. Uh, simple apps are easy to change. The way you turn a complex app into a simple app is you run this improve codebase architecture skill, or at least this will give you opportunities for doing it.

This uh explores a codebase like an AI would, to surface architectural friction, discover opportunities for improving testability, and propose module-deepening refactors as GitHub issues. So yeah, it's difficult to turn a crap codebase into a good codebase, but this will set you on the right road. Could you please recommend a technology stack for projects so I can create bots, backends, and my SaaS projects? TypeScript. Just use TypeScript. TypeScript with Node is brilliant. It's what I've built my entire career on. So, okay, here we go. Here we go. All five agents have a similar shape thankfully. So they have a hook surface here.

Okay. So, Claude Code has some sort of hook surface. Um, Pi has a hook surface too. OpenCode has a plug-in system. Copilot CLI has some hooks, and every agent gives you an event hook and an append-only JSONL on disk. Interesting. Schemas all differ and all evolve. There's no single "just use OTel" answer. Copilot and Pi have no useful OTel at the CLI level. The proxy path is blocked for Copilot. Okay, interesting. A per-agent adapter is unavoidable. Yeah, that's what I thought. Each adapter uses that agent's best surface, hooks for live events, JSONL tail as backstop, and normalizes into your internal event schema.
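The "per-agent adapter that normalizes into an internal event schema" idea might look roughly like the sketch below. It is illustrative only: `NormalizedEvent`, `AgentAdapter`, and the two placeholder agents are hypothetical, and the raw payload shapes are deliberately invented. They are not the real formats of Claude Code, Codex, Pi, OpenCode, or Copilot CLI.

```typescript
// Hypothetical internal schema that every adapter maps into.
interface NormalizedEvent {
  agent: string;
  sessionId: string;
  kind: "message" | "tool_call";
  text: string;
}

interface AgentAdapter {
  agent: string;
  // Returns null when the raw payload is not something this adapter understands.
  normalize(raw: unknown): NormalizedEvent | null;
}

function isRecord(value: unknown): value is Record<string, unknown> {
  return typeof value === "object" && value !== null;
}

// "agent-a" is a placeholder with an INVENTED payload: { session, content }.
const agentA: AgentAdapter = {
  agent: "agent-a",
  normalize(raw) {
    if (!isRecord(raw)) return null;
    const session = raw["session"];
    const content = raw["content"];
    if (typeof session !== "string" || typeof content !== "string") return null;
    return { agent: "agent-a", sessionId: session, kind: "message", text: content };
  },
};

// "agent-b" is a placeholder with a different INVENTED payload: { sid, tool: { name } }.
const agentB: AgentAdapter = {
  agent: "agent-b",
  normalize(raw) {
    if (!isRecord(raw)) return null;
    const sid = raw["sid"];
    const tool = raw["tool"];
    if (typeof sid !== "string" || !isRecord(tool)) return null;
    const name = tool["name"];
    if (typeof name !== "string") return null;
    return { agent: "agent-b", sessionId: sid, kind: "tool_call", text: name };
  },
};

const adapters = new Map<string, AgentAdapter>([
  [agentA.agent, agentA],
  [agentB.agent, agentB],
]);

// Look up the adapter for an agent and normalize one raw payload.
function normalizeWith(agent: string, raw: unknown): NormalizedEvent | null {
  const adapter = adapters.get(agent);
  return adapter === undefined ? null : adapter.normalize(raw);
}

console.log(normalizeWith("agent-a", { session: "s1", content: "hello" }));
console.log(normalizeWith("agent-b", { sid: "s2", tool: { name: "read_file" } }));
```

Because each adapter validates its own input and returns `null` on anything unexpected, a schema change in one agent (like the patch-version change mentioned for Pi) would be contained to that adapter.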

Yeah, one local daemon loads the right adapter. Uh, does it resolve Q3? Chill out, mate. Chill out. AI is always so aggressive in like trying to create consensus, you know. Yeah. So, I'm not going to answer Q4 yet because we haven't resolved Q3. Go back to Q3 for me. I still haven't decided yet about whether we do a hooks-based system or whether we track the JSONL locally. Happy to catch me live. Happy to be live. 279 of you in the chat. It's huge. This is big for my streams. I mean, I almost never stream.

So, yeah. I haven't decided about a hooks-based system or whether we track the JSONL locally. I guess, I mean, this is just something that we could experiment with in implementation. It's kind of an implementation detail. It sounds like either option is viable. Need your opinion about models: they miss the edge cases in the product you're making. We always have to write it manually. Can these be solved by making a skill or something that gives you edge cases? I mean, catching edge cases is hard. Like, it's a hard thing. You have to um accept that you're not going to catch all edge cases when you're planning.

So, you have to build in the idea that you're going to QA your application. You're going to make sure that nothing got missed. You know, it's just hard thinking about all the edge cases ahead of time and it's largely impossible. Okay, here we go. Let me lay this out properly now that the research is in. The research shifted my thinking. It's not really a binary choice. Hooks alone are insufficient for most agents. Claude Code hook payloads don't include message content, so you still read the JSONL. Interesting. Copilot hook payloads are thin. Codex hooks are flag-gated and Windows-excluded.

Ha, I'm on Windows. I'm on Windows. JSONL alone is efficient and sufficient for some. Right. Right. Right. Right. Right. So what we need to do is change the approach per agent. That makes sense. That make sense? Yeah. No, I dig it. So we are trying to investigate um building a coding agent observability platform. That's the plan. Uh, I switched to Windows so that I could expense my graphics card to the business. What's my opinion of people saying that UI front end is the differentiator in the AI era? I don't know. I'm not a pundit. I'm just a teacher.
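"Change the approach per agent" could be expressed as a small capability table plus a planner that picks ingestion sources. The entries below only encode what was read out on stream (Claude Code hook payloads lack message content, Copilot hook payloads are thin, Codex hooks are flag-gated and excluded on Windows) and should be treated as unverified notes, not documentation. The type names and the `planSources` function are hypothetical.

```typescript
type Source = "hooks" | "jsonl";
type Platform = "linux" | "darwin" | "win32";

interface AgentCapabilities {
  // undefined means "not established in the research"; treated conservatively.
  hookPayloadsIncludeContent?: boolean;
  hooksUnsupportedOn: Platform[];
}

// Unverified notes transcribed from the stream, not from official docs.
const capabilities = new Map<string, AgentCapabilities>([
  ["claude-code", { hookPayloadsIncludeContent: false, hooksUnsupportedOn: [] }],
  ["copilot-cli", { hookPayloadsIncludeContent: false, hooksUnsupportedOn: [] }],
  ["codex", { hooksUnsupportedOn: ["win32"] }],
]);

// Hooks give live events where available; JSONL fills in whatever hooks lack.
function planSources(agent: string, platform: Platform): Source[] {
  const caps = capabilities.get(agent);
  if (caps === undefined) {
    return ["jsonl"]; // assumed fallback: the append-only transcript on disk
  }
  const sources: Source[] = [];
  const hooksAvailable = !caps.hooksUnsupportedOn.includes(platform);
  if (hooksAvailable) sources.push("hooks");
  if (!hooksAvailable || caps.hookPayloadsIncludeContent !== true) {
    sources.push("jsonl");
  }
  return sources;
}

console.log(planSources("claude-code", "linux"));
console.log(planSources("codex", "win32"));
```

Keeping this as data rather than branching logic means a change in one agent's hook support would only touch one table entry.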

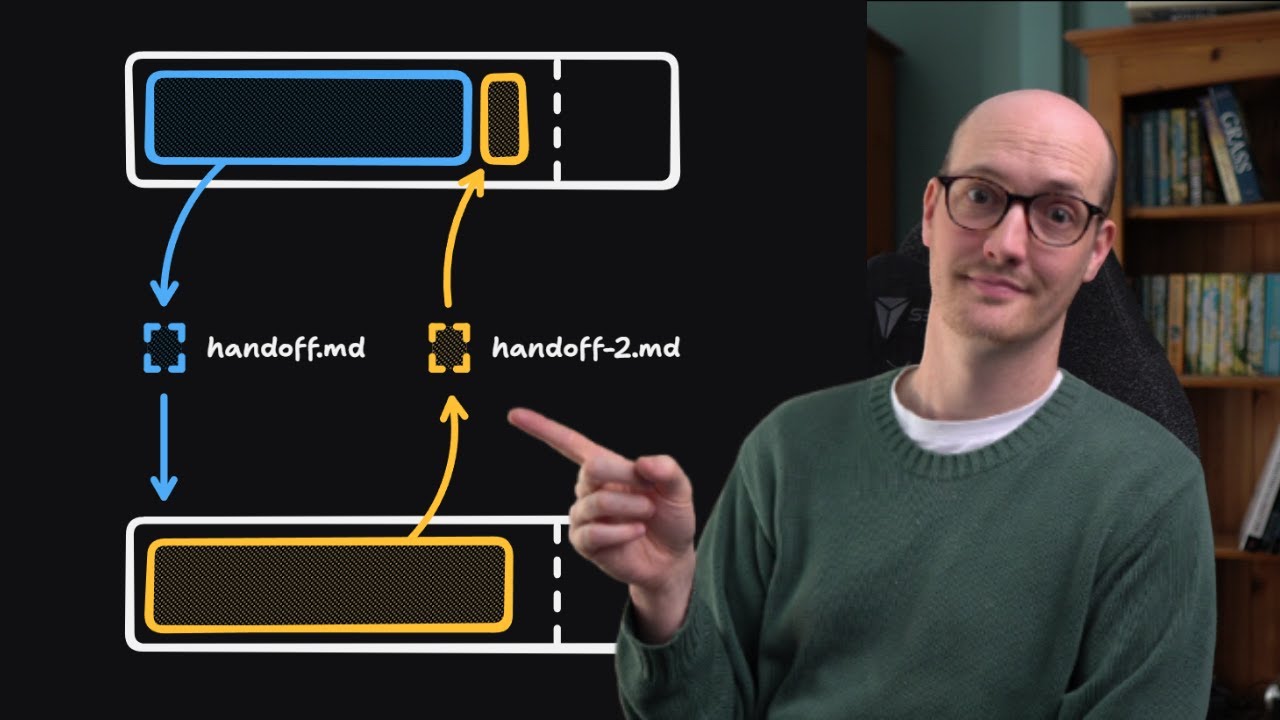

Um, okay. So, at this point, I'm thinking, right, this context window is now really good, right? We've got a really cool context window. It's full of um interesting information. How do we preserve this going forward? Because I'm not in a directory here. Um, we've like invested uh 42 or 45.2K tokens here into researching different coding agents, into figuring out some early decisions, and I want to save this somewhere, and the best place for that is to actually kick off a repo, I think. Should have gone with macOS and a remote Linux/Windows system for the GPU.

Yeah, that's a good idea. That's a good idea. Uh so I need I want to save this somewhere. I want to save it in a markdown file. But I feel like at this point, you know, we've um sort of figured out the first few bits of the project. Have you used speech to text in Windows 11? Yeah. Uh it's not great. The native one is not great. I don't love it. Uh I use Whisper Flow. Yeah. We got to create a research document. Um, yeah, let's do it. Okay. I love the things that we found here.

Um, I want you to create a new repo for this. This new repo is going to live inside repos/ai... What should we call this? Okay. Yeah, let's figure out a name with Opus. What name should I give to this project? I want a just quick placeholder name I can put down to differentiate it from my other projects. Opus is really good at choosing names for stuff. Um, it chose the name Sand Castle, which I really like. I think that's a clever one. Agent scope. Okay, guys. I need your help. This is not good. Agent scope.

No, I don't like that. Loupe, a jeweler's magnifier to inspect sessions. Or Peek. I don't like Peek. Peek is sort of, you know, like you're peeking in the park. Dirty little peeker. Agents watchtower. I barely use, by the way. I suppose it is a good by the way, but it just feels so underpowered that I just never use it. We're building an agents... Uh, in fact, we can recap, can't we? Look, that's actually really good for streams. There you go. I don't need to talk now.

I could just... Agent master. Telescope. Telescope. Agent guard. Peeping Tommy. See cam. Harry Potter inflected. Peek. Peek. I kind of want it like metrics based, you know. Panopticon. Sauron. Barad-dûr. Palantír. Palantir is taken. Mother agent. Vantage. Isn't Vantage a... Yeah, Vantage? No. What am I thinking of? This is tough. Give me more ideas. I want them to be kind of metrics based. Token scope. Hm. This is an accidental beard. It's not an intentional beard. ACQ. Oh, here we go. Tally, Yardstick, Ledger, Rubric, Quant, Readout, Argos, Agents, Agent Reef. I mean, all of these ideas are taken, right? Surely. I don't think I can do full beard. Full beard for me is like a sort of a row of dead hamsters all just sort of gathered along. They don't join up, you know. Rubric. Quick readout.

Quant. God, it's all just rubbish, isn't it? Caliper. Agent index. Quant. Yardstick. Sort of feels British, you know. I know that. Burning a lot of tokens on it. I know. Yardstick. Yeah, we're looking for a temporary name, right? Let's just call it Yardstick. Token site agency. Um, something in Latin. We need a motto. That's what we need. We need a uh, what you call it? A coat of arms. Baseline. Where my token go? Where do token go? Where do token come from? I mean, Yardstick seems like it's got a bit of uh metrics.

Let's just go with yard stick. Okay, I want to save all of the research that we've done apart from the name stuff in or in fact, you know what? Let's um let's take Claude out of this. Let's actually go back and resume to um just here so that we kill all of that context. Basically, we just sort of clip off that bit of context. So now we're just right at the end of the research phase because that little sort of diversion is not something I sort of want to go back to. So it's almost like we've gone back in time.

Now we don't have to keep that in our context. So, we figured out yard stick dilly. That's good. Dilly tally. Crow's nest. That's nice. I mean, how many observability companies that Let's just look for the uh Crows Nest observability company. Crows Nest software. There we go. Monitor key business metrics. Uh Yardstick observability company. Yardstick Trust reimagined a technology platform that makes human credibility into something you can continuously see. Yes. Build your dream team with Yardstick's AI powered interview tools. I mean, every one of these people just asked Opus, right? Uh, I cut the context by uh just doing resume basically.

So, I went back to a previous conversation. My next call for real engineers is happening. Um, I don't know. I'm going to work it out on Monday, but probably miday, I think. Token autopsy. Let's just go with yard stick. So, we've produced some really good stuff inside this context window, and I want to take it all and put it inside a research document. I want you to create a new repo for me here. Slopwatch. Slopwatch is really good. That's really good. Slopwatch. Slopwatch is a is a winner. Sorry. That has just pipped yard stick to the post.

That is incredible. So, it's going to be inside repos AI slopwatch. That's genius. Wow. Eddie. So everyone just go and follow Eddie for that one. Where's Eddie? Eddie [ __ ] There you are, pal. Is my guy Eddie? Go and follow Eddie. A funny, funny man. Was it you? Yes. Slopwatch is great. Perfect. That's really funny. That just makes it feel more real, doesn't it? Eh, I want you to create a new repo for me here. Put it inside the research directory as a markdown file. I want you to specifically focus on the valuable stuff that we learned here on the differences between the coding agents and the fact that we'll need to take different approaches in recording their data and uploading them somewhere.

Cool. There we go. Slopwatch. Incredible. So, let's just walk through what we did there, which is over the past... how long have we been going? Like 40 minutes. Hey, we've gone from idea to essentially a research document. That's sort of where we're at. And this, I think, is the way that I treat greenfield projects: until you get to code, until you start putting PRDs down, until you start working through your ideas properly, seeing your ideas reified into code, you're really just working with research.

That's what you do. It's a research task. Registering the Slopwatch domain name. It's the danger of doing this stuff. Actually, I remember I was streaming something about ts-reset, and someone nabbed the package name from me. I use Whisper Flow. That is not a Garmin. That is a Pixel Watch. It's got loads of scratches on it. Very annoying. How do I get Claude to focus on System D's scalability to a million users? Uh, well, scalability to a million users is a hard problem. So, once you realize how difficult the problems are that you're asking Claude to do, um, oh, slop cop.

Slop cop. That's good. You'll realize that maybe you should just, um... AI is going to do better when you help it. So yeah, I think human plus AI is always going to outperform AI, no matter how good AI gets. Pebble 2. Hey, we had a Pebble 2. Okay, so we've got: you initialized repos AI slopwatch as a git repo and we wrote research coding agent ingestion. Okay. Um, so let's cancel out of this. So, we're going to code up repos AI slopwatch. Uh, I'll push it to GitHub as well, so we get it.

Uh, did I get any update from Anthropic around the terms of use question you've been waiting over a month for? I did not. No, Anthropic are still ghosting me on that one. Um, okie dokie. So, we got a README: Slopwatch. I like that. Extremely low effort. It's rare you see coding agents put that little effort into a README. That really makes it funny. Uh, okay. Headline finding. I generally don't read these research documents. The important thing for me is the conclusions that we came to. I'm going to commit this. I think I'm just going to use, um, GitHub Copilot setup, and let's publish.

So, Slopwatch heading to GitHub, public. Exactly. Help the AI help you. Um, okay. So, I didn't want to do too much on this stream because this is a great idea. I'm really glad I came on stream. Slopwatch is a really, really funny name, first of all, and it just crystallizes the idea, and that's awesome. What I want to do is, I think, set up the repo. I want to set up the repo. And for that, we're going to enter a new grilling session. For long-term agent memory, I think long-term agent memory is sort of a bad idea.

You want something that's super observable, that's super concrete, that you can edit immediately, um, and that you can fit into context window. I think so. My current idea for this, which is really only germinating this week, and that I will be testing on this project, is using ADRs, architectural decision records, and using um, a sort of minimal version of DDD domain driven design. So yeah, let's set up the repo because that's going to be boring to watch on a video and we've got nearly 300 of you guys here. We're going to use a fresh context window here and we're going to do another grilling session.

I think I'm going to go for a, um, this is my new version of grill me. It's not got a very good name. It's just called domain model. Um, but it's essentially grilling an orbit name. I want to set up this repo to be a TypeScript repo. Actually, no, we're not there yet. We can't figure out the stack yet because we haven't figured out the shape of the thing that we're building. So what I need to do is I'm going to do some domain modeling here, and I'm just going to pass in research coding agent ingestion.

So I'm just going to what I've done is I've taken all of the stuff that was in our previous context window and I've moved it essentially summarized it compacted it into a research document that's going to persist in my system and then we're going to move on from there. It's obviously going to be TypeScript, but the shape of it is up for question. I'd like to talk about the potential architecture for this thing that I'm trying to build. I don't have a strong idea in terms of what the different deployable units are. And I know that I'll need some kind of front end.

I know that I want it to be pluggable so that people can host their own version of this. I'm not really that interested in producing kind of centralized service unless it produces a really good DX for people. I'm not interested in being in handling people's data. I want people to own their own data and own their own storage mechanisms for this. I'm imagining there will be some kind of CLI component to this that you run locally or perhaps a desktop application. I'm really uh totally open to the idea. I mean, this is wide open. We could choose many potential different things here.

Maybe a desktop app is like the most sensible idea because we obviously need some kind of persistent API, some sort of port running locally that's going to capture all of the data. Then we need something to sort of... Okay. Well, let's see what it says. Who is the primary user of Slopwatch? A solo developer watching their own agents, or a team or org watching many developers' agents? We sort of had this, didn't we? Yeah, we sort of had this. Business-wise, Matt, have you found more people follow your funnels and become customers today as compared to the TypeScript courses days?

Are they more inclined to learn on their own using LLMs? Um, my Claude Code course was the most successful course I've ever run. So, um, I'm happy in terms of where I am, where I'm positioned in this market. Um, and I feel like there's a lot of good stuff for teachers to do here because people are using these tools wrong. Um, right, we already answered this, right? You know what I'm going to do? I haven't done this before, but I think this is funny. I'm going to run Claude back in my previous setup here.

I'm going to resume the conversation that we had here. And then I'm going to say to it, I just got asked this by another Claude Code session. How would you answer this based on my answers before in this conversation? So, I'm basically going to get one Claude Code to answer another. That's funny. The reason I have to do this is because this conversation was outside of the repo where I'm now working. A peanut gallery. That's a great one. I don't have access to auto mode. Here we go. So, that's good. I'm not going to read it.

I'm just going to assume it got it right. I already answered this in another chat. I asked that agent and this is what that agent said. There we go. Bye, Colin. Have a good night. Okay, it's figured it out. Uh oh, yes. Okay. No, I don't think this is right. No, don't be so eager to create a context.md file. Yeah, I'm experimenting with effort. Um, Claude released xhigh mode, and the new default in Claude Code is xhigh. I can reference another session so the agent can get its context.

Um, but that only works if they're in the same directory. We are building a coding agent observability tool. Really dreading AI coding until recently and your content helped me get there. Cool. I'm really happy to hear that. Okay. Given team/org, self-hosted with mandatory on-prem backend, live spectate, the next fork is about the capture side. What runs on each developer's machine? Whoopsie. Whoa. Hello, Claude. Come on. Update your blooming ghetto UI is terrible. Is terrible. Okie dokie. Is the on-machine capture component a long-running background daemon or a per-session process that starts and exits with each agent session?

Um, it's a good question. A long-running daemon that watches all configured agents continuously, maintains FS watches on JSON. Yeah. Yeah. Right. Because in our research we found that just using hooks was not enough. Um, because, okay, I'm going to explain this. In the research we found that hooks were not enough. The cool thing about the hooks model is that you get to totally delegate the process running down to the agent that's downstream, and the agent that's downstream just gets to ping network requests off and we don't need to run a separate process. This potentially means that you could have a process running on a remote server that ingests the information and stores it.

What I'm worried about is that people will forget to run this daemon somehow and then they... although maybe we could start the daemon via hooks. How much am I spending? For a while, I was on, um, Max 5x, which is $90 a month, $100 a month, and now I'm on, um, 20x, which is $200 a month. Claude's UI is perfect. 4.7 x should burn tokens approx 4.6 high. That's interesting. 4.7 seems like a junior with a dangerous amount of technical knowledge, guessing at a fix until the build succeeds and assuming that that means the program is correct.

I think people overcharacterize LLMs, um, and anthropomorphize LLMs too much. You know, people say, oh yeah, 4.6 feels different to 4.7, that kind of thing. I always think that we can't really get a representative sample. You know, um, everything that we do is sort of tainted by our own process and tainted by our own experience with these LLMs. So, I think people put too much stock in that. Okay, here we go. So, you're right. I think my recommendation was wrong. Let me refactor the insight you're pointing out. The hook is the trigger that starts a process.

So, we don't need a pre-existing always-on daemon. Yes, that dissolves the "people forget to run it" problem because the hook configuration is the install. But the pure "hook fires, curls backend, exits" model isn't enough. The research already established that hooks don't carry full message content, and live spectate... So it's a per-session capture process spawned by a hook, not a machine-wide daemon. Yes, that sounds right. Session start hook fires slopwatch capture. Uh-huh. Only for the lifetime of one session. Yes. Cool. From the user's perspective, this is daemonless. They configure hooks and extensions once. Yes, that's good. Yes, that's good.

Yes, that's good. The one thing that gets slightly awkward: if you have two Claude Code sessions running concurrently, you have two different subprocesses. They're cheap and independent. Yes, this is good. This is good. Yeah, I like this. Does this per-session subprocess, spawned by a hook, match your instinct? Yes, it does. Am I okay that for Claude Code and Codex there is a short-lived Slopwatch process? Yes, that's fine. I really like this. You've done a good job here. Having essentially a sidecar process is a really nice model. I like that phrase, too. Sidecar process. I'm testing out medium.
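To make the sidecar lifecycle concrete, here is a minimal sketch of the bookkeeping it implies: one sidecar per agent session, started by a session-start hook and gone when the session ends, with no machine-wide daemon. The event shape and the `SidecarTable` name are assumptions for illustration, not any agent's real hook payload or Slopwatch code.

```typescript
// Hypothetical hook events. Real agents each have their own payload shapes.
type HookEvent =
  | { kind: "session-start"; sessionId: string; agent: string }
  | { kind: "session-end"; sessionId: string };

interface Sidecar {
  sessionId: string;
  agent: string;
}

// Tracks which sidecars are alive. When no agent session is running,
// the table is empty: nothing stays resident on the machine.
class SidecarTable {
  private live: Record<string, Sidecar> = Object.create(null);

  handle(event: HookEvent): void {
    if (event.kind === "session-start") {
      // Idempotent: a repeated start hook for the same session spawns nothing new.
      if (!(event.sessionId in this.live)) {
        this.live[event.sessionId] = { sessionId: event.sessionId, agent: event.agent };
      }
    } else {
      // Session ended: its sidecar exits with it.
      delete this.live[event.sessionId];
    }
  }

  liveSessionIds(): string[] {
    return Object.keys(this.live).sort();
  }
}
```

Two concurrent sessions simply produce two independent entries, which matches the "cheap and independent" subprocesses described above.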

Yeah, extra high is only for Opus. That's right. Demonic plugins. What you talking about? Slobing away. All right. How does a session get attached to a developer's identity and how does the sidecar authenticate to the back end? So, okay. One thing to notice when you start doing grilling sessions is when language starts to calcify, when you start seeing different terms be used here. So the sidecar is now our terminology for this process that's running, not inside, but next to the Claude Code process. So that's just something to bear in mind and something I like to capture as part of my development. Have I got the context window always displayed?

If you search for uh where is it AI hero claude code status line then you will find it. So this is uh some this is an article you can pass into your LLM to get this beautiful status line down the bottom which is really nice. Look at me in my nice shirt with no beard. Okie dokie. Huh? Huh, this is smart. So, it's asking me um how should we attach a session to a developer's identity? And it's given me some smart ideas here. So, it's going for first of all git config. So, it's zero setup.

It just reads the user email at spawn, and it's trivially spoofable. Doesn't match the org's identity system. Yeah, that's interesting. Explicit login during npx slopwatch install. Yes. And again we have a kind of install thing calcifying. Let's see. Yeah. So the sidecar reads a token from here. Token is minted by the back end when the dev authenticates once. Yeah. Yeah. Yeah. So I guess we're starting to get somewhere. We're starting to see something kind of come out of the mist here, because all they need to run locally, they just need to run npx slopwatch install, and then somewhere in some other process there's probably something deployed somewhere that they can do, what do you call it, um, OAuth with. Yeah, OAuth device flow against the org's configured IDP, with a long-lived refresh token stored in the OS's keychain.

Yeah. Cool. Cool. The back end becomes an OIDC... Okay. Now, I'm starting to be a little bit freaked out by the language it's using. So, I don't know what an OIDC is. You may do. I don't. So, I'm just going to do a little zoom out, which is one of my skills. Tell me a bit more about what OIDC is and what an IDP is. I'm a little bit out of my depth here. Zoom out is just a teeny tiny skill, kind of like grill me, that just gets it to kind of like do an explain like I'm five.

So, what's it going to say? It's taking its time. All right. Org's IDP, I suppose identity provider. It speaks OIDC. The OIDC protocol links to the Slopwatch CLI and the sidecar on the dev's machine and the Slopwatch... Yeah. Oh, I see. Here we go. So, it's given us all this. Ah, I see. Nice. I only saw the thing at the bottom. So, yeah, IDP is the identity provider, the service that actually stores who works at your company and authenticates them. In a typical org, one IDP is the source of truth for "does this person exist?"

Yes. Cool. Um, GitHub or Auth0. Yeah, cool. Yeah, I don't know if we want to, like, I mean this is big. This is a big feature. Don't know if we want to do something simpler than this authentication. And this is OpenID Connect. I see. I've heard of that. So the flow, stripped of jargon: user opens Slopwatch. Slopwatch redirects them to this login. User logs in there. Okta redirects them back to Slopwatch carrying a signed token. Yes, that makes sense. Slopwatch verifies the signature against Okta's public key and trusts the claim. Cool. Very cool. Yeah, OIDC is built on top of OAuth 2.0.

More standardized way. Okay. I've used OAuth a bunch of times. I just didn't know what OIDC did. Got you. Normal OIDC assumes there's a browser. CLIs don't have one reliably. Yeah, cool. Here we go. This is what you've got to do to log in, like GitHub and stuff. Slopwatch login calls the IDP, gets back a short code. CLI prints "go to this thing, enter this code". User does that in their laptop browser. CLI polls the IDP every few seconds, "done yet?", until the user completes the browser step. Beautiful, beautiful, beautiful. Yeah, there you go.
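The "done yet?" polling step can be sketched as a small decision function. This is an illustration, not Slopwatch code: the error codes are the ones the OAuth 2.0 device authorization grant (RFC 8628) defines for the token endpoint, while the `DeviceTokenResponse` and `PollDecision` types and the `decideNextPoll` name are made up for this example.

```typescript
// What the CLI sees each time it asks the IDP's token endpoint "done yet?".
type DeviceTokenResponse =
  | { kind: "token"; accessToken: string }
  | { kind: "error"; error: "authorization_pending" | "slow_down" | "access_denied" | "expired_token" };

type PollDecision =
  | { action: "done"; accessToken: string }
  | { action: "wait"; intervalSeconds: number }
  | { action: "fail"; reason: string };

// Decides what the CLI should do after one poll, given the current polling interval.
function decideNextPoll(response: DeviceTokenResponse, intervalSeconds: number): PollDecision {
  if (response.kind === "token") {
    // The user finished the browser step.
    return { action: "done", accessToken: response.accessToken };
  }
  if (response.error === "authorization_pending") {
    // User has not entered the code yet: keep polling at the same pace.
    return { action: "wait", intervalSeconds: intervalSeconds };
  }
  if (response.error === "slow_down") {
    // RFC 8628: the client must add 5 seconds to its interval.
    return { action: "wait", intervalSeconds: intervalSeconds + 5 };
  }
  // access_denied or expired_token: stop and make the user start over.
  return { action: "fail", reason: response.error };
}
```

A real CLI would wrap this in a loop that sleeps for `intervalSeconds` between requests and stops on "done" or "fail".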

Get all login. Nice. See you. Relying party, got you. Okay. So I suppose the question I have is, get out of here, do I need OAuth just to get something up and running locally? Yeah. My question here is that this seems pretty heavy, and I wonder if there's a kind of crappier version of auth that I can ship for version one just so we validate the idea early. I don't want to have to go through the whole rigmarole of doing this OAuth protocol if we can get something working for v1 a little bit simpler. The important part of this is that we validate the idea early.

Right? This is a classic software engineering principle. We could spec all of this stuff out, but we actually need to validate whether this is a decent idea. We need to see it working. We're in the abstract bit right now. WorkOS. I don't know what WorkOS... I've got some friends who work at WorkOS, but I don't know what it is. Oh, here we go. Ah, for God's sake, Claude Code. Okay, I can push OIDC to version two. Two genuinely light options. A: per-user admin-minted token. The admin runs the self-hosted back end, opens up a little admin page, clicks add user, types a name and email, gets back a one-time token string, hands that token to the dev over Slack or whatever.

Yes. Dev runs slopwatch login and pastes it. Sidecar stores it. Sends it as a bearer token on every request. The identity is trustworthy. Each token is bound to a user record. Cool. Yeah. So the admin creates the user, that has a user record that owns the token, and then that just pings down to Slopwatch. No IDP integration. Yep. Deprovisioning works because the admin just revokes the token. Yep. Upgrade path is clean. Yeah. Yep. Yep. Yep. Yep. One admin CRUD screen. Perfect. Yeah, that's nice. A day or two. I like that. That's like five minutes of work with AI.
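The admin-minted token option can be sketched as a tiny in-memory registry. Everything here is hypothetical (the `TokenRegistry` class, its method names, the in-memory storage); a real back end would persist this and generate tokens with a cryptographically secure random source, which is why the generator is passed in rather than hard-coded.

```typescript
interface UserRecord {
  id: string;
  name: string;
  email: string;
}

// In-memory stand-in for the admin CRUD screen plus the bearer-token check.
class TokenRegistry {
  private users: Record<string, UserRecord> = Object.create(null);
  private tokenToUserId: Record<string, string> = Object.create(null);
  private nextUserNumber = 1;
  private generateToken: () => string;

  // `generateToken` is injected; in production it should use a secure random source.
  constructor(generateToken: () => string) {
    this.generateToken = generateToken;
  }

  // Admin clicks "add user": creates the record and mints a token bound to it.
  addUser(name: string, email: string): { user: UserRecord; token: string } {
    const user: UserRecord = { id: "user-" + this.nextUserNumber++, name: name, email: email };
    const token = this.generateToken();
    this.users[user.id] = user;
    this.tokenToUserId[token] = user.id;
    return { user: user, token: token };
  }

  // Sidecar sends "Authorization: Bearer <token>" on every request.
  authenticate(authorizationHeader: string): UserRecord | null {
    const prefix = "Bearer ";
    if (authorizationHeader.indexOf(prefix) !== 0) return null;
    const userId = this.tokenToUserId[authorizationHeader.slice(prefix.length)];
    return userId === undefined ? null : this.users[userId];
  }

  // Deprovisioning: the admin revokes the token and the sidecar is locked out.
  revoke(token: string): void {
    delete this.tokenToUserId[token];
  }
}
```

Because each token maps to exactly one user record, swapping this for OIDC later only changes how the user record is looked up, which is the clean upgrade path mentioned above.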

I love that AI doesn't really understand how long AI takes to code something. Fascinating. Uh yeah, this is the one. This is the one. Option A is great. Is it one of Theo sponsors? Yeah. Yeah. Yeah. This this will um stay as a video for sure. Now, it's tricky because I don't want to go too far here because I want to this whole idea of this stream and the reason I came on stream is I want to turn this into a proper video where I actually go through and walk through this stuff. Ha, here we go.

What does "deploy a Slopwatch backend" look like for the org admin? One binary with everything embedded, a Docker Compose bundle, or a Kubernetes-shaped set of services? This is the question that shapes what a back end actually is. One process or several, what storage, how the front end is stored. A single binary, batteries included. Woof. So one Go or Rust executable. Woof. We might be heading into a Rust build. How about that? Did a major migration last night. It estimated we take four weeks. Upgrade: swap the binary. Storage: a DB file on disk.

Plausible self-hosted, Grafana Loki single binary. Huh. Could be. Yeah, I think I prefer a Postgres backend to an SQLite backend. I don't want to do Kubernetes. I think probably this is tricky, right? You can make single-binary TypeScript executables with Bun too. This could be a Bun build. This could be a Bun build. And when, um, what is it? When P4 is finished, then we can use P4 instead. P4 is crazy. Have you seen P4? And it's a terrible name. I don't know why they chose that name. Yeah. So, hybrid single binary by default. Mhm.

Mhm. Yes, hybrid single binary makes sense. I think pointing to a Postgres database also makes sense. I think that the database needs to outlive the changes to the application and I want it to be on a different deployable unit to wherever the binary is running. I mean, this is hardcore, you know. This is watch me work, right? That's the theory behind this stream and this is what I'm doing. This is how I work. I'm using 4.7. Yeah, it's doing good so far. I mean, I'm not really noticing a difference. It could be Bun.

Could be Bun. Could be Bun. Could be Bun. Because, I mean, it would need to be some kind of executable plus an application, right? But then the application... yeah, this is a question I have. Um, how does live spectate work server-side? How does an event from a sidecar reach a manager's browser tab in 100 milliseconds? The sidecar on Alice's machine posts events to the back end, and a manager watching Alice's session has a browser tab open. Something has to fan those events out from the ingest path to the watching tab. Now, I think it's going overkill here. Let's take a simpler approach here.

I want to focus on, um... I think we can do this with polling. I don't think it being as live as possible is really needed. Polling every 5 seconds or so, or whenever the user focuses back on the tab, is probably good. This is interesting. Like, we are really in blue sky territory here. So it's sort of going into funny implementation details. I'm on medium because I'm just testing it out. Yeah, exactly. tRPC or something. I mean, or yeah, this is interesting. We could build like a Rust back end, couldn't we? And a proper binary. I've never done a Rust project before.
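A minimal sketch of what polling instead of fan-out could look like on the back end: the watching tab remembers a cursor and asks for everything newer. The `EventLog` class, the `seq` cursor, and the field names are all assumptions for illustration, and the storage is in-memory only.

```typescript
interface SessionEvent {
  seq: number; // monotonically increasing across the whole log
  sessionId: string;
  payload: string;
}

// Append-only log that a polling client can read incrementally.
class EventLog {
  private events: SessionEvent[] = [];

  // Called on the ingest path when a sidecar posts an event.
  append(sessionId: string, payload: string): SessionEvent {
    const event: SessionEvent = { seq: this.events.length + 1, sessionId: sessionId, payload: payload };
    this.events.push(event);
    return event;
  }

  // Called by the browser tab every few seconds, or when the tab regains focus.
  // Returns events for one session newer than `cursor`, plus the cursor to send next time.
  since(sessionId: string, cursor: number): { events: SessionEvent[]; cursor: number } {
    const fresh = this.events.filter((e) => e.sessionId === sessionId && e.seq > cursor);
    const nextCursor = fresh.length > 0 ? fresh[fresh.length - 1].seq : cursor;
    return { events: fresh, cursor: nextCursor };
  }
}
```

On the client, a timer of roughly five seconds plus a tab-focus listener would both call the same `since` endpoint, so a missed tick costs nothing: the next call catches up from the stored cursor.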

It might be fun. Might be really fun, because we definitely need like an element of TypeScript and a front end here, but having an actual, you know, Rust project would be really fun. I don't know. Maybe I should just stick to what I know. What does the DRI review inbox actually contain and what's the session's lifecycle? Okay, so at this point I feel like I'm sort of sick of talking about the app now, because I'm starting to get a bit of brain burn. What I need to do is actually, I think I want to exit out of this and create a new research document based on what we found.

So I want to compact this session um because we're sort of going all over the place and then I want to start talking about the language because the language how we talk about the application is really important because the AI and I need to be synchronized in terms of the way we talk about it and there has been some really key terminology that's come out of this like the sidecar process like the um admin like the user you know that all that stuff. So, I think I want to exit the grilling session here and create another research document in this repo that captures all of the main decisions that we've made.

This obviously will be a partial one. It will have unresolved questions that I want to be able to pick up later. Let's do that first. Opus is good at Rust. Yeah, I'm pressing a key on my keyboard to trigger Whisper Flow. The grilling skill is crazy. We're doing a grilling right now. We're inside a grilling skill. I saw someone that said the grill me skill had asked them like 200 questions the other day. It's such a game. It's crazy good. It's crazy good. I'm just going to have a little sort of brain break and I'm just going to check WhatsApp for a second.

My son's with his parents and so uh um Oh, he's having a good time. Oh, sweetie. Done. Yeah. I mean, like there's there's only so much so much deciding you can do, Yeah. What what what I notice here is that we don't have like um natural um breaks. Coding was kind of like a break from making decisions. It feels what because when I do this solo, when I'm not streaming, I will tend to have two grilling sessions happening at the same time, you know, one in either terminal. And it's just, you know, you're constantly making decisions, making calls.

Um, and it's, you know, it's exhausting really. I'm bored of talking now. Get on with it. Yeah. I mean, you sort of need to uh at some point just call it on the deciding because really you can only make meaningful decisions when you're working with an actual asset. Um, just working in this kind of abstract space is not good. So, eight resolved decisions. Team or self-hosted or web visibility dest cool. Again, I'm not going to review this research document. I'm just going to trust it because I'm going like I think of um the PRD as like a decision documents basically um or sorry, a destination document.

The product requirements document kind of describes where we're going. This is not a PRD. This is just like research that I'm doing into the idea that I have. But what I want to do now is sharpen up the language. Two week break to refactor all the slop. I mean, you'll see. I mean, there's there's not a ton of slop that comes out of here. Would it make sense to do different grilling sessions for back end or another for front end and take each side one at a time? No. Absolutely not. uh back end and front end is an artificial decision.

The domain, the problem space is the entire thing. Backend and front end are just two um deployable units. Um and if they are like we separate them because traditionally we've organized companies around hiring front-end teams and hiring backend teams. That has always been a bad decision because it means that you get too focused on your domain and you don't think about the entire problem space. Um, it's not so much a road map document. I'll show you what I mean. Um, I just want to firm up the language. So, I want to firm up the language a little bit and take some of the terms that we've got so far and put them inside a context.md file.

Let's do that now. But grill me about each decision. I want to have a lot of control over this. So you'll see what I mean in a second. You have to answer all the nuanced details about every single thing that might not be of value on initial ideation. It depends. It depends, basically, because you want a lot of questions from the LLM so that you can tell if you're aligned or not. I mean, I can feel from this grilling session that I have a much clearer idea of what Slopwatch is going to be. Eddie, what a great name.

What a great name. Slopwatch. Absolutely killer. Okay. Um, so session. Ha, there we go. Starting with the low most. Okay. So, the point of this exercise that we're about to go on is that when we firm up the language now, or start thinking about language ideas, we can be so much more precise going forward. So, the first term it's proposing to me is session. A single continuous run of one coding agent from launch to exit, attached to one developer, one current working directory, one agent version. I love it. Yes.

Yes, you're capturing data from a session. Yes, I love it. Love it. Love it. And it directly maps to a sessions table. Notice how the language, the way we talk about the application, is so important here. If session is fuzzy, the whole data model is fuzzy. Um. Yes. Okay. Right. Right. So, there's some edge cases here. Oh, so good. So, resumes. Is a resumed session the same session as the original or a new one that points back to the parent? So good. Such a good edge case, because you're right. A session, it can have, you know, you can branch, right.

So yeah, again, we have forks here. How do you track that in a UI? Right. Dilly tally. Are you actually going to build something or is this pure vibe coding? Yeah, I'm going to build something. This is how I build stuff. No, no, this is ubiquitous language. This is ubiquitous language 100%. I think DDD is a fantastic match for AI coding 100%. So, Claude Code tasks spawn subagents that write their own JSONL files. Is each subagent's run a child session or part of the parent? Wow. Here's a little TypeScript wizard. What you saying?

Agent version. H. Wow. Brilliant. So, all of these are questions that are really hard to answer. Yeah. A fork. The the issue is forks, right? And branching inside the session. Because yeah, are we really going to track that in the UI? Because when we think of a session, we're thinking of like a big list of sessions that attach to the user. Feel like I might need to do a diagram here. Gosh, it's this is a hard call. New session with parent ID. Yeah. Yeah. Because the language we talk use here is so important. The forks one is really tricky and it's really hard for me to work that out.

Can you throw some scenarios at me so that we can road test that language more? So this is what DDD does, domain driven design. The theory behind it is, if you have a sort of disagreement about language, then you take some concrete scenarios and you sort of see which ones are easy to describe with the language that you're using. And that will usually map on to whether it's easier to code or not. Yeah, loves patterns. Goes so well with DDD, dependency injection. Yeah, totally. Okay, so scenario A, the explorer.

Here we go. Alice is refactoring an auth module in Pi. After 10 turns, she's at a decision point: rewrite with middleware or refactor in place. She branches. Tries middleware for eight turns. Doesn't like it. Branches back to the decision point. Tries in place for 12 turns. Ships it. The middleware branch is abandoned. So is that three sessions or one session with a directed acyclic graph? Because, yeah, there's a concept of sessions here and then turns within that session, and maybe the turns are sort of branching points, right. Yeah, we are, um, saving live streams on the channel.

The middleware branch cost real tokens and produced real artifacts. Files were written, then rewound. Does the DRI inbox show all three? Does Alice's session count this week show plus three or plus one? Brilliant. Okay. Scenario B. Bob is using Pi. 40 turns in, he rewinds two turns to rephrase a prompt. Pi treats this as a branch. The old two turns still exist on the... By the way, if you don't know what DAG is: directed acyclic graph. How did Claude get detailed info? We did a research phase earlier where we looked at coding agent ingestion, and we basically did a bunch of research, went and did some documents, and then it has all this stuff in its context.

You're right. I am a little bit suspicious about its understanding that it does this, but I'm willing to just sort of take it on faith. Yeah. So I think we need a concept of a turn inside a session. Carol is DRI. Dave is in Pi. Carol opens Dave's live spectate view. Dave branches. Now Dave is on branch B. Branch A still exists in the tree. Carol's tab is polling. Yeah. Which session is ID? Yes. Yes. Yes. Yes. Yes. Let's think about there being sessions, and then within sessions there are turns. Turns map on to API calls that agents make to the back end, or maybe, I mean, we can argue about terms, there might be some other term there that we use. But so a session can contain the directed acyclic graph of all of the branches that were created in the tree.
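Scenario A can be written down directly in the "one session, many turns" language. This is a sketch under assumptions: the `Turn` shape with a single `parentTurnId` and the helper names are invented here, and with one parent per turn the graph is really a tree, the simplest kind of DAG.

```typescript
interface Turn {
  id: string;
  parentTurnId: string | null; // null only for the first turn of the session
}

// Appends `count` turns in a straight line under `parent`.
function extendBranch(label: string, count: number, parent: string | null): Turn[] {
  const turns: Turn[] = [];
  let prev = parent;
  for (let i = 1; i <= count; i++) {
    const id = label + "-" + i;
    turns.push({ id: id, parentTurnId: prev });
    prev = id;
  }
  return turns;
}

// Branch tips: turns that no other turn names as its parent.
function branchTips(turns: Turn[]): string[] {
  const hasChild: Record<string, boolean> = Object.create(null);
  for (const t of turns) {
    if (t.parentTurnId !== null) hasChild[t.parentTurnId] = true;
  }
  return turns.filter((t) => !hasChild[t.id]).map((t) => t.id);
}

// Walks parent links from a tip back to the first turn of the session.
function pathToRoot(turns: Turn[], tipId: string): string[] {
  const byId: Record<string, Turn> = Object.create(null);
  for (const t of turns) byId[t.id] = t;
  const path: string[] = [];
  let current: string | null = tipId;
  while (current !== null) {
    const turn: Turn | undefined = byId[current];
    if (turn === undefined) throw new Error("unknown turn: " + current);
    path.push(current);
    current = turn.parentTurnId;
  }
  return path.reverse();
}

// Alice: 10 turns, then an 8-turn middleware branch and a 12-turn in-place branch.
const trunk = extendBranch("trunk", 10, null);
const middlewareBranch = extendBranch("middleware", 8, "trunk-10");
const inPlaceBranch = extendBranch("in-place", 12, "trunk-10");
const aliceSession = trunk.concat(middlewareBranch, inPlaceBranch);
```

Under this model Alice's week shows one session containing 30 turns and two branch tips, and the shipped work is the 22-turn path ending at `in-place-12`. The abandoned middleware tokens are still attributable to the same session.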

Does that make sense? I mean, this is 4.7, so I assume its knowledge cutoff is fairly up to date, but yeah, I don't know. I mean, Pi's existed for a little while. I was actually at a conference recently, I was sat across from Mario, the guy who created Pi. I didn't realize who he was and I actually didn't talk to him for the entire time. The only time we chatted was, um, this was at the speakers' dinner for AI Engineer London, and he said, um, what is that? And pointed to a plate of food. And is that for everybody or just you?

Obviously I was being very covetous over the butter chicken. Okay, here we go. Good move. Let me lock in the DAG-inside-session model and probe two residual fuzziness points. The new shape: session is the logical run. One row, contains a DAG of turns. Turn is a node in that DAG. Yeah. Yeah. Yeah. Has a parent turn ID. Most agents produce a degenerate DAG, a straight line. Only Pi produces real branches. Don't know about that. Two things are still fuzzy. Turn terminology. You said turns map onto API calls. That's not right in standard agent parlance, and the gap matters.

In most agents' own docs, a turn means one user message plus the full assistant response. Yes, that makes sense. What you called an API call is a model request. I see. I see. Here we go. A turn versus a model request. Yes, that makes sense. What about resumes and subagents? Now that we have the DAG model, the DAG resolves forks but not these. Okay. Codex resume: context was compacted and reconstructed. Is it the same session, just a resume marker turn in the DAG, or a new session with a parent session ID? Hm. This is really hard, man. What are your picks?
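The turn versus model request distinction is easy to pin down as types. This is a hypothetical sketch (the field names and the `turnTokenTotal` helper are assumptions): one turn holds the user's message and every model request the agent made while producing the full response to it.

```typescript
// One call from the agent to a model API.
interface ModelRequest {
  model: string;
  inputTokens: number;
  outputTokens: number;
}

// One user message plus the full assistant response, which may take many model requests.
interface TurnRecord {
  id: string;
  parentTurnId: string | null;
  userMessage: string;
  modelRequests: ModelRequest[];
}

// Token usage rolls up from model requests to the turn that caused them.
function turnTokenTotal(turn: TurnRecord): number {
  let total = 0;
  for (const request of turn.modelRequests) {
    total += request.inputTokens + request.outputTokens;
  }
  return total;
}
```

Keeping the two levels separate means token counts can be reported per turn in the UI while still being recorded per request.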

Subagents should be child sessions with a parent session ID. That's for sure. Hm, yeah, I don't know about compaction. We are building a coding agent observability tool, and the thing we're working on here is: when you're in a coding agent session and you compact, how should that show in the imaginary UI? Uh I imagine memor is not the same artifact stitching session. I mean, this is something that's so hard to answer because we're in such an abstract space. I think we're going to need to figure that out in implementation.

So I'll just say that. I'll see what it says. The resume one is really hard for me to figure out while we're still in this abstract mode. I feel like I'm going to need to see a basic version of this working first before I can make any reasonable calls. We're building Slopwatch. We're building an agent observability platform for either individuals or teams. Genius name. Genius name. Resume stays open until you've seen real data. Yep. Okay, so it's now writing a file to disk, which is going to basically be, um, here. Uh, let's make this edit context and let's just review it then.

This is a file that I'm now using in most of my projects. I don't like this. This really... Okay, let's slightly update the... Okay, essentially what it's got here is a glossary, which in DDD terms will be a ubiquitous language. So we have a session, which is the logical run of one coding agent attached to one developer and one current working directory. A session contains a directed acyclic graph of turns. It's not done a good job in formatting this. Actually, you're not using the formatting that's specified in the skill. Can you go back to it?

Uh, not skill.md, just skill. All the coding agents model things differently. Get to rediscover all their opinions. Yeah. How do you manage AI not doing crap code, like 500-line files, bad separation of concerns, junior code sometimes? Um, well, I mean, that's a pretty big question. The answer is that you bake in, uh, what I'd say is, number one, you've got to align yourself with the AI, right? You've got to make sure that you and the AI are aligned in what you're building. Second is you build in architectural awareness from day one.

You get it to specifically tell you all the modules it's going to build uh and you have some control over the modules. And then you uh you add automated review. And then periodically you review the codebase with an AI next to you using my improve your codebase skill which not only improves the architecture of your codebase, makes it easier to change, but makes the feedback loops better for the AI so that it's not producing crap code or code that doesn't work. Yeah, a DAG is for branching sessions. Here we go. This is better. So it's actually using the the right format now.

So okay, we've got the language: first, a coding agent. We've then got a session, which is one logical run of any coding agent. We've then got a turn: one user message plus the full assistant response. We've then got a model request: one HTTP call the agent makes to the model provider during a turn. Okay, cool. So these are starting to be sort of entities within our database. I still sort of use Ralph loops. Um, I'm using Sand Castle now. Yep, this VOD will be available afterwards. Cool. This is good. I'd like to discuss some of the architectural stuff here, such as the sidecar, the binary, if we want to call it that.

Yeah. Now, when we get to building stuff, I'll show you, um, my automated review step. You would need an agent observability platform so that you can get insights as to what your agents are doing. So, you know, how many tokens you're spending, how much context window your AFK agents especially are using. And also, if you're a team leader, you want to know how your team is using agents, and you might want to compare the sessions using one model against the sessions using another model. Yeah, we're grilling about Slopwatch.

You got vendor locked inside Lovable Cloud. For some reason, that's just a strange image in my head. Uh, how to migrate from Lovable Cloud to Supabase without losing data? Um, no. I would ask Claude, uh, using grill me. If they're both Postgres, you might be fine. Uh, okay. Grilling the on-machine capture thing. The naming is harder than it looks because there are two physical forms. Brilliant. Uh, the naming trap. The thing has two forms based on the project. It's either a subprocess or in-process code, a TypeScript extension or a JS plugin.

Cool. We need one umbrella term for the thing that captures the events on the dev's machine. Yeah. Yeah, this is cool. So, we've either got a sidecar or an adapter, a collector. Yeah, it's a bit too hotel flavored. The capture. Capture, is that right? The capture runs on the developer's machine. Okay. Interesting. So the AI doesn't like sidecar as the process. Um, if you're tuning in late, this is the process that essentially runs next to the coding agent, captures the data that the coding agent is putting out, and sends it to the deployed back end, which we also need to name. Capture.

Capture, surely. Can you use capture like that? Is it not capturer? Listener. Yeah, I suppose listener is good. Listener is probably good. I like listener. I like listener. Using Sand Castle. Sweet. We like Matt Pocock. That's nice. I don't like capture on its own. Ingestion pipeline, that sort of refers to the entire thing. This is like one small part of the, um, ingestor, I suppose. Capturer, adapter, tap. Hm. Claude Code tap: sits on the pipe and siphons off a copy. That's quite nice. Capture as a count noun is grammatically iffy. I don't like that agent.

That's not going to work for us. Capturer. What about listener? Watcher. Makes sense. Watcher makes sense. Watcher. Listener. Seer. Oracle. Listener, collector, maybe collector. I feel like it said collector, didn't it? "If it's my call, I'd order them..." Let's have a look. Listener is already a term of art inside the agents themselves; Pi's extension API is already this. Okay, so it's worried about collision between our terminology and the other terminology. Who watches the watcher? Who watches the watcher? Blandness. Yeah, it is too bland, isn't it? Voyeur, snitch, peeper. The in-process peeper. You dirty little in-process peeper.

feel like because we're uh that would be funny actually just to have I think maybe people should name like they should have funny language to talk about there. Uh yeah, I like it too. AI assisted dev is actually really fun for streams. Spy spy. What about peeper? A dirty little one. Taylor. Taylor. Dirty little peeper. Yeah, I'm still thinking that 100k tokens is still the smart zone. Producer is not quite right. Stalker. Stalker. Two stalkers immediately. Grammatically it works. It's vivid. No collision. But here's the honest problem. Uh, asking devs. Oh god, it's given me such a serious answer.

I'm going to have to go back. Um, I think probably tap. Actually, I'm No, no. I'm just going to say listener. Listener is fine for now. We can fix this later. Like it's We just need to get to good enough here, right? We don't want to just like bike shed about this bloody language all day. Yeah, I undid it by um pressing escape twice and went um went back to an earlier turn. I mean, I enjoy my usual coding sessions, too. Um, what's different is that I usually run two of these at the same time, so I just try to burn myself out properly.

Yeah, here we go. Number four is the server-side binary. You called it the binary in your last message. Um, proposed candidates: the server, the back end, the hub, the collector. The collector's pretty good. I like that. I mean, the server, you know, that's it. It's the Slopwatch server, the wire. Let's get on with the implementation. We are so far away from implementation. Believe me, we are so far away. Uh, we need to figure out where we're going first. So, Slopwatch. Yeah, I think the server. I think server's great. Observer, the sensor, the wiretapper. Server is great.

I'm using Opus. Opus 4.7. Mhm. Mhm. Mhm. Mhm. Great. I think that is good for now. So, we've got the coding agent. So, let's try this out. We're now talking about this imaginary system that we've not created yet. Um, let me try to explain it to you using this language. So, Slopwatch is a self-hosted, um, on-premises observability platform for coding agents. That's good. You take your coding agent and the user runs a listener next to the coding agent. That listener reports, um, information about sessions to the server, and the server is a self-hosted process that receives events from the listeners, stores them in Postgres, serves a dashboard, and hosts the admin plane, one per organization.

That's what we're building. So those are the relationships. Uh, I like these example dialogues as well. "This session cost $14. Where did it go?" "Most of it was on one turn where the agent did 22 tool calls. Each was a model request charged separately. The rest is a subagent I spawned with a task tool. It's a child session with its own cost." Cool. I love that. So, that's feeling pretty good. We've now got a shape here. We've got a local binary and we've got a... Hey, Kieran. Um, we've got a backend server. Mhm.
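The example dialogue can be sketched as a cost rollup over sessions, turns, and model requests. This is a rough TypeScript illustration under stated assumptions: the type names, the `costCents` field, and the per-request prices are all invented here so that the numbers add up to the $14 in the dialogue; none of it comes from the actual project.

```typescript
// Hypothetical shapes and made-up prices, for illustration only.
interface ModelRequest {
  costCents: number; // integer cents to avoid floating-point drift
}

interface Turn {
  id: string;
  modelRequests: ModelRequest[];
}

interface Session {
  id: string;
  parentSessionId: string | null; // set for subagent (child) sessions
  turns: Turn[];
}

// Cost of one turn: the sum of its model requests.
function turnCost(turn: Turn): number {
  return turn.modelRequests.reduce((sum, req) => sum + req.costCents, 0);
}

// Cost of a session's own turns, excluding child sessions.
function ownCost(session: Session): number {
  return session.turns.reduce((sum, turn) => sum + turnCost(turn), 0);
}

// Total cost: own turns plus every descendant child session.
function totalCost(session: Session, all: Session[]): number {
  const children = all.filter((s) => s.parentSessionId === session.id);
  return (
    ownCost(session) +
    children.reduce((sum, child) => sum + totalCost(child, all), 0)
  );
}

// The turn that answers "where did it go?".
function mostExpensiveTurn(session: Session): Turn | undefined {
  let best: Turn | undefined;
  for (const turn of session.turns) {
    if (best === undefined || turnCost(turn) > turnCost(best)) best = turn;
  }
  return best;
}

const mainSession: Session = {
  id: "main",
  parentSessionId: null,
  turns: [
    { id: "t1", modelRequests: [{ costCents: 100 }] },
    // One turn with 22 tool calls, each a separately charged model request.
    { id: "t2", modelRequests: Array.from({ length: 22 }, () => ({ costCents: 50 })) },
  ],
};

const subagentSession: Session = {
  id: "sub",
  parentSessionId: "main",
  turns: [{ id: "s1", modelRequests: [{ costCents: 200 }] }],
};

const allSessions = [mainSession, subagentSession];

console.log(totalCost(mainSession, allSessions)); // 1400 cents, i.e. $14
console.log(mostExpensiveTurn(mainSession)?.id); // "t2"
```

Keeping the subagent as its own session with a `parentSessionId` means its cost can be shown separately or rolled up into the parent, which is the distinction the dialogue draws.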

Mhm. Um, did we? Yeah, we did some V1 architectural decisions. Okay. Okay. I want to wind up this session because we're nearly heading to the dumb zone. I want you to take a look at the research in V1 architectural decisions and check if there's anything that we need to add from uh this conversation. I mean, we are we're getting close to building. We're getting close to something that we can build here. We've got the language is starting to shape up. We're understanding all of the different deployable units. Um the theory, Valera, is that you would um install a plugin.

I suppose we need to add that to the docs as well, that would run the listener for you in a hook. Yeah, that's the theory. So, there are a couple of absolutely beasty grilling sessions here. And that's very reminiscent of the way I code. Any advice for graduating computer science students? Um, I don't think so. I never graduated computer science, so you're doing better than I did. I graduated with a drama degree and a master's in voice and singing. Okay, cool. That's looking good. So, let's commit this. Again, I don't read the research files.

It's just a waste of time. Um, but I do like to read the context.md file. Now, we're getting somewhere. We're getting towards something real, and we're also getting towards, um, the end of my time. So, thank you for helping me choose a project. This is a really cool idea. I think it's got a, I mean, it's got a great name. We've got a basic architecture and we've grilled our way towards something that feels pretty good. All we've done here is we've basically created two research documents, one looking at how different coding agents work in terms of ingestion and the second looking at, um, sort of creating some architectural decisions and understanding the basic architecture.

The main one is that we've hammered out some language here and we're now this is kind of like a um domain modeling session really that we've done. Uh I think what I'll do is I'll probably post a recap on this um recapping what we've created and then going from there. I don't want you know Hey Raphael, nice to see you. Uh I don't want to We really are just wrapping up. Raphael, sorry. yeah, I uh I want to mostly do this on videos. I don't know how long this project will take. Um, all I want is a project that I can work on that um is something I can make content out of essentially.

So, I mean, I've got a few minutes left. Has anyone got any questions about my general process in terms of what I've been doing recently and anything like that? I'll probably finish on the hour. So, we've got about seven minutes left. It's only just hit your feed watching Silicon Valley for the 12th time. I've never seen Silicon Valley. Never seen it. I need to watch it. I know I do. Everyone tells me I need to watch it. 85k tokens is 9% the daily limit. No, that's um the amount uh I have left in the context or sorry, the amount um the amount of context I've used up.

I'm on 1 million Opus. Did I just use Claude to create these empty files? Yeah, if you go back in the stream, we've just been basically grilling, only grilling, for the last two hours really, or however long I've been on. Hour 43, which is very, you know, that's what I do when I code. So, um, these research files are basically just compacted versions of the conversations that I've had. Anyone know how to turn on auto or bypass in Claude Code desktop? I don't. Since you're using speech to text, I'm surprised you don't clean up the prompts much to reduce token usage.

Is it not really worth it? Absolutely not worth it. No, people are way too anal about, um, about token usage in my opinion, especially input token usage. Input tokens are incredibly cheap, extraordinarily cheap. Output tokens are more expensive. I'm using Whisper Flow to dictate. How did 250 people know about the stream? Um, I posted it on Twitter, I think. When will you show the Sand Castle run? When we've got something to build. We've got nothing to build yet. Thank you. Thank you, Rafael. I'm glad you're enjoying using my workflow. I appreciate it. What tools am I using to avoid the agents from not listening?

Committing with no verify despite me telling it 10 times not to. Um, I don't tend to get those issues. Um, you might need to be more specific. Uh, your focus on terminology is a step I often skip, but you've made me reconsider that it's important to streamline communication. It's not only that. It's sort of bringing the language is how you bring a program into life. I now feel like most of this is on rails because we've figured out exactly what we're building and we've given them each term names. Obviously, you can bike shed names to death and we've done a fair bit of that on this stream.

Um, I probably should have cut some of it short and got a bit more efficient with it, but yeah, we've landed on something that works, right? Use Copilot so you don't have to worry about tokens. Not yet. Can you elaborate on token usage and how to maximize it? How do you mean? What, like use more tokens and just run a load of stuff in parallel? Is open code go models able to produce decent code? Not sure. Not checked. How do I make Claude more submissive? I don't know, man. It seems, uh, a bit of a fetish going on there.